Key takeaways

- Small teams in 2025 leaned on affordable, all-in-one tools that combined content creation with basic AI visibility tracking, keeping monthly spend in the $150-$400 range.

- Enterprise teams ran layered stacks: separate tools for technical SEO, AI crawler monitoring, content gap analysis, and multi-model visibility tracking across 10+ AI engines.

- The biggest gap wasn't budget -- it was the "action loop." Teams that found gaps, created content to fill them, and tracked the results outperformed teams that only monitored their visibility scores.

- Ranking in ChatGPT requires a fundamentally different approach than ranking in Google. Citation-worthy content, structured data, and topical authority matter far more than raw backlink counts.

- In 2026, the divide is widening. Teams without dedicated AI visibility tooling are losing ground fast to competitors who've been optimizing for AI search for 12+ months.

The way teams approached SEO in 2025 split into two very different stories. One group kept doing what worked in 2022 -- keyword research, content briefs, rank tracking -- and watched their traffic plateau or drop. The other group rebuilt their stacks around a new reality: a growing share of their potential customers weren't clicking Google results at all. They were asking ChatGPT, Perplexity, or Gemini for recommendations, and getting answers that cited specific brands and pages.

This guide breaks down exactly what each type of team did, what tools they used, and what actually moved the needle.

Why ChatGPT ranking is different from Google ranking

Before getting into the stacks, it's worth being clear about what "ranking in ChatGPT" actually means -- because it's not a position on a results page.

When someone asks ChatGPT "what's the best project management software for remote teams," the model generates a response that may or may not mention your brand. Whether you appear depends on what the model has learned from its training data and, increasingly, what it finds via web browsing. Getting cited consistently means your content needs to be:

- Authoritative and specific enough that AI models treat it as a reliable source

- Structured so it's easy for a model to extract and quote

- Present on platforms AI models actively crawl (your site, Reddit, YouTube, review sites)

- Covering the exact questions and angles that users are prompting about

That last point is where most teams fell short in 2025. They were optimizing for keywords they already knew about, not for the specific prompts their target customers were typing into ChatGPT.
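One common way to make a page "structured so it's easy to extract" is schema.org markup built around the questions users actually ask. The snippet below is a minimal sketch, not a guaranteed route to citations: the `build_faq_jsonld` helper and the sample question and answer are illustrative assumptions, and nothing here confirms that any particular AI model reads this markup.

```python
import json

def build_faq_jsonld(qa_pairs):
    """Return a schema.org FAQPage document as a JSON string.

    qa_pairs: list of (question, answer) tuples, ideally phrased the way
    users actually prompt. The pair used below is a made-up example.
    """
    return json.dumps(
        {
            "@context": "https://schema.org",
            "@type": "FAQPage",
            "mainEntity": [
                {
                    "@type": "Question",
                    "name": question,
                    "acceptedAnswer": {"@type": "Answer", "text": answer},
                }
                for question, answer in qa_pairs
            ],
        },
        indent=2,
    )

# Hypothetical content: swap in the prompts your customers really use.
faq_jsonld = build_faq_jsonld([
    (
        "What's the best project management software for remote teams?",
        "Example answer that states a recommendation and the reasoning behind it.",
    ),
])

# Embed the result in the page head or body as a JSON-LD script tag.
print(f'<script type="application/ld+json">\n{faq_jsonld}\n</script>')
```

Writing the question text to mirror real prompts keeps the markup aligned with the point above: the goal is to cover the prompts people type, not the keywords you already track.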

What small teams actually used in 2025

Small teams (typically 1-5 people, often at startups or agencies managing a handful of clients) faced a real constraint: they couldn't afford $500+/month per tool, and they didn't have time to manage a 10-tool stack. The teams that did well made deliberate choices about where to spend.

The core small-team stack

Content research and creation was usually handled by one or two tools. Surfer SEO at $99/month was a common anchor -- its Content Score gave writers a clear target without needing a dedicated SEO strategist to interpret the data.

For teams that needed more AI writing assistance baked in, tools like Frase or NeuronWriter offered content briefs plus optimization in a single interface at lower price points.

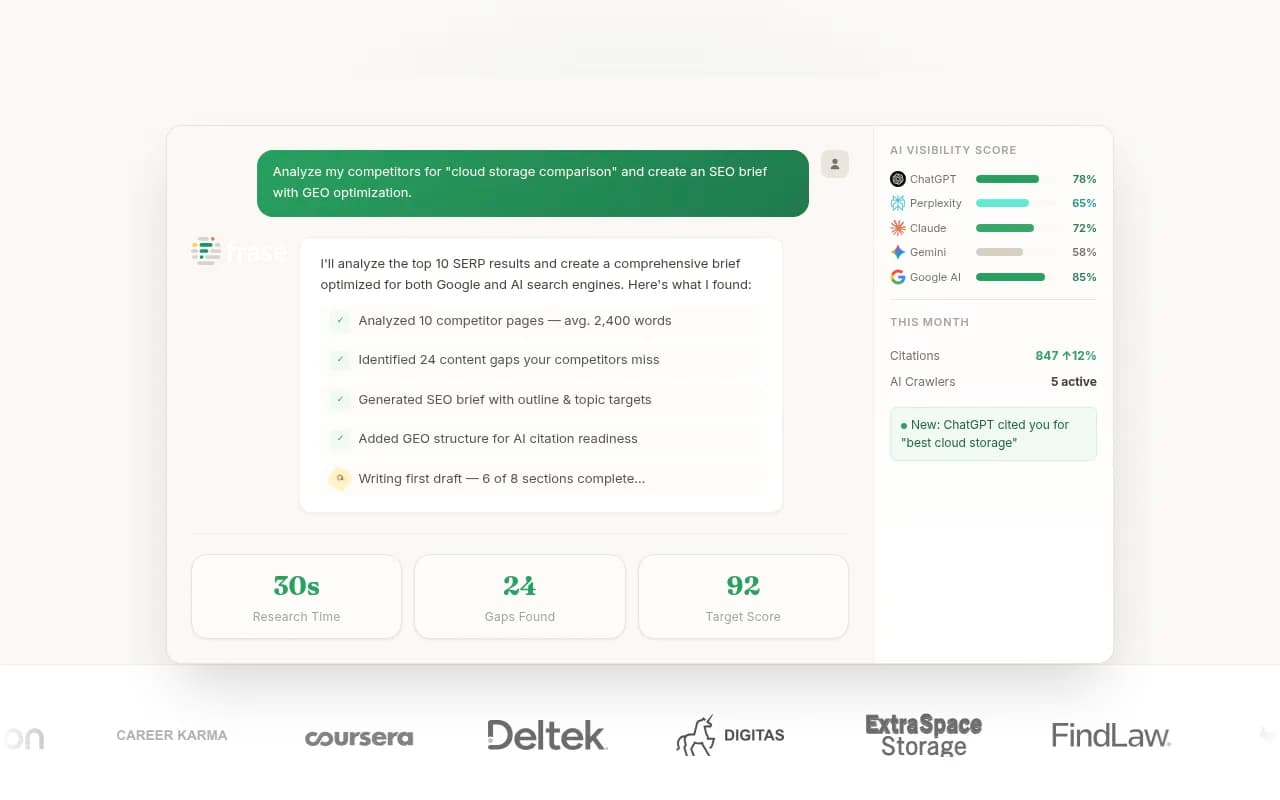

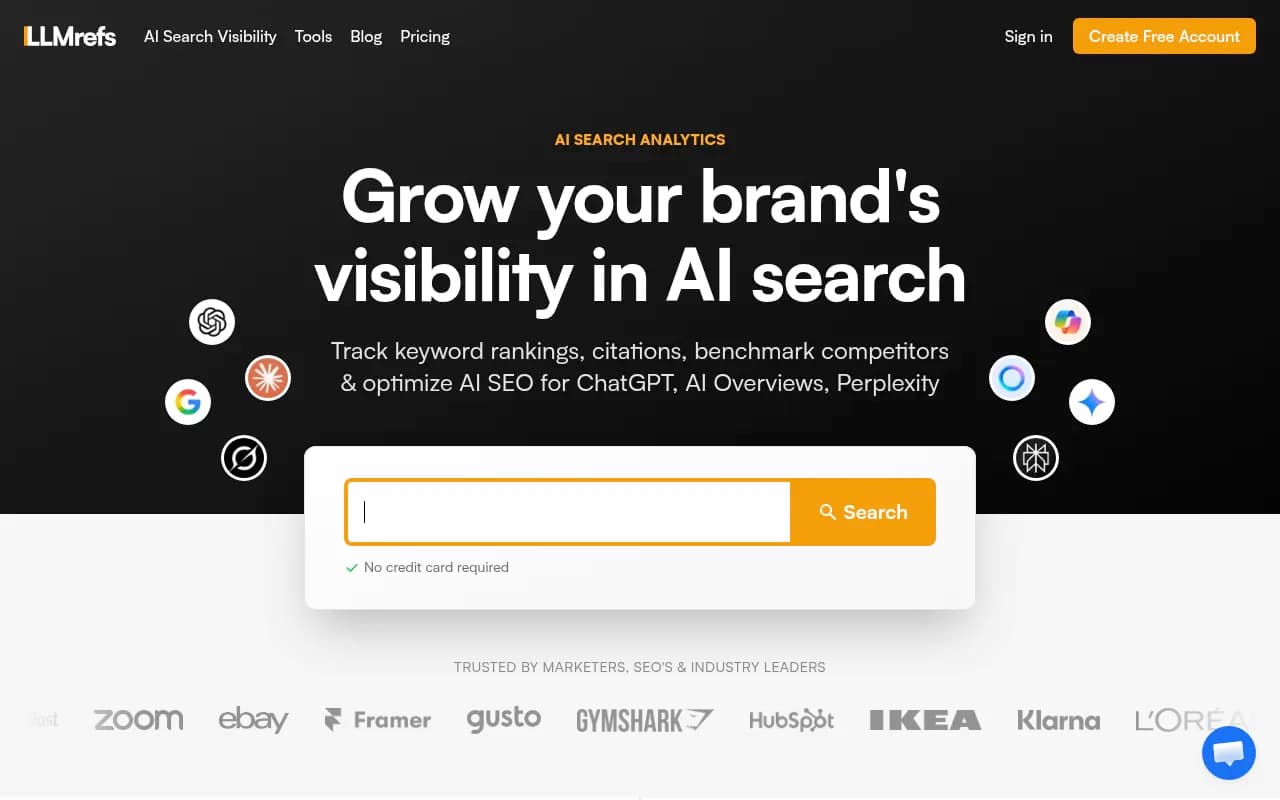

AI visibility tracking was where small teams most often cut corners -- and paid for it. Many were still relying entirely on Google Search Console and a rank tracker in early 2025. By mid-year, the smarter small teams had added a lightweight AI monitoring tool. Options like Otterly.AI, LLMrefs, and Airefs gave basic visibility into whether their brand was appearing in ChatGPT and Perplexity responses without requiring enterprise budgets.

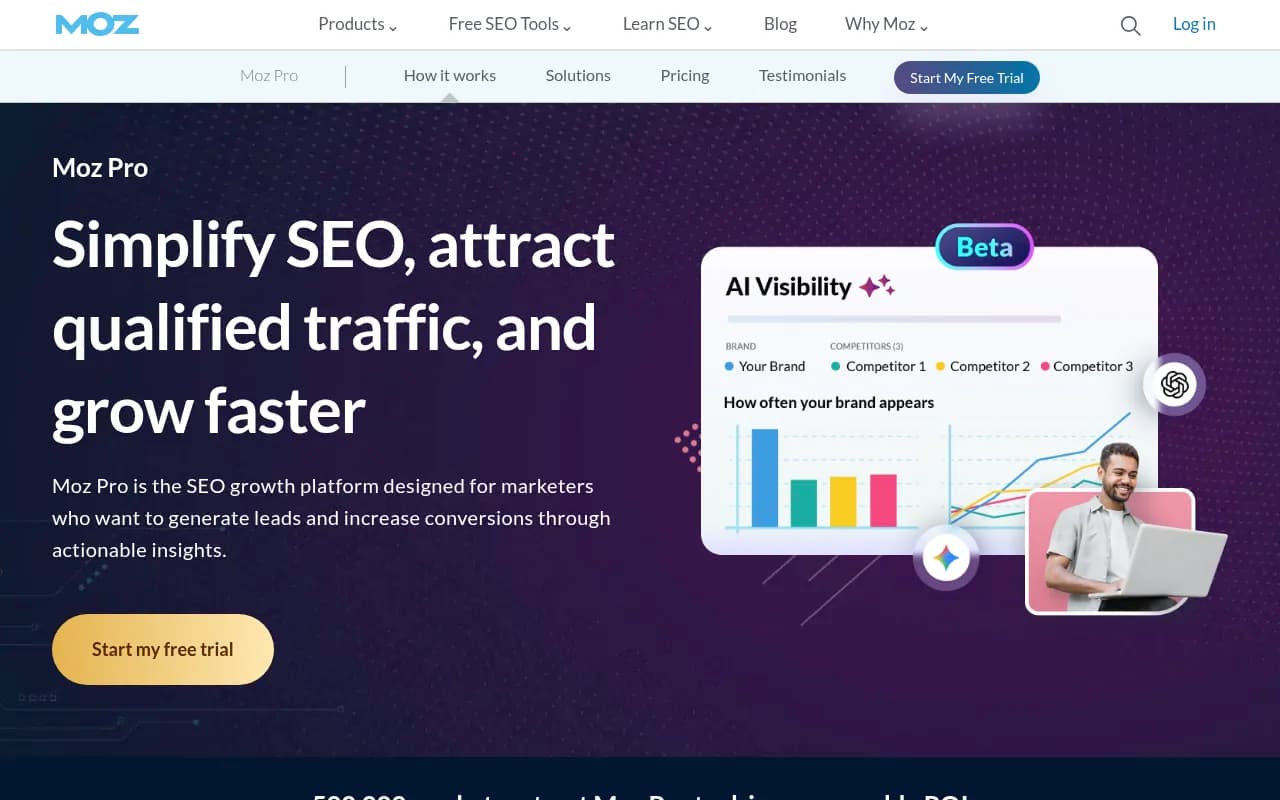

Technical SEO at the small-team level usually meant Google Search Console plus a periodic Screaming Frog crawl. Some teams added Moz Pro for keyword tracking and domain authority monitoring.

What small teams got right

The best small teams treated content creation and AI visibility as one workflow, not two separate things. They'd identify a topic, check what AI models were saying about it, write content specifically designed to answer those prompts, and then monitor whether their brand started appearing in responses.

They also moved faster. A two-person team can test a new content format, publish it, and see results in weeks. Enterprise teams often need months to get the same piece through approvals.

Where small teams struggled

The honest answer: most small teams were flying blind on AI visibility for most of 2025. They knew they needed to "optimize for AI search" but didn't have clear data on which specific prompts their competitors were appearing for, what content was getting cited, or why their brand was invisible in certain categories.

The tools they were using showed them that they weren't visible. They didn't show them why or what to do about it.

What enterprise teams built instead

Enterprise teams (100+ person companies, large agencies, brands with significant marketing budgets) had a different problem: too many tools, not enough integration, and organizational complexity that slowed everything down.

The teams that performed well in 2025 solved this by building stacks with clear ownership. Each tool had a job, a team, and a reporting cadence.

The enterprise stack breakdown

Technical SEO and crawling at enterprise scale meant dedicated platforms. Botify was a common choice for large sites -- it handles JavaScript rendering, log file analysis, and crawl budget optimization in ways that general-purpose tools can't.

For brands with complex site architectures, seoClarity and BrightEdge provided enterprise-grade rank tracking alongside AI visibility features.

AI visibility and GEO (generative engine optimization) was where enterprise teams invested most heavily. The shift from "do we appear in AI search?" to "how do we systematically improve our AI visibility?" required platforms that could do more than monitor. Teams needed to understand which prompts competitors were winning, what content was driving those citations, and how to close the gap.

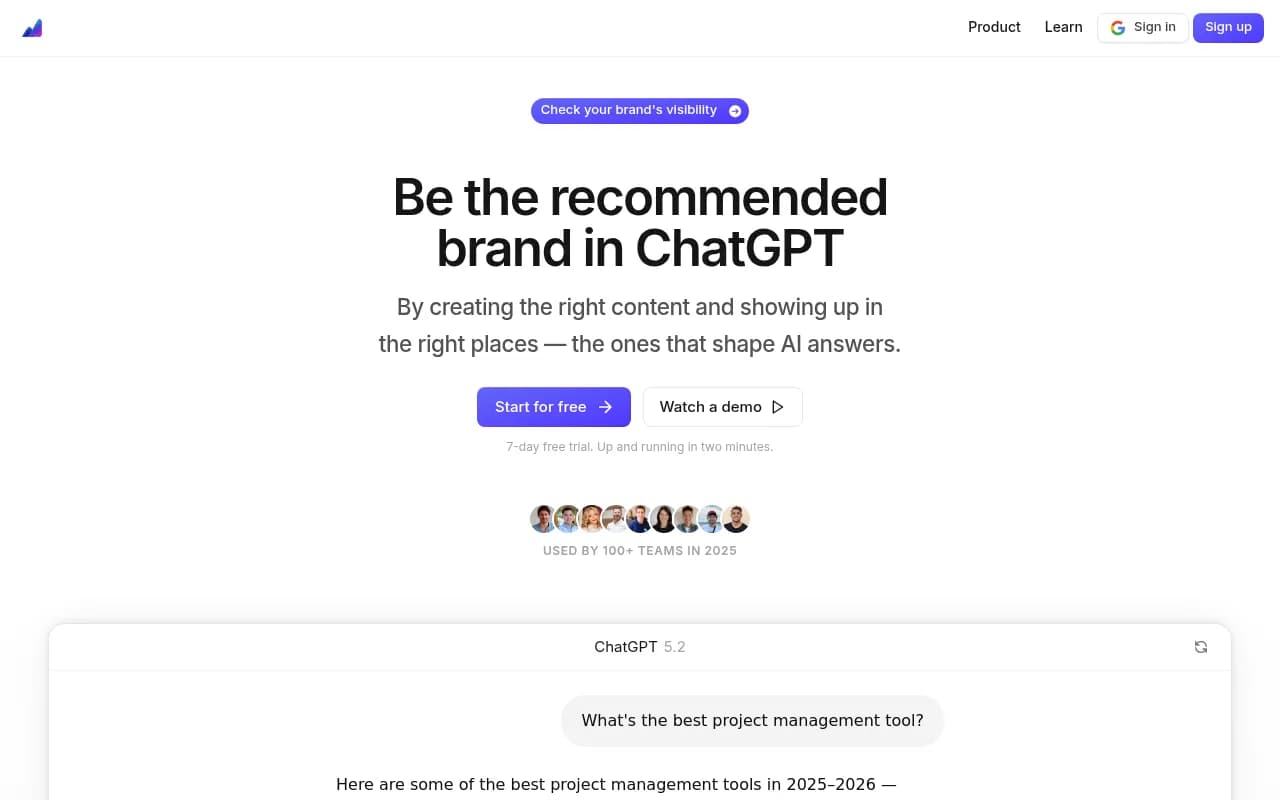

Promptwatch became a go-to for teams that needed this full picture -- not just tracking where they appeared, but identifying the specific content gaps that were keeping them invisible, then generating content engineered to get cited.

Profound and Goodie AI were also used at the enterprise level, particularly for brands that needed custom analytics and dedicated support.

Content at scale was handled differently than on small teams. Enterprise content teams used platforms like MarketMuse for content strategy and topical authority planning, then fed those briefs into AI writing tools like Jasper or Writer for first drafts.

Competitive intelligence got a dedicated layer in most enterprise stacks. Tools like Crayon or Semrush's competitive features tracked what competitors were publishing and where they were gaining AI visibility.

What enterprise teams got right

The best enterprise teams in 2025 treated AI search visibility as a measurable channel with its own KPIs, not an extension of traditional SEO. They tracked citation rates by AI model, monitored which pages were being cited and which weren't, and tied AI visibility improvements to actual traffic and revenue.
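To make "citation rate by AI model" concrete, here is a rough sketch of how that KPI could be computed from sampled responses. The record shape (`model`, `cited_domains`), the domain names, and the function name are all assumptions for illustration; real visibility tools export their own formats.

```python
from collections import defaultdict

def citation_rate_by_model(observations, brand_domain):
    """Share of sampled responses per model that cite brand_domain.

    observations: iterable of dicts with a 'model' name and a
    'cited_domains' set (a hypothetical export format).
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    for obs in observations:
        totals[obs["model"]] += 1
        if brand_domain in obs["cited_domains"]:
            hits[obs["model"]] += 1
    return {model: hits[model] / totals[model] for model in totals}

# Made-up sample: four responses collected across two models.
observations = [
    {"model": "chatgpt", "cited_domains": {"example.com", "reddit.com"}},
    {"model": "chatgpt", "cited_domains": {"competitor.example"}},
    {"model": "perplexity", "cited_domains": {"example.com"}},
    {"model": "perplexity", "cited_domains": {"example.com", "youtube.com"}},
]

rates = citation_rate_by_model(observations, "example.com")
for model, rate in sorted(rates.items()):
    print(f"{model}: {rate:.0%} of sampled responses cite us")
```

Tracking the rate per model rather than one blended score makes it easier to see where a content change actually moved things.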

They also invested in understanding the why behind AI citations. Which Reddit threads were influencing ChatGPT's recommendations? Which YouTube videos were getting referenced? That kind of source analysis -- looking beyond just their own website -- gave them a real edge.
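A simple version of that source analysis is to tally which domains show up across the citations you collect. This is a sketch under assumptions: the URLs are invented placeholders, and `top_cited_sources` is a hypothetical helper, not a feature of any tool named here.

```python
from collections import Counter
from urllib.parse import urlparse

def top_cited_sources(cited_urls, n=5):
    """Count cited URLs by domain, treating www.example.com as example.com."""
    domains = []
    for url in cited_urls:
        host = urlparse(url).netloc.lower()
        if host.startswith("www."):
            host = host[4:]
        if host:  # skip strings that did not parse as absolute URLs
            domains.append(host)
    return Counter(domains).most_common(n)

# Placeholder URLs standing in for citations gathered from AI responses.
cited_urls = [
    "https://www.reddit.com/r/projectmanagement/comments/abc/example_thread/",
    "https://www.youtube.com/watch?v=example",
    "https://reddit.com/r/startups/comments/def/another_thread/",
    "https://example.com/blog/remote-pm-tools",
]

top = top_cited_sources(cited_urls)
for domain, count in top:
    print(f"{domain}: {count}")
```

If third-party domains dominate the tally, that is a hint the work to do sits off your own site.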

Where enterprise teams struggled

Ironically, the biggest enterprise failure mode was over-monitoring and under-acting. Teams had beautiful dashboards showing their AI visibility scores across 10 models, but no clear process for turning that data into content that actually improved those scores.

The other common failure: treating AI SEO as a separate workstream from traditional SEO. The teams that won integrated both -- using traditional keyword data to inform AI content strategy, and AI visibility data to prioritize which traditional SEO gaps to close first.

Side-by-side comparison: small team vs enterprise stacks

| Category | Small team approach | Enterprise approach |
|---|---|---|
| Content optimization | Surfer SEO, Frase, NeuronWriter | MarketMuse, Clearscope, seoClarity |
| AI visibility tracking | Otterly.AI, LLMrefs, Airefs | Promptwatch, Profound, Goodie AI |
| Technical SEO | Google Search Console, Moz Pro | Botify, BrightEdge, Conductor |
| Content creation | Jasper, ChatGPT | Writer, Jasper (at scale), Content at Scale |
| Competitive intel | Semrush (basic) | Semrush + Crayon, dedicated CI platforms |
| Monthly budget | $150-$400 | $2,000-$10,000+ |
| Primary gap | Lack of AI visibility data | Over-monitoring, under-acting |

The tools that crossed both segments

A few tools appeared in both small-team and enterprise stacks in 2025, which suggests they were useful regardless of budget.

Semrush remained the backbone of many stacks regardless of team size. Its AI Toolkit add-on provided some AI Overview tracking, though teams doing serious AI visibility work typically layered a dedicated GEO tool on top.

ChatGPT itself was used by teams at every level -- not just for writing, but for prompt research. Teams would manually query ChatGPT with their target prompts to see what came back, who got cited, and what content structure the model seemed to prefer.
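Once responses are pasted or exported into text, checking "who got cited" can be partly automated. The sketch below assumes you already have the response text in hand; the brand names are fictional and `mentioned_brands` is an illustrative helper that only does whole-word, case-insensitive matching.

```python
import re

def mentioned_brands(response_text, brands):
    """Return brands found in response_text, in order of first appearance.

    Matching is case-insensitive and requires word boundaries, so
    'AcmePM' does not match inside 'AcmePMX'.
    """
    found = []
    for brand in brands:
        pattern = r"(?<!\w)" + re.escape(brand) + r"(?!\w)"
        match = re.search(pattern, response_text, flags=re.IGNORECASE)
        if match:
            found.append((match.start(), brand))
    return [brand for _, brand in sorted(found)]

# Fictional brands and a made-up response, for illustration only.
brands = ["AcmePM", "BetaBoard", "GammaTasks"]
response = (
    "For remote teams, BetaBoard is a common pick. "
    "AcmePM is also mentioned for its reporting."
)

print(mentioned_brands(response, brands))
```

Order of appearance is kept because being named first in an answer is usually worth noting separately from being named at all.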

Topical Map AI was popular with small teams trying to build topical authority quickly -- a key factor in getting cited by AI models.

SE Ranking offered a middle ground -- more capable than entry-level tools, less expensive than enterprise platforms, with decent AI visibility features built in.

The action loop: what actually separated winners from everyone else

Here's the pattern that showed up consistently across the teams that improved their ChatGPT visibility in 2025, regardless of size:

- Find the gaps. Which prompts are competitors appearing for that you're not? What questions are users asking AI models that your content doesn't answer?

- Create content that fills those gaps. Not generic SEO content -- specific, structured, citation-worthy content built around the exact prompts and angles AI models are responding to.

- Track whether it worked. Did your new content get cited? By which models? How did your visibility score change?

Teams that ran this loop consistently -- even with modest budgets -- outperformed teams with bigger budgets that never got past the first step.

The monitoring-only approach was the most common failure mode of 2025. A team would sign up for an AI visibility tool, watch their scores, and feel like they were "doing AI SEO." But without the content creation and optimization steps, the scores didn't move.
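The find-gaps and track-results ends of the loop reduce to simple set comparisons once you have data on which brands appear for which prompts. This is a sketch with invented prompts and fictional brands; the `{prompt: set of brands}` shape is an assumption about how you might store samples.

```python
def find_prompt_gaps(appearances, our_brand):
    """Prompts where at least one competitor appears and our_brand does not.

    appearances: {prompt: set of brands mentioned in sampled answers}.
    """
    return sorted(
        prompt
        for prompt, brands in appearances.items()
        if brands and our_brand not in brands
    )

def newly_won(before, after, our_brand):
    """Prompts that were gaps in `before` and mention our_brand in `after`."""
    return sorted(
        prompt
        for prompt in find_prompt_gaps(before, our_brand)
        if our_brand in after.get(prompt, set())
    )

# Fictional snapshots taken before and after publishing new content.
before = {
    "best project management software for remote teams": {"BetaBoard", "GammaTasks"},
    "project management tools with time tracking": {"AcmePM", "BetaBoard"},
    "free kanban tools": set(),
}
after = {
    "best project management software for remote teams": {"AcmePM", "BetaBoard"},
    "project management tools with time tracking": {"AcmePM", "BetaBoard"},
    "free kanban tools": set(),
}

gaps = find_prompt_gaps(before, "AcmePM")   # what to write about
wins = newly_won(before, after, "AcmePM")   # whether it worked
print("gaps:", gaps)
print("wins:", wins)
```

The middle step, creating the content, is the part no script replaces; the code only tells you where to aim and whether you hit.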

What's changed heading into 2026

A few things are worth flagging as you build or refine your stack this year.

AI crawler logs are now a real signal. Knowing which pages ChatGPT's crawler has visited, how often, and whether it encountered errors gives you a direct line of sight into how AI models are discovering your content. This was a niche capability in 2025; it's becoming table stakes in 2026.
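If you have raw server access logs, a first pass at this needs nothing more than a log parser. The sketch below assumes the common "combined" log format and filters on user-agent tokens OpenAI has published for its crawlers (`GPTBot`, `OAI-SearchBot`, `ChatGPT-User`); check the current documentation before relying on that list. The log lines are fabricated samples.

```python
import re
from collections import Counter

# Combined log format: ip - user [time] "METHOD path proto" status bytes "referer" "agent"
LOG_LINE = re.compile(
    r'\S+ \S+ \S+ \[[^\]]+\] "(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

# Assumption: tokens to look for in the User-Agent header.
AI_CRAWLER_TOKENS = ("GPTBot", "OAI-SearchBot", "ChatGPT-User")

def summarize_ai_crawler_hits(log_lines):
    """Return (hits, errors): per-path request counts and 4xx/5xx counts."""
    hits, errors = Counter(), Counter()
    for line in log_lines:
        m = LOG_LINE.match(line)
        if not m or not any(tok in m["agent"] for tok in AI_CRAWLER_TOKENS):
            continue
        hits[m["path"]] += 1
        if int(m["status"]) >= 400:
            errors[m["path"]] += 1
    return hits, errors

# Fabricated sample lines; IPs use a documentation-reserved range.
sample_log = [
    '203.0.113.5 - - [10/Jan/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0; +https://openai.com/gptbot)"',
    '203.0.113.5 - - [10/Jan/2026:10:00:05 +0000] "GET /old-page HTTP/1.1" 404 312 "-" "Mozilla/5.0 (compatible; GPTBot/1.0; +https://openai.com/gptbot)"',
    '198.51.100.7 - - [10/Jan/2026:10:01:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
    '203.0.113.9 - - [10/Jan/2026:10:02:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; OAI-SearchBot/1.0)"',
]

hits, errors = summarize_ai_crawler_hits(sample_log)
print("crawled:", dict(hits))
print("errors:", dict(errors))
```

Pages that appear in `errors`, or key pages that never appear in `hits`, are the ones to investigate first. User-agent strings can be spoofed, so treat this as a signal rather than proof.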

Reddit and YouTube matter more than most teams realize. AI models don't just cite brand websites -- they cite discussions, reviews, and video content. Teams that monitored and influenced these channels saw meaningfully better AI visibility than teams focused only on their own site.

Prompt volume data is getting better. Early AI visibility tools could tell you whether you appeared for a prompt but not how many people were actually using that prompt. Better volume and difficulty scoring is now available, which means you can prioritize high-value prompts instead of optimizing for queries nobody's asking.
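With volume and difficulty in hand, prioritization can be as simple as a weighted sort. The scoring formula here (volume discounted by difficulty) is an assumption chosen for illustration, not how any specific tool scores prompts, and the numbers are invented.

```python
from dataclasses import dataclass

@dataclass
class PromptStat:
    prompt: str
    monthly_volume: int   # estimated prompt volume (hypothetical figures)
    difficulty: float     # 0 (easy) to 100 (hard), as a tool might report it
    visible: bool         # do we already appear for this prompt?

def prioritize(prompts):
    """Rank prompts we are not visible for by volume discounted by difficulty."""
    def score(p):
        return p.monthly_volume * (1 - p.difficulty / 100)
    return sorted((p for p in prompts if not p.visible), key=score, reverse=True)

# Invented data for four prompts.
stats = [
    PromptStat("best pm software for remote teams", 1000, 80, visible=False),
    PromptStat("pm tools with time tracking", 400, 20, visible=False),
    PromptStat("what is a kanban board", 5000, 10, visible=True),
    PromptStat("pm software for two-person agencies", 50, 0, visible=False),
]

ranked = prioritize(stats)
for p in ranked:
    print(p.prompt)
```

In this sample the mid-volume, low-difficulty prompt outranks the high-volume, high-difficulty one, which is the kind of trade-off volume data lets you reason about.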

The gap between optimized and unoptimized brands is widening. Teams that started building AI visibility in 2024 have 12+ months of citation data, content, and optimization history. Starting from zero in 2026 is harder than it was -- but the upside is also larger, because most brands still haven't figured this out.

Building your stack for 2026

If you're starting fresh or auditing what you have, here's a practical framework:

For small teams on a tight budget: Start with one solid content optimization tool (Surfer SEO or Frase), add Google Search Console for technical basics, and pick one AI visibility tracker that fits your budget. The key is having some visibility into how you appear in AI search -- even basic data beats flying blind. Once you have data, use it to drive content decisions, not just to report on scores.

For growing teams: Add topical authority planning (Topical Map AI or MarketMuse) and upgrade your AI visibility tool to something that shows you competitor gaps, not just your own scores. Start tracking which specific pages are getting cited and why.

For enterprise teams: The priority is closing the loop between visibility data and content production. If your team can see gaps but can't act on them quickly, that's the bottleneck to fix. Look for platforms that combine gap analysis with content generation and traffic attribution -- so you can connect AI visibility improvements to actual business outcomes.

Whatever your budget, the principle is the same: monitoring is the starting point, not the destination. The teams that win in AI search are the ones that use visibility data to create better content, then track whether that content actually gets cited.

That loop -- find gaps, create content, track results -- is what separates the teams that grew their AI visibility in 2025 from the ones that just watched their dashboards.