Summary

- Start with visibility audits: Use tools like Promptwatch or Conductor to identify where you're invisible in AI search, then prioritize prompts by volume and difficulty

- Close content gaps systematically: Build a repeatable process for generating AI-optimized content that addresses missing topics, not just keyword variations

- Measure what matters: Track citations, traffic attribution, and page-level visibility -- not vanity metrics like "brand mentions"

- Automate the repetitive work: Use workflow automation (Zapier, Make, n8n) to connect your GEO stack and eliminate manual data transfers

- Scale with process, not just people: Document your workflow, build templates, and create feedback loops so your team can handle 10x the volume without 10x the headcount

Why most GEO workflows break at scale

I've watched dozens of teams start GEO programs in 2025 and early 2026. The pattern is always the same: initial excitement, a few quick wins, then a slow collapse under the weight of manual work.

The problem isn't the tools. It's that most teams treat GEO like a side project instead of a repeatable system. They run one-off audits, write a few articles, check a dashboard occasionally, and wonder why results plateau.

GEO at scale requires a workflow -- a documented, repeatable process that connects discovery, creation, measurement, and iteration. Without that structure, you're just throwing content at AI engines and hoping something sticks.

The four phases of a scalable GEO workflow

A working GEO workflow has four phases that loop continuously:

- Audit: Identify where you're invisible and why

- Create: Generate content that fills those gaps

- Measure: Track what's working and what's not

- Iterate: Refine your approach based on results

Let's break down each phase with specific tools and processes.

Phase 1: Audit your AI visibility

The first step is understanding where you're invisible. Don't just check a dashboard once; build a systematic audit process you can repeat monthly or quarterly.

What to audit

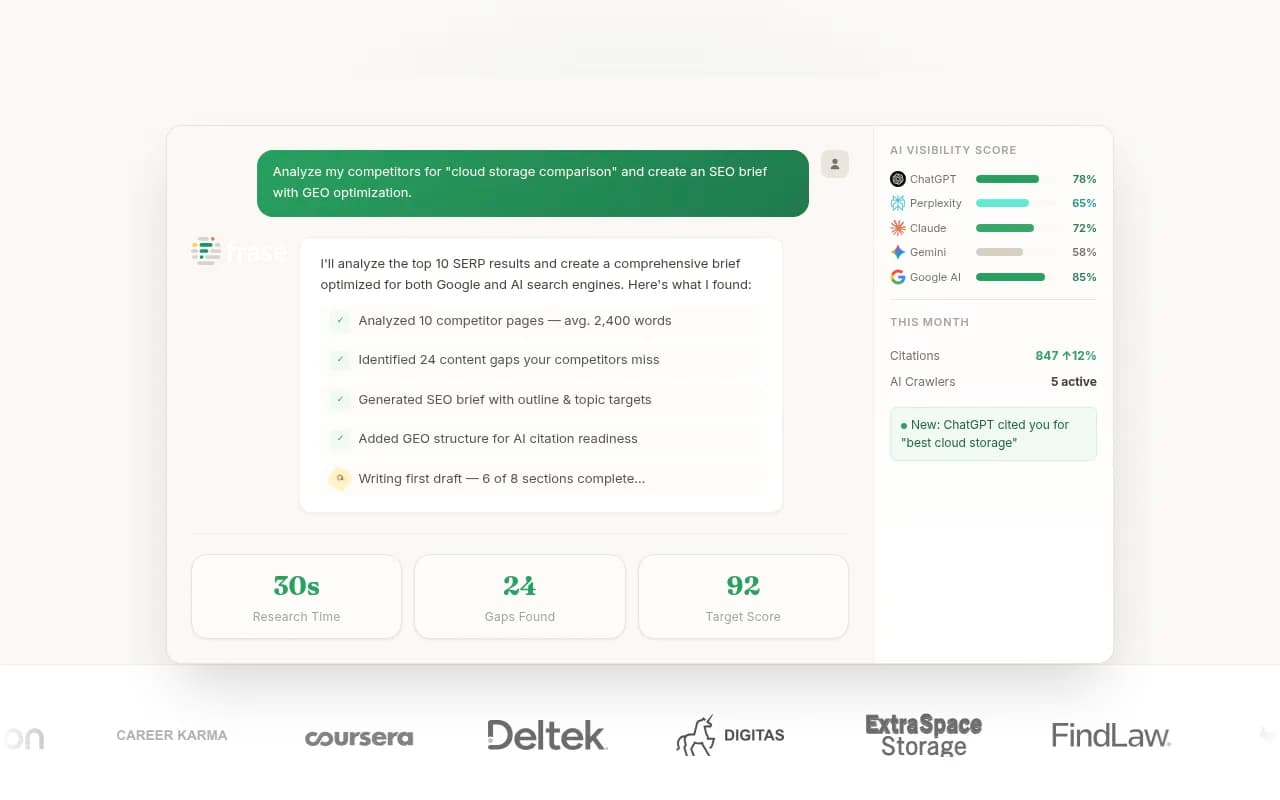

Prompt coverage: Which prompts are your competitors visible for that you're not? This is the single most valuable audit you can run. Tools like Promptwatch show you exactly which prompts competitors rank for and you don't -- the "answer gap" that represents missing content on your site.
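At its core, an answer-gap report is a set comparison. The sketch below assumes you have already exported sampled AI responses as (prompt, brands mentioned) pairs from your tracking tool. The function name, the data shape, and the sample brands are hypothetical; this is not Promptwatch's API or method.

```python
from collections import defaultdict


def answer_gaps(results, your_brand):
    """Prompts where competitors appear in sampled answers but your brand never does.

    `results` holds (prompt, brands_mentioned) pairs, one per sampled AI response.
    Brand names are compared case-insensitively and returned lowercased.
    """
    brands_by_prompt = defaultdict(set)
    for prompt, brands in results:
        brands_by_prompt[prompt].update(b.lower() for b in brands)
    you = your_brand.lower()
    # Keep prompts where someone is cited and you never are.
    return {
        prompt: sorted(brands)
        for prompt, brands in brands_by_prompt.items()
        if brands and you not in brands
    }


# Hypothetical sample data; "Acme" stands in for your brand.
results = [
    ("best crm for startups", ["HubSpot", "Pipedrive"]),
    ("best crm for startups", ["Acme", "HubSpot"]),
    ("crm with built-in invoicing", ["Zoho"]),
]
print(answer_gaps(results, "Acme"))
```

Because "Acme" shows up in at least one sampled answer for the first prompt, only the invoicing prompt is reported as a gap.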

Citation sources: When AI engines do cite you, which pages are they pulling from? Are they citing your best content or random blog posts from 2019? Page-level tracking shows you what's working.

AI crawler behavior: Are the crawlers behind ChatGPT, Claude, and Perplexity (for example OpenAI's GPTBot, Anthropic's ClaudeBot, and PerplexityBot) actually visiting your site? How often? Which pages? Crawler logs reveal indexing issues before they become visibility problems.
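A minimal sketch of the crawler check, assuming you've already parsed access logs into (timestamp, user agent, path) tuples. The user-agent tokens are an illustrative, non-exhaustive list (check each vendor's documentation), the 30-day window is an arbitrary choice, and the sample log entries are made up.

```python
from datetime import datetime, timedelta

# Illustrative, non-exhaustive AI crawler user-agent tokens (an assumption).
AI_CRAWLER_TOKENS = ("GPTBot", "ClaudeBot", "PerplexityBot")


def stale_pages(hits, site_pages, now, max_age_days=30):
    """Return pages that no AI crawler has fetched within `max_age_days`.

    `hits` is an iterable of (timestamp, user_agent, path) tuples.
    """
    last_seen = {}
    for ts, ua, path in hits:
        if any(token in ua for token in AI_CRAWLER_TOKENS):
            if path not in last_seen or ts > last_seen[path]:
                last_seen[path] = ts
    cutoff = now - timedelta(days=max_age_days)
    # Pages never seen by a crawler default to datetime.min, so they count as stale.
    return sorted(p for p in site_pages if last_seen.get(p, datetime.min) < cutoff)


# Made-up log entries for illustration.
now = datetime(2026, 3, 1)
hits = [
    (datetime(2026, 2, 20), "Mozilla/5.0 (compatible; GPTBot/1.0)", "/pricing"),
    (datetime(2025, 12, 1), "Mozilla/5.0 (compatible; ClaudeBot/1.0)", "/blog/geo-guide"),
    (datetime(2026, 2, 25), "Mozilla/5.0 (Windows NT 10.0) Chrome/120", "/features"),
]
print(stale_pages(hits, ["/pricing", "/blog/geo-guide", "/features"], now))
```

Here `/features` was only visited by a regular browser, so it is flagged alongside the page whose last crawl is three months old.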

Competitor heatmaps: Compare your visibility vs competitors across different AI models. You might dominate ChatGPT but be invisible in Perplexity -- that's actionable intelligence.
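One simple way to fill such a heatmap is to compute, for each model, the share of sampled responses that mention each brand. This sketch assumes responses arrive as (model, response text) pairs and uses naive case-insensitive substring matching, so a brand name embedded in another word would also count. The brands and responses are hypothetical.

```python
from collections import defaultdict


def visibility_matrix(responses, brands):
    """Return {brand: {model: share of that model's responses mentioning the brand}}.

    `responses` is a list of (model, response_text) pairs.
    """
    totals = defaultdict(int)
    mentions = defaultdict(int)
    for model, text in responses:
        totals[model] += 1
        lowered = text.lower()
        for brand in brands:
            if brand.lower() in lowered:  # naive substring match
                mentions[(brand, model)] += 1
    return {
        brand: {model: mentions[(brand, model)] / n for model, n in sorted(totals.items())}
        for brand in brands
    }


# Hypothetical sampled responses.
responses = [
    ("chatgpt", "Acme and Globex are both solid choices."),
    ("chatgpt", "Most teams pick Globex."),
    ("perplexity", "Globex is popular; Initech is cheaper."),
]
for brand, row in visibility_matrix(responses, ["Acme", "Globex"]).items():
    print(brand, row)
```

In this toy data, Acme appears in half of ChatGPT's answers and none of Perplexity's: the "visible in one model, invisible in another" pattern described above.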

Tools for the audit phase

| Tool | Best for | Key feature | Price |
|---|---|---|---|
| Promptwatch | Answer gap analysis | Shows exact prompts competitors rank for | $99-579/mo |
| Conductor | Enterprise visibility tracking | Industry benchmarks and trend analysis | Custom |
| Semrush | Traditional SEO + AI visibility | Combines keyword and AI search data | $139+/mo |
| Otterly.AI | Budget-friendly monitoring | Basic visibility tracking | $49+/mo |

The audit phase should produce a prioritized list of content gaps -- specific topics, angles, and questions your site doesn't answer but AI engines want to cite. That list becomes your content roadmap.
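Turning gaps into a ranked roadmap needs some scoring rule. The sketch below uses one hypothetical formula: expected conversions (volume times conversion rate), discounted by a 0-to-1 difficulty score. The field names, the difficulty scale, and the formula itself are assumptions to adapt, not a standard metric.

```python
from dataclasses import dataclass


@dataclass
class PromptGap:
    prompt: str
    monthly_volume: int     # estimated prompt volume from your GEO tool
    difficulty: float       # assumed scale: 0 (easy) to 1 (hard)
    conversion_rate: float  # expected conversion rate for this intent


def priority(gap: PromptGap) -> float:
    """Hypothetical score: expected conversions, discounted by difficulty."""
    return gap.monthly_volume * gap.conversion_rate * (1 - gap.difficulty)


def roadmap(gaps, top_n=10):
    """Return the top_n gaps, highest score first."""
    return sorted(gaps, key=priority, reverse=True)[:top_n]


# Hypothetical gaps.
gaps = [
    PromptGap("best project management software", 1000, 0.8, 0.001),
    PromptGap("pm tool for a 10-person remote team leaving Asana", 100, 0.3, 0.05),
]
for g in roadmap(gaps):
    print(round(priority(g), 2), g.prompt)
```

The narrower prompt wins despite a tenth of the volume, because its conversion rate is much higher and it is easier to win.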

Phase 2: Create content that closes the gaps

Once you know what's missing, you need a repeatable process for creating content that AI engines will actually cite. This is where most workflows break -- teams either produce generic SEO filler or spend weeks on each article.

The solution: structured content generation that balances quality and speed.

The content creation loop

1. Start with the prompt, not the keyword: Traditional SEO optimizes for "best project management software." GEO optimizes for "what project management tool should a 10-person remote team use if they're switching from Asana?" The specificity matters.

2. Use AI to draft, humans to refine: Tools like Jasper, Copy.ai, or Promptwatch's built-in AI writer can generate first drafts based on citation data and competitor analysis. Your job is to add expertise, examples, and specificity that AI can't fake.

3. Structure for citation: AI engines prefer content with clear headings, concise answers, and supporting evidence. Use:

- Direct answers in the first 100 words

- Comparison tables for multi-option questions

- Numbered lists for process-oriented queries

- Inline citations to authoritative sources

4. Optimize for multiple models: ChatGPT, Claude, and Perplexity have different citation preferences. ChatGPT favors authoritative sources and structured data. Perplexity loves recent content and diverse perspectives. Claude prefers nuanced, well-reasoned arguments. Write for all three.
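The structural checklist in step 3 can be turned into a quick pre-publish lint. This is a rough heuristic sketch for Markdown drafts: it checks that the first non-heading paragraph is 100 words or fewer (a proxy for "direct answer up front"), and that a table, a numbered list, and inline links exist. It does not model how any AI engine actually selects citations, and the sample draft is made up.

```python
import re


def citation_readiness(markdown: str) -> dict:
    """Heuristic structure checks for a Markdown draft."""
    paragraphs = [p.strip() for p in markdown.split("\n\n") if p.strip()]
    body = [p for p in paragraphs if not p.startswith("#")]  # skip headings
    first = body[0] if body else ""
    return {
        "short_opening_paragraph": 0 < len(first.split()) <= 100,
        "has_table": bool(re.search(r"^\|.*\|\s*$", markdown, re.MULTILINE)),
        "has_numbered_list": bool(re.search(r"^\d+\.\s", markdown, re.MULTILINE)),
        "has_inline_links": bool(re.search(r"\[[^\]]+\]\(https?://", markdown)),
    }


draft = """# Which PM tool fits a 10-person remote team?

For most small remote teams leaving Asana, a lighter tool is usually enough.

| Tool | Seats |
|---|---|
| A | 10 |

1. Export your Asana projects.
2. Import them.

Source: [vendor docs](https://example.com/docs)
"""
print(citation_readiness(draft))
```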

Tools for content creation

| Tool | Best for | Workflow integration |
|---|---|---|
| Promptwatch AI Writer | GEO-specific content generation | Built into visibility platform |
| Jasper | Long-form SEO content | Standalone, requires manual input |
| Frase | Content briefs and optimization | Integrates with Google Docs |
| Clearscope | On-page optimization | Chrome extension for real-time feedback |
| MarketMuse | Content strategy and gap analysis | API for workflow automation |

The goal is to produce 5-10 high-quality articles per month that directly address your top content gaps. That's enough to move the needle without overwhelming your team.

Phase 3: Measure results and attribution

You can't optimize what you don't measure. The measurement phase tracks three things: visibility, citations, and traffic.

Visibility metrics

Visibility score: How often does your brand appear in AI responses for your target prompts? Track this weekly or monthly. A 10-point increase in visibility score might not sound impressive, but it often correlates with a 30-50% increase in AI-driven traffic.

Citation frequency: How many times per month are AI engines citing your content? Which pages are being cited most often? Page-level tracking reveals which content formats and topics work best.
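Page-level citation counts mostly come down to normalizing URLs so that variants of the same page are counted together. This sketch assumes you have a list of URLs cited in AI responses; it drops query strings, fragments, and trailing slashes, and counts subdomains of your domain as yours. The domain and URLs are placeholders.

```python
from collections import Counter
from urllib.parse import urlsplit


def citations_by_page(cited_urls, your_domain):
    """Count citations per page path on your domain (including subdomains)."""
    counts = Counter()
    for url in cited_urls:
        parts = urlsplit(url)
        host = parts.hostname or ""
        if host == your_domain or host.endswith("." + your_domain):
            # Ignore query string and fragment; treat "/x/" and "/x" as one page.
            path = parts.path.rstrip("/") or "/"
            counts[path] += 1
    return counts


cited = [
    "https://www.example.com/guides/geo/?utm_source=chatgpt.com",
    "https://example.com/guides/geo",
    "https://example.com/pricing#plans",
    "https://competitor.io/blog/geo",
]
print(citations_by_page(cited, "example.com").most_common())
```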

Model-specific performance: Are you visible in ChatGPT but invisible in Claude? Track each AI model separately -- they have different citation preferences and your content strategy should reflect that.

Traffic attribution

Visibility is great, but traffic pays the bills. You need to connect AI visibility to actual website visits and conversions.

Code snippet tracking: Add a tracking snippet to your site that identifies AI-referred traffic, typically by inspecting the referrer or a UTM parameter on the landing URL. Promptwatch and other platforms provide code snippets that tag visitors from ChatGPT, Perplexity, and other AI engines.
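Vendor snippets differ, but the classification logic can be sketched server-side. This is a hypothetical equivalent, not Promptwatch's code. It assumes an illustrative hostname list and a fallback on `utm_source`, since some assistants (ChatGPT, for example) may append one to outbound links. Browsers and apps often strip referrers, so any referrer-based count is a lower bound.

```python
from urllib.parse import parse_qs, urlsplit

# Illustrative hostname-to-source mapping (an assumption); extend as needed.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "www.perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}


def ai_source(landing_url, referrer=""):
    """Label a visit with its AI source, or return None for other traffic."""
    host = urlsplit(referrer).hostname or ""
    if host in AI_REFERRER_HOSTS:
        return AI_REFERRER_HOSTS[host]
    # Fallback: look for a utm_source on the landing URL.
    utm = parse_qs(urlsplit(landing_url).query).get("utm_source", [""])[0]
    return AI_REFERRER_HOSTS.get(utm.lower())


print(ai_source("https://example.com/pricing", "https://chatgpt.com/"))
print(ai_source("https://example.com/guide?utm_source=chatgpt.com"))
print(ai_source("https://example.com/", "https://www.google.com/"))
```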

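Google Search Console integration: GSC's Performance report counts clicks and impressions from Google's own AI features, such as AI Overviews, under the "Web" search type, but it doesn't break them out separately and doesn't cover ChatGPT or Perplexity. It's incomplete, but better than nothing.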

Server log analysis: The most accurate method -- parse your server logs to identify AI crawler visits and subsequent user traffic. This requires technical setup but gives you ground truth data.
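A minimal log-parsing sketch, assuming Apache/Nginx "combined" format logs. It separates AI crawler fetches (matched by user-agent token) from human visits referred by an AI assistant (matched by referrer host), both counted per path. The token and host lists are illustrative assumptions, and the sample lines are made up. User-agent strings can be spoofed, so stricter setups also verify source IPs against ranges that vendors publish, where they do.

```python
import re
from collections import Counter
from urllib.parse import urlsplit

# Combined log format: ip ident user [time] "request" status bytes "referer" "agent"
COMBINED = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "(?P<referrer>[^"]*)" "(?P<agent>[^"]*)"'
)
CRAWLER_TOKENS = ("GPTBot", "ClaudeBot", "PerplexityBot")  # illustrative
ASSISTANT_HOSTS = {"chatgpt.com", "perplexity.ai", "www.perplexity.ai", "claude.ai"}  # illustrative


def split_ai_traffic(lines):
    """Return (crawler hits per path, AI-referred visits per path)."""
    crawler_hits, referred_visits = Counter(), Counter()
    for line in lines:
        m = COMBINED.match(line)
        if not m:
            continue  # skip malformed or differently formatted lines
        path = m["path"].split("?", 1)[0]
        if any(t in m["agent"] for t in CRAWLER_TOKENS):
            crawler_hits[path] += 1
        elif (urlsplit(m["referrer"]).hostname or "") in ASSISTANT_HOSTS:
            referred_visits[path] += 1
    return crawler_hits, referred_visits


sample_lines = [
    '198.51.100.7 - - [01/Mar/2026:10:00:00 +0000] "GET /guides/geo HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.5 - - [01/Mar/2026:10:05:00 +0000] "GET /guides/geo?ref=x HTTP/1.1" 200 5120 "https://chatgpt.com/" "Mozilla/5.0 (Macintosh) Safari/605.1"',
    "not a log line",
]
print(split_ai_traffic(sample_lines))
```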

Tools for measurement

HockeyStack and Usermaven both offer AI traffic attribution, though you'll need to configure custom tracking for AI-specific sources. The investment is worth it -- knowing which AI channels drive revenue changes how you allocate resources.

Phase 4: Iterate and scale

The final phase is where most teams fail: turning insights into action and scaling the workflow without adding headcount.

Build feedback loops

Monthly reviews: Compare this month's visibility scores, citation counts, and traffic to last month. Which content performed? Which flopped? What changed in competitor visibility?

Content audits: Every quarter, review your top-performing pages. What do they have in common? Can you replicate that structure for other topics?

Prompt trend analysis: Are new prompts emerging in your space? Are old prompts declining in volume? Adjust your content roadmap accordingly.
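Trend analysis can start as a period-over-period comparison of prompt volumes. In this sketch the inputs are hypothetical prompt-to-volume maps from two periods, and the 25% threshold for "rising" or "declining" is an arbitrary assumption to tune.

```python
def classify_trends(previous, current, threshold=0.25):
    """Label each prompt as new, gone, rising, declining, or flat.

    `previous` and `current` map prompt -> estimated volume for two periods.
    """
    trends = {}
    for prompt in previous.keys() | current.keys():
        before, after = previous.get(prompt, 0), current.get(prompt, 0)
        if before == 0:
            trends[prompt] = "new" if after > 0 else "flat"
        elif after == 0:
            trends[prompt] = "gone"
        else:
            change = (after - before) / before  # relative change
            if change >= threshold:
                trends[prompt] = "rising"
            elif change <= -threshold:
                trends[prompt] = "declining"
            else:
                trends[prompt] = "flat"
    return trends


# Hypothetical volumes for two months.
prev = {"geo tools": 400, "ai seo checklist": 300, "llm visibility tracker": 100}
curr = {"geo tools": 420, "ai seo checklist": 150, "llm visibility tracker": 180, "geo for b2b saas": 90}
print(sorted(classify_trends(prev, curr).items()))
```

"New" and "rising" prompts are roadmap candidates; "declining" ones are candidates for deprioritizing.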

Scale with automation

Manual workflows don't scale. Automate the repetitive parts so your team can focus on strategy and content quality.

Automate data collection: Use Zapier, Make, or n8n to pull visibility data from your GEO platform into a central dashboard. No more logging into five tools to check metrics.

Automate content briefs: Connect your GEO platform to your content management system. When a new content gap is identified, automatically create a brief with target prompts, competitor analysis, and suggested structure.
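The brief itself can be a small templating step that an automation run executes whenever a new gap arrives (for example in a code step, where your automation tool supports Python). The `ContentGap` fields and the brief layout are hypothetical, and the suggested structure simply mirrors the citation checklist in Phase 2.

```python
from dataclasses import dataclass, field


@dataclass
class ContentGap:
    topic: str
    target_prompts: list[str]
    competitors_cited: list[str] = field(default_factory=list)


def build_brief(gap: ContentGap) -> str:
    """Render a Markdown brief that a later step could post to a CMS or task tracker."""
    lines = [f"# Brief: {gap.topic}", "", "## Target prompts"]
    lines += [f"- {p}" for p in gap.target_prompts]
    lines += ["", "## Competitors currently cited"]
    lines += [f"- {c}" for c in gap.competitors_cited] or ["- none recorded"]
    lines += [
        "", "## Suggested structure",
        "- Direct answer in the first 100 words",
        "- Comparison table if the prompt weighs several options",
        "- Numbered steps for process questions",
        "- Inline citations to authoritative sources",
    ]
    return "\n".join(lines)


gap = ContentGap(
    topic="Project management tools for small remote teams",
    target_prompts=["what project management tool should a 10-person remote team use if they're switching from Asana?"],
)
print(build_brief(gap))
```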

Automate reporting: Build a weekly or monthly report that pulls data from your GEO platform, Google Analytics, and CRM. Stakeholders get updates without you spending hours in spreadsheets.
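The core of such a report is a join on page path across exports. This sketch assumes each source has already been reduced to a page-to-metrics dict; the metric names (`citations`, `ai_sessions`, `deals`) and the sample numbers are hypothetical placeholders for whatever your GEO platform, analytics, and CRM export.

```python
def weekly_report(geo_rows, analytics_rows, crm_rows):
    """Join per-page metrics from three sources into one plain-text table.

    Each argument maps page path -> metrics dict; missing values default to 0.
    """
    pages = sorted(geo_rows.keys() | analytics_rows.keys() | crm_rows.keys())
    header = f"{'page':<20}{'citations':>10}{'ai_visits':>10}{'deals':>7}"
    lines = [header, "-" * len(header)]
    for page in pages:
        citations = geo_rows.get(page, {}).get("citations", 0)
        visits = analytics_rows.get(page, {}).get("ai_sessions", 0)
        deals = crm_rows.get(page, {}).get("deals", 0)
        lines.append(f"{page:<20}{citations:>10}{visits:>10}{deals:>7}")
    return "\n".join(lines)


# Hypothetical exports.
geo = {"/guides/geo": {"citations": 14}, "/pricing": {"citations": 3}}
ga = {"/guides/geo": {"ai_sessions": 220}}
crm = {"/pricing": {"deals": 2}}
print(weekly_report(geo, ga, crm))
```

Putting citations, AI-referred visits, and deals side by side per page is what lets stakeholders see which cited pages actually drive revenue.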

Document everything

The difference between a workflow and chaos is documentation. Write down:

- How to run a visibility audit (step-by-step)

- Content creation checklist (what makes a GEO-optimized article)

- Measurement dashboard setup (which metrics to track and why)

- Monthly review process (what questions to ask, what decisions to make)

When someone new joins your team, they should be able to follow your documentation and execute the workflow without constant supervision. That's how you scale.

The GEO tech stack for 2026

Here's a realistic tech stack for a mid-sized team (5-20 people) running GEO at scale:

Core platform: Promptwatch or Conductor for visibility tracking, content gap analysis, and AI crawler logs. This is your source of truth.

Content creation: Jasper or Frase for drafting, Clearscope for optimization. Use the AI writer in your GEO platform if it's good enough -- fewer tools means less context-switching.

Workflow automation: Zapier for simple integrations, Make or n8n for complex workflows. Connect your GEO platform to your CMS, analytics, and reporting tools.

Analytics: Google Analytics 4 for traffic, HockeyStack or Usermaven for attribution, your GEO platform for visibility and citations.

Project management: Notion, Asana, or Monday.com to track content production, assign tasks, and manage deadlines.

Total cost: $500-1,500/month depending on team size and platform tiers. That's less than one full-time hire and gives you the infrastructure to scale to 50+ articles per month.

Common workflow mistakes (and how to avoid them)

Mistake 1: Optimizing for the wrong prompts. Don't chase high-volume prompts if they're not relevant to your business. A prompt with 1,000 monthly searches that converts at 0.1% (about 1 conversion) is worse than a prompt with 100 searches that converts at 5% (about 5 conversions).

Mistake 2: Treating GEO like SEO. SEO optimizes for keywords. GEO optimizes for questions and contexts. The content structure is different: more direct answers, more comparisons, more nuance.

Mistake 3: Ignoring AI crawler logs. If AI crawlers aren't visiting your site, your visibility won't improve no matter how much content you publish. Check crawler logs monthly and fix indexing issues immediately.

Mistake 4: No attribution tracking. Visibility metrics are interesting, but traffic and conversions are what matter. Set up attribution tracking from day one so you can connect GEO efforts to revenue.

Mistake 5: Manual workflows. If you're copying data between tools or manually creating content briefs, you're wasting time. Automate the repetitive parts and focus human effort on strategy and quality.

What a mature GEO workflow looks like

After 6-12 months, a mature GEO workflow should:

- Run audits automatically every month, surfacing new content gaps without manual analysis

- Generate 10-20 content briefs per month based on prompt trends and competitor gaps

- Produce 5-15 high-quality articles per month that directly address priority prompts

- Track visibility, citations, and traffic in a single dashboard updated daily

- Attribute AI-referred traffic to specific prompts and content pieces

- Iterate based on data -- double down on what works, kill what doesn't

At that point, you're not "doing GEO" -- you have a GEO machine that runs with minimal oversight. Your team focuses on edge cases, strategic decisions, and content quality, not manual data entry.

Start small, scale deliberately

You don't need a perfect workflow on day one. Start with:

- One visibility audit to identify your top 10 content gaps

- One content brief template that captures what AI engines want

- One measurement dashboard that tracks visibility and traffic

- One monthly review to assess what's working

Run that loop for three months. Document what works. Automate the repetitive parts. Add tools as you identify bottlenecks, not because they're trendy.

By month six, you'll have a workflow that scales. By month twelve, you'll be producing more content, with better results, than teams twice your size.

That's the difference between doing GEO and building a GEO workflow.