Key takeaways

- Traditional SEO tools alone no longer determine who gets found: AI search engines like ChatGPT, Perplexity, and Google AI Overviews weigh signals that only partly overlap with classic search rankings

- Top brands in 2025 ran a three-layer stack: SEO foundation tools, AI content platforms, and dedicated GEO trackers

- A Surfer SEO study found that only 24% of brands overlapped between ChatGPT API and UI results, so AI rank trackers that rely on the API can give you an incomplete picture of what users actually see

- The brands gaining the most AI visibility weren't just monitoring their rankings -- they were actively closing content gaps and publishing material that AI models want to cite

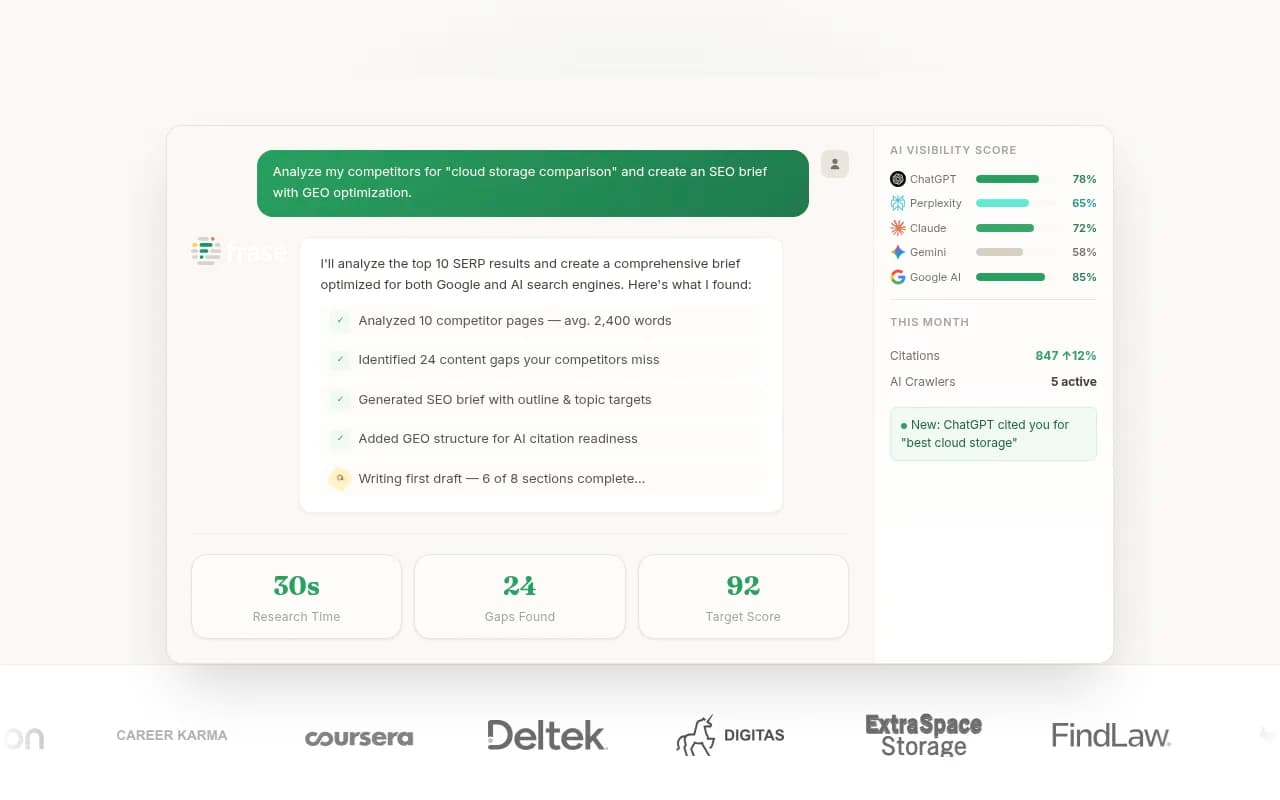

- Standalone trackers often fall short because they stop at monitoring; tools like Promptwatch combine monitoring, gap analysis, and content generation in one loop

Why 2025 forced brands to rethink their entire stack

For most of the past decade, SEO was a relatively stable game. You picked a rank tracker, ran your keyword research, optimized your pages, and watched the numbers move. The tools changed at the margins, but the underlying logic stayed the same.

2025 broke that logic.

Google AI Overviews started absorbing over 35% of clicks on many queries before users ever reached organic results. ChatGPT became a product research tool for millions of consumers. Perplexity started pulling citations from sources that had never ranked in traditional search. And Gemini, Claude, and Grok each developed their own citation patterns that didn't map neatly onto Google's ranking signals.

Lily Ray, one of the more credible voices in SEO, wrote about this shift extensively. Her observation was that 2025 wasn't just a year of algorithm updates but a structural change in how information gets surfaced. The brands that adapted built new stacks. The ones that didn't are watching their traffic erode.

What did the adapted stack look like? Three distinct layers, each doing a different job.

Layer 1: The SEO foundation (still essential, just not sufficient)

The instinct to abandon traditional SEO tools entirely is wrong. They still matter -- just for different reasons than before.

Research consistently shows that roughly 52% of sources cited in Google AI Overviews already rank in the top 10 organic results. So your traditional SEO foundation directly feeds your AI visibility. If your pages aren't technically sound, well-structured, and authoritative on their topics, AI models are less likely to pull from them.

The tools that held up best in 2025 were the ones with strong content optimization and topical authority features.

Surfer SEO remained a go-to for content teams that needed data-driven optimization. Its content editor scores pages against top-ranking competitors and flags semantic gaps -- which turns out to be directly relevant to AI citation patterns too, since AI models favor comprehensive, well-structured content.

Clearscope took a similar approach but with a cleaner interface that content writers actually enjoyed using. It grades content against NLP-derived term lists and is particularly good at catching the conceptual gaps that cause AI models to skip your page in favor of a competitor's.

Frase sat at the intersection of research and optimization -- useful for teams that needed to understand what questions a topic should answer before writing anything.

For teams that needed a full SEO suite rather than a point solution, Semrush and Moz Pro remained the defaults, though both were playing catch-up on the AI visibility side.

The honest limitation of this layer: these tools tell you how to rank in Google. They don't tell you why ChatGPT recommends your competitor instead of you, or which questions Perplexity is answering with someone else's content. That's where layer two comes in.

Layer 2: AI content platforms (writing for citation, not just ranking)

The content strategy that worked in 2025 was different from what worked in 2022. Writing for AI citation means something specific: comprehensive answers to specific questions, structured so an AI model can extract a clean, quotable response.

This isn't about keyword density or word count. It's about whether your page actually answers the question better than anything else available -- and whether it does so in a way that's easy for a language model to parse and reference.

Several content platforms evolved to address this directly.

MarketMuse was one of the more sophisticated options for teams that needed to understand topical authority at scale. It maps content gaps across an entire site and prioritizes what to write based on competitive difficulty and relevance -- the kind of strategic view that helps you decide where to invest content resources.

Content at Scale leaned into volume, generating long-form content that was designed to pass AI detection while covering topics comprehensively. For brands that needed to build out large content libraries quickly, it was a practical option.

Jasper remained popular with marketing teams that needed brand-consistent content at speed. Its templates and workflows made it easier to maintain quality across large teams.

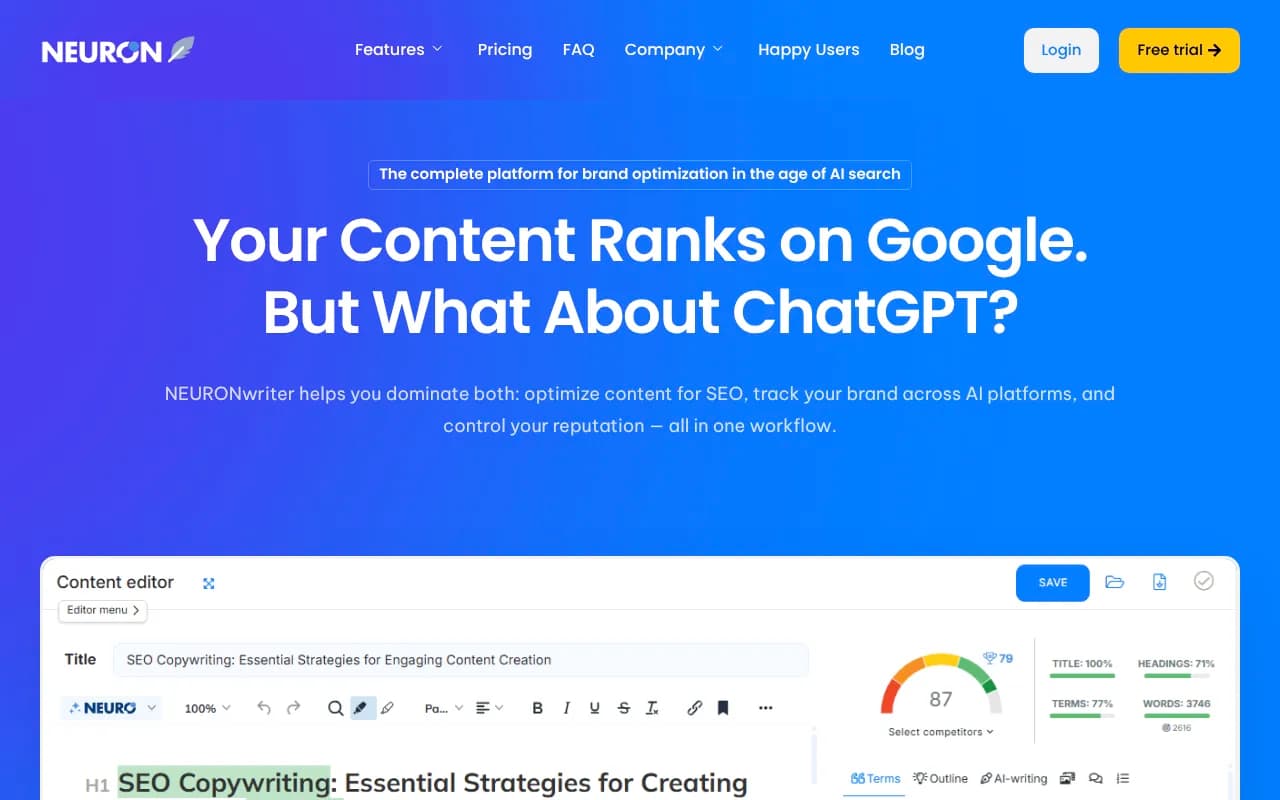

NeuronWriter was a quieter player that earned a strong reputation for semantic optimization -- particularly useful for European markets where AI search adoption was accelerating.

The pattern across all these tools: the best-performing brands weren't using them to produce generic SEO filler. They were using them to answer specific questions that AI models were being asked -- questions they identified through their GEO tracking layer.

Which brings us to the third layer, and honestly the most important one.

Layer 3: GEO trackers (the part most brands got wrong)

This is where 2025 got complicated.

The market for GEO tracking tools exploded. Dozens of platforms launched promising to show you your "AI rank" across ChatGPT, Perplexity, Claude, and others. Brands rushed to sign up. Many were disappointed.

The core problem: most of these tools were monitoring dashboards. They showed you data. They didn't help you do anything with it.

There's also a deeper accuracy issue. A Surfer SEO study found that only 24% of brands overlapped between ChatGPT API results and ChatGPT UI results. For sources and citations specifically, the overlap was just 4%. So if your GEO tracker queries the API as a stand-in for what users see in the ChatGPT interface, it may be showing you a very different picture from what those users actually get.
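How big that gap looks depends on how "overlap" is measured, and the study's exact definition isn't given here. As a rough illustration, assuming overlap means the Jaccard index (brands named in both answers divided by all distinct brands named) for an API response and a UI response to the same prompt, a minimal Python sketch could look like this. The brand names and lists are hypothetical.

```python
def overlap(api_results: list[str], ui_results: list[str]) -> float:
    """Jaccard overlap |A & B| / |A | B| between two lists of brand names.

    The Jaccard definition and the lowercase/strip normalization are
    illustrative assumptions; the Surfer study's exact metric may differ.
    """
    a = {item.strip().lower() for item in api_results}
    b = {item.strip().lower() for item in ui_results}
    if not a and not b:
        return 1.0  # two empty answers trivially agree
    return len(a & b) / len(a | b)


# Hypothetical brand mentions for one prompt, captured via the API and via the UI.
api_brands = ["Acme", "Globex", "Initech", "Umbrella"]
ui_brands = ["Acme", "Hooli", "Stark", "Wayne", "Globex"]

print(f"{overlap(api_brands, ui_brands):.0%}")  # prints 29%
```

Here two of seven distinct brands appear in both answers, so the score is about 29%. Averaging this per-prompt score across a full prompt set would give one rough API-versus-UI agreement number for your category.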

This doesn't mean GEO tracking is useless -- it means you need to be careful about which tools you trust and what you do with the data.

Here's how the main players stacked up:

| Tool | Monitoring | Content gap analysis | Content generation | Crawler logs | ChatGPT Shopping | Price |
|---|---|---|---|---|---|---|
| Promptwatch | Yes | Yes | Yes | Yes | Yes | From $99/mo |
| Profound | Yes | Limited | No | No | Yes | Custom |
| Otterly.AI | Yes | No | No | No | No | From ~$29/mo |
| Peec AI | Yes | No | No | No | No | From $49/mo |
| AthenaHQ | Yes | Limited | No | No | No | Custom |
| SE Ranking | Yes | Limited | No | No | No | From $129/mo |
| LLMrefs | Yes | No | No | No | No | Free–$79/mo |
| Scrunch AI | Yes | No | No | No | No | Custom |

The brands that made real progress in 2025 weren't the ones with the most monitoring data. They were the ones that closed the loop: find the gaps, create content to fill them, track whether it worked.

Promptwatch was the platform that most consistently did all three. Its Answer Gap Analysis lists the specific prompts where competitors are visible and you aren't, as concrete questions and topics rather than an abstract score. Its built-in content generation then drafts articles grounded in a dataset of over 880 million analyzed citations. And page-level tracking shows which new pages are actually getting cited, and by which AI models.
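Promptwatch's own implementation isn't described here, but the core idea of an answer gap can be sketched as a set comparison. Assuming you already have, for each tracked prompt, the set of brands an AI model cited (the `citations` mapping and the `answer_gaps` helper below are hypothetical), the gaps are the prompts where a competitor appears and you don't:

```python
def answer_gaps(
    citations: dict[str, set[str]], our_brand: str, competitors: set[str]
) -> dict[str, set[str]]:
    """Map each prompt where a competitor is cited but our brand is not
    to the set of competitors that were cited for it."""
    gaps: dict[str, set[str]] = {}
    for prompt, cited in citations.items():
        if our_brand in cited:
            continue  # we're already visible for this prompt
        rivals = cited & competitors
        if rivals:
            gaps[prompt] = rivals
    return gaps


# Hypothetical sample data: prompt -> brands cited in one AI model's answer.
citations = {
    "best crm for small teams": {"Globex", "Initech"},
    "crm with a free tier": {"Acme", "Globex"},
    "how to migrate crm data": {"Hooli"},
}

gaps = answer_gaps(citations, our_brand="Acme", competitors={"Globex", "Initech"})
for prompt, rivals in sorted(gaps.items()):
    print(f"{prompt}: {', '.join(sorted(rivals))}")
# prints: best crm for small teams: Globex, Initech
```

Only one prompt comes back: Acme is already cited for the free-tier question, and the migration question cites no tracked competitor. Each remaining gap is a concrete question to write content for, which is the step most monitoring-only tools leave to you.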

For brands that wanted a pure monitoring solution without the content layer, Otterly.AI was the most accessible entry point.

SE Ranking's AI visibility features were solid for teams already using it as their primary SEO platform -- the unified dashboard reduced tool sprawl even if the GEO features weren't as deep.

Profound had strong enterprise features and was one of the few tools tracking ChatGPT Shopping, but the pricing put it out of reach for most mid-market brands.

What the winning stack actually looked like

The brands that gained the most AI visibility in 2025 weren't running a dozen tools. They were running tight stacks with clear responsibilities at each layer.

A typical mid-market brand stack looked something like this:

- SEO foundation: Surfer SEO or Clearscope for content optimization, Google Search Console for technical health

- Content production: Jasper or MarketMuse for content strategy and drafting

- GEO tracking and optimization: Promptwatch for monitoring, gap analysis, and content generation grounded in real citation data

An agency managing multiple clients often added AgencyAnalytics for consolidated reporting and Topical Map AI for building out topical authority frameworks before diving into content production.

Enterprise teams tended to layer in BrightEdge or seoClarity for their existing SEO infrastructure, then add a dedicated GEO layer on top.

The mistakes brands made (and how to avoid them)

A few patterns showed up repeatedly among brands that struggled.

Treating GEO as a separate strategy from SEO. The brands that won treated them as the same problem. If your content is genuinely comprehensive and authoritative, it tends to perform in both traditional search and AI citations. The tools are different, but the underlying content quality requirement is the same.

Optimizing for API results instead of real user experience. Given the 4% citation overlap between API and UI results noted in the Surfer study, brands that built their entire GEO strategy around API-based tracking were often optimizing for a signal that didn't reflect what actual users were seeing.

Monitoring without acting. This was the most common mistake. Brands would set up a GEO tracker, watch their visibility scores, and feel informed. But knowing you're invisible in ChatGPT for "best [product category]" doesn't help you unless you know specifically what content to create and then actually create it.

Ignoring Reddit and YouTube. AI models don't just cite brand websites. They cite Reddit threads, YouTube videos, and forum discussions heavily. Brands that only optimized their own properties missed a significant portion of the citation graph. Tools that surface these signals -- including Promptwatch's Reddit and YouTube insights -- gave teams a more complete picture of where to invest.

Where to start if you're building this stack now

If you're starting from scratch in 2026, the priority order matters.

Build your GEO tracking layer first, before your SEO foundation. You need to understand where you're invisible before you can decide what content to create. Run a prompt audit: what questions are people asking AI models in your category? Which competitors are getting cited, and why?

Then use that data to inform your content strategy. The content you create should be answering specific questions that AI models are being asked -- not generic SEO articles that happen to include keywords.

Finally, make sure your technical SEO foundation is solid enough that AI crawlers can actually read and index your content. Tools like Promptwatch's AI Crawler Logs show you exactly which pages AI crawlers are visiting, how often, and what errors they're hitting -- which is more actionable than most technical SEO audits.
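You can get a rough version of this signal from your own server logs. The sketch below assumes the Apache/Nginx "combined" log format and matches a handful of AI crawler user-agent tokens (GPTBot, OAI-SearchBot, ChatGPT-User, ClaudeBot, PerplexityBot). The token list is illustrative rather than complete, and because user agents can be spoofed, a serious audit should also check requests against vendor IP ranges where those are published. The function counts requests per crawler and page, and treats 4xx/5xx responses as crawl errors; the `crawler_report` name and the sample log lines are made up for illustration.

```python
import re
from collections import Counter
from typing import Iterable

# User-agent substrings for a few AI crawlers. Illustrative, not exhaustive.
AI_CRAWLERS = ("GPTBot", "OAI-SearchBot", "ChatGPT-User", "ClaudeBot", "PerplexityBot")

# Combined log format: ip ident user [time] "METHOD path PROTO" status bytes "referer" "agent"
LOG_LINE = re.compile(
    r'^\S+ \S+ \S+ \[[^\]]+\] "\S+ (?P<path>\S+)[^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def crawler_report(lines: Iterable[str]) -> tuple[Counter, Counter]:
    """Count AI-crawler hits per (crawler, path) and 4xx/5xx errors per (crawler, path, status)."""
    hits: Counter = Counter()
    errors: Counter = Counter()
    for line in lines:
        m = LOG_LINE.match(line)
        if m is None:
            continue  # skip lines that aren't in combined log format
        crawler = next((c for c in AI_CRAWLERS if c in m["agent"]), None)
        if crawler is None:
            continue  # not one of the tracked AI crawlers
        path, status = m["path"], int(m["status"])
        hits[(crawler, path)] += 1
        if status >= 400:
            errors[(crawler, path, status)] += 1
    return hits, errors


# Made-up sample lines: one GPTBot hit, one PerplexityBot 404, one regular browser.
sample = [
    '203.0.113.5 - - [10/Mar/2026:12:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (compatible; GPTBot/1.1)"',
    '203.0.113.9 - - [10/Mar/2026:12:01:00 +0000] "GET /old-guide HTTP/1.1" 404 153 "-" '
    '"Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
    '198.51.100.7 - - [10/Mar/2026:12:02:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

hits, errors = crawler_report(sample)
print(hits.most_common())
print(errors.most_common())
```

Because the function accepts any iterable of lines, you can pass an open file directly, for example inside `with open("access.log") as f: hits, errors = crawler_report(f)`. Pages that AI crawlers hit often but that return errors are the first ones to fix.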

The brands that figured this out in 2025 are now compounding their advantage. The gap between visible and invisible brands in AI search is widening faster than it did in traditional SEO, partly because the content that gets cited tends to get cited repeatedly, and partly because AI models are slow to update their knowledge of new content.

The stack isn't complicated. The discipline to actually close the loop -- find gaps, create content, track results -- is what separates the brands that are winning from the ones still watching their dashboards.