Key takeaways

- Most brands publish content without first checking whether ChatGPT, Perplexity, or other AI models mention them at all -- this audit fixes that.

- AI models cite brands that are clearly associated with specific problems, consistently mentioned in credible sources, and easy to understand without jargon.

- The 10 questions below cover your current visibility baseline, content gaps, source credibility, and whether your content is actually structured for AI citation.

- Running this audit before every content sprint saves you from publishing into a void -- and helps you prioritize work that moves the needle in AI search.

Most marketers treat content publishing like a conveyor belt. Brief goes in, article comes out, post to blog, repeat. In 2025 that was already a questionable strategy. In 2026, with AI search handling a growing share of discovery and recommendation, it's close to self-defeating.

Here's the uncomfortable reality: ChatGPT, Perplexity, Claude, and the rest don't rank your content the way Google does. They don't crawl your pages on a schedule and slot you into position 4 for a keyword. They synthesize answers from patterns in their training data and live web access -- and if your brand isn't clearly, consistently, and credibly associated with the problems you solve, you simply won't come up.

The good news is that this is auditable. You can systematically check where you stand, find the gaps, and fix them before you waste another content budget on articles that don't move the needle.

These are the 10 questions to answer before you publish anything else.

Question 1: Does ChatGPT actually mention your brand right now?

This sounds obvious, but most brands have never checked. Open ChatGPT (or Perplexity, or Claude) and ask the 10 most common questions your customers type into search. Note whether your brand appears, where it appears, and how it's described.

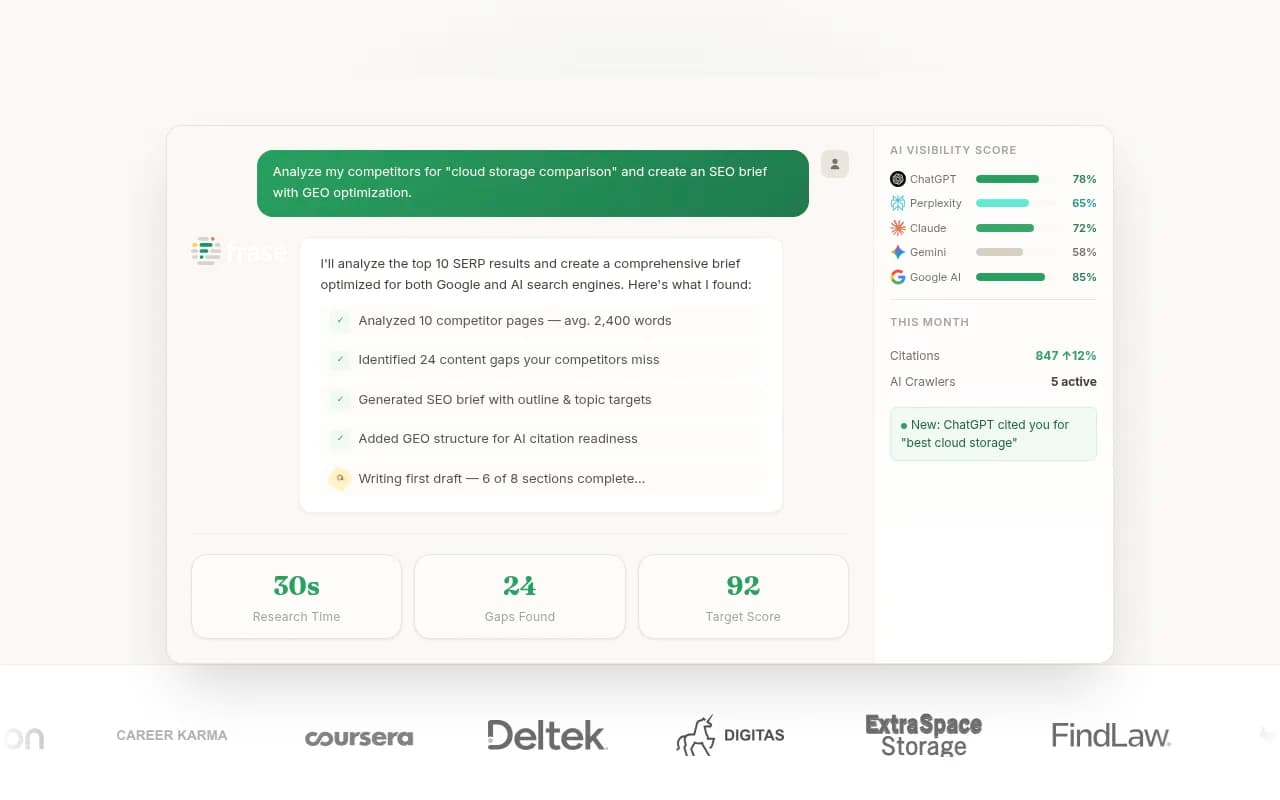

Do this across multiple AI models -- a mention in ChatGPT doesn't guarantee a mention in Perplexity. Each model has different training data, different web access patterns, and different citation tendencies.
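If you run these checks by hand, it helps to log every answer the same way. Below is a minimal Python sketch, assuming you paste each model's response in as plain text. It records whether your brand is mentioned and where it ranks among the brands you track, ordered by first appearance in the answer. The brand names and the `check_mention` helper are hypothetical. Plain string matching will also miss paraphrases or misspellings of your name.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class MentionResult:
    model: str
    prompt: str
    mentioned: bool
    rank: Optional[int]  # 1 = first tracked brand named in the answer


def first_offset(text: str, name: str) -> Optional[int]:
    """Offset of the first case-insensitive, whole-word match of name, or None."""
    match = re.search(rf"\b{re.escape(name)}\b", text, flags=re.IGNORECASE)
    return match.start() if match else None


def check_mention(model: str, prompt: str, answer: str,
                  brand: str, competitors: list[str]) -> MentionResult:
    """Record whether `brand` appears in one AI answer and where it ranks
    among the tracked brands, ordered by first appearance in the text."""
    offsets = {}
    for name in [brand, *competitors]:
        offset = first_offset(answer, name)
        if offset is not None:
            offsets[name] = offset
    if brand not in offsets:
        return MentionResult(model, prompt, False, None)
    ordered = sorted(offsets, key=offsets.get)
    return MentionResult(model, prompt, True, ordered.index(brand) + 1)


# Hypothetical brands and answer text, for illustration only.
answer = ("For remote teams, Globex Tasks and Initech Boards are popular picks; "
          "Acme PM is a newer option.")
result = check_mention("chatgpt", "best project management tool for remote teams",
                       answer, "Acme PM", ["Globex Tasks", "Initech Boards"])
print(result.mentioned, result.rank)  # True 3
```

Logging one `MentionResult` per model and prompt gives you the "whether, where, and how" baseline in a form you can compare week to week. The "how it's described" part still needs a human read.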

Promptwatch can automate this across 10 models simultaneously, tracking mentions, sentiment, and position over time rather than requiring you to do it manually every week.

If you're not appearing at all, that's your baseline. Everything else in this audit is about closing the gap between where you are and where you need to be.

Question 2: What does ChatGPT say about you when it does mention you?

Getting mentioned isn't enough. The description matters enormously.

If ChatGPT describes you as "a software company" when you want to be known as "the leading tool for X use case," that's a positioning problem. AI models tend to reflect the consensus of what's written about you across the web. If your own website, press coverage, and third-party reviews all use vague language, the AI will too.

Ask ChatGPT: "What is [your brand]?" and "What is [your brand] best known for?" Read the answers critically. Are they accurate? Are they specific? Do they match how you'd describe yourself to a prospect?

If the description is fuzzy or wrong, the fix is usually content -- clearer positioning on your own site, more specific case studies, and third-party coverage that uses the language you want associated with your brand.

Question 3: Which prompts are your competitors winning that you're not?

This is the gap analysis question, and it's where most brands find the most actionable insights.

Your competitors are almost certainly appearing in AI responses where you aren't. The question is: for which prompts, and why?

Manually testing this is tedious but possible. Ask ChatGPT questions like "What's the best tool for [use case]?" and "Which companies offer [specific service]?" across every relevant category in your market. Note who comes up and who doesn't.

Tools like Promptwatch have an Answer Gap Analysis feature that surfaces exactly this -- the specific prompts where competitors are visible and you're not, along with the content your site is missing that would help you compete for those prompts.

The output of this question should be a prioritized list of prompts to target. Not every gap is worth closing -- focus on the ones with real volume and commercial intent.
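After you've collected answers for a set of prompts, the gap analysis is essentially a set comparison. This sketch assumes you've recorded which brands each answer mentions. It also assumes your own estimates of prompt volume and commercial intent, which are rough guesses rather than measured figures. All prompts, brands, and numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PromptObservation:
    prompt: str
    brands_mentioned: set[str]  # brands named in the answer(s) you collected
    est_monthly_volume: int     # your own rough estimate
    commercial_intent: bool     # e.g. "best tool for X" rather than "what is X"


def answer_gaps(observations: list[PromptObservation], brand: str,
                competitors: list[str]) -> list[tuple[str, list[str]]]:
    """Prompts where at least one competitor is mentioned and `brand` is not,
    commercial-intent prompts first, then by estimated volume (highest first)."""
    rivals = set(competitors)
    gaps = [obs for obs in observations
            if obs.brands_mentioned & rivals and brand not in obs.brands_mentioned]
    gaps.sort(key=lambda o: (o.commercial_intent, o.est_monthly_volume), reverse=True)
    return [(o.prompt, sorted(o.brands_mentioned & rivals)) for o in gaps]


observations = [
    PromptObservation("what is exit-intent marketing", {"Globex"}, 900, False),
    PromptObservation("best exit-intent popup tool", {"Globex", "Initech"}, 400, True),
    PromptObservation("exit-intent popup for shopify", {"Acme", "Globex"}, 300, True),
]
for prompt, rivals in answer_gaps(observations, "Acme", ["Globex", "Initech"]):
    print(prompt, rivals)
# best exit-intent popup tool ['Globex', 'Initech']
# what is exit-intent marketing ['Globex']
```

The sort puts commercial intent ahead of raw volume, which matches the advice above. Swap the key if volume matters more for your market.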

Question 4: Is your content written for humans asking questions, or for search bots processing keywords?

There's a meaningful difference between content optimized for keyword density and content that actually answers questions well. AI models tend to favor the latter.

Read your last five published articles out loud. Do they answer a specific question clearly and directly? Or do they dance around the topic, bury the answer in paragraph six, and pad the word count with definitions nobody asked for?

ChatGPT and similar models are built to synthesize good answers, so they tend to cite sources that contain good answers. If your content is structured as "keyword + 1,500 words of loosely related information," it's unlikely to get cited, even if it ranks on Google.

The fix: rewrite your content to lead with the answer. Use real questions as subheadings. Keep explanations tight. Plain English beats jargon every time.

Question 5: Are credible third-party sources talking about you?

AI models don't just read your website. They draw on a much wider set of sources: review sites, industry publications, Reddit threads, YouTube transcripts, news coverage. If the only place your brand is discussed is your own blog, that's a credibility problem.

Ask yourself: if someone searched for your brand on Reddit right now, what would they find? Are there genuine discussions about your product? Are you mentioned in industry roundups and comparison articles on sites your customers actually read?

This matters because AI models weight consensus. A brand mentioned positively across multiple independent sources is more likely to be cited than one that only appears on its own domain.

The practical implication: PR, community engagement, and third-party review generation aren't just brand-building activities anymore. They directly influence AI visibility.

Question 6: Does your content cover the full question, or just part of it?

AI search systems often perform what's known as "query fan-out": they break a single prompt into multiple sub-questions. If someone asks "what's the best project management tool for remote teams," the AI might internally process that as: what are the best project management tools generally, which ones have strong remote collaboration features, what do users say about remote work specifically, and what's the price range.

If your content only answers one of those sub-questions, you're less likely to be cited for the full prompt.

Before publishing, map out the sub-questions your target prompt implies. Make sure your content addresses all of them -- not exhaustively, but clearly. A single well-structured article that covers the full question beats three separate articles that each cover a fragment.
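A rough way to sanity-check a draft against its fan-out is to list the sub-questions and attach a few signal terms to each. Then flag any sub-question whose terms never appear in the draft. This is a crude keyword proxy, not a measure of answer quality. The sub-questions come from the remote-teams example above, and the signal terms and draft text are made up for illustration.

```python
def uncovered_subquestions(draft: str, subquestions: dict[str, list[str]]) -> list[str]:
    """Return sub-questions whose signal terms never appear in the draft.
    A keyword match does not prove the sub-question is answered well."""
    text = draft.lower()
    return [question for question, terms in subquestions.items()
            if not any(term.lower() in text for term in terms)]


# Hypothetical fan-out for "best project management tool for remote teams".
fan_out = {
    "Which project management tools are best overall?": ["best project management"],
    "Which have strong remote collaboration features?": ["remote", "async"],
    "What do users say about remote work specifically?": ["review", "users say"],
    "What's the price range?": ["pricing", "per user", "price"],
}
draft = "The best project management tools for remote teams... Pricing starts at $8 per user."
print(uncovered_subquestions(draft, fan_out))
# ['What do users say about remote work specifically?']
```

A flagged sub-question is a prompt to go back and write that section. An unflagged one still needs a human read.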

Question 7: Is your brand clearly associated with a specific category or use case?

Generalist positioning is an AI visibility killer.

AI models cite brands when they have a clear, consistent association with a specific problem or solution. "We help businesses grow" tells an AI model nothing useful. "We help e-commerce brands reduce cart abandonment through exit-intent popups" gives it something to work with.

Look at your homepage, your about page, and your most-linked content. What category do they signal? What problem do they most clearly solve? If the answer is "it depends" or "we do a lot of things," that's a problem.

This doesn't mean you need to narrow your actual product. It means your content strategy needs to establish clear topical authority in specific areas: publish in enough depth on a focused set of topics that AI models can reliably associate you with them.

Question 8: Are AI crawlers actually reading your pages?

Here's a question most brands never think to ask: are the AI crawlers even getting to your content?

ChatGPT's search crawler (OAI-SearchBot), Perplexity's crawler (PerplexityBot), and others visit websites to inform their responses. But they can run into the same issues as Google's crawler: being blocked by robots.txt, slow load times, JavaScript rendering problems, or pages that simply aren't being indexed.
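You can test robots.txt rules against specific AI user agents with Python's standard-library `urllib.robotparser`. The user-agent list below is an example only. OpenAI, Perplexity, and Anthropic document their crawler names, and those lists change over time. The robots.txt content is also made up.

```python
from urllib.robotparser import RobotFileParser

# Example AI crawler user-agent tokens; confirm current names in each vendor's docs.
AI_USER_AGENTS = ("GPTBot", "OAI-SearchBot", "ChatGPT-User", "PerplexityBot", "ClaudeBot")


def ai_crawler_access(robots_txt: str, url: str,
                      agents: tuple[str, ...] = AI_USER_AGENTS) -> dict[str, bool]:
    """Report whether each user agent may fetch `url` under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


# Hypothetical robots.txt that blocks GPTBot everywhere and everyone from /admin/.
sample_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""
print(ai_crawler_access(sample_robots, "https://example.com/blog/ai-audit"))
# {'GPTBot': False, 'OAI-SearchBot': True, 'ChatGPT-User': True, 'PerplexityBot': True, 'ClaudeBot': True}
```

To check your live file, fetch it and pass the text in, or use `RobotFileParser.set_url()` and `read()` directly. One caveat: Python's parser applies the first matching rule in a group rather than the longest match described in RFC 9309. Files that mix `Allow` and `Disallow` lines may therefore be evaluated differently by the crawlers themselves.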

If AI crawlers can't read your pages, your content doesn't exist from their perspective -- regardless of how good it is.

Check your robots.txt file. Make sure you're not accidentally blocking AI crawlers. Look at your server logs for crawler activity. If you're on a platform like Promptwatch, the AI Crawler Logs feature shows you exactly which pages each AI crawler has visited, how often, and what errors they encountered.
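Without a dedicated tool, you can get a first pass over raw access logs with a short script. This sketch assumes the Apache/Nginx "combined" log format and matches crawlers by user-agent substring. The sample log lines are invented. User-agent strings can also be spoofed, so for anything important, verify requests against the IP ranges some vendors publish.

```python
import re
from collections import Counter

# Combined log format:
# ip ident user [time] "METHOD path PROTOCOL" status bytes "referer" "user-agent"
LOG_LINE = re.compile(
    r'^\S+ \S+ \S+ \[[^\]]+\] "(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

# Example user-agent tokens; confirm current names in each vendor's docs.
AI_CRAWLERS = ("GPTBot", "OAI-SearchBot", "ChatGPT-User", "PerplexityBot", "ClaudeBot")


def crawler_activity(lines):
    """Count AI-crawler requests and error responses (status >= 400) per (crawler, path)."""
    hits, errors = Counter(), Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if not match:
            continue  # skip lines that aren't in combined format
        agent = match.group("agent").lower()
        crawler = next((c for c in AI_CRAWLERS if c.lower() in agent), None)
        if crawler is None:
            continue  # not one of the tracked AI crawlers
        key = (crawler, match.group("path"))
        hits[key] += 1
        if int(match.group("status")) >= 400:
            errors[key] += 1
    return hits, errors


sample_log = [
    '203.0.113.5 - - [10/Mar/2026:12:00:00 +0000] "GET /blog/ai-audit HTTP/1.1" 200 5120 "-" "Mozilla/5.0; compatible; GPTBot/1.1"',
    '203.0.113.6 - - [10/Mar/2026:12:05:00 +0000] "GET /pricing HTTP/1.1" 404 0 "-" "Mozilla/5.0; compatible; PerplexityBot/1.0"',
    '198.51.100.7 - - [10/Mar/2026:12:06:00 +0000] "GET /blog/ai-audit HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]
hits, errors = crawler_activity(sample_log)
print(dict(hits))    # {('GPTBot', '/blog/ai-audit'): 1, ('PerplexityBot', '/pricing'): 1}
print(dict(errors))  # {('PerplexityBot', '/pricing'): 1}
```

Pages that never show up in `hits`, or that show up mostly in `errors`, are the ones to investigate first.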

Question 9: Is your content being published in the right places?

Your blog is not the only place AI models look for information about your category.

Reddit is a significant source. YouTube transcripts get indexed. Industry publications, comparison sites, and community forums all feed into the training data and live web access that AI models use. If your content strategy is entirely blog-centric, you're missing a lot of surface area.

Before publishing your next piece, ask: where do people in my audience actually discuss this topic? Where do the AI models seem to pull citations from when I ask questions in my category?

The answer might be that you need a Reddit presence, a YouTube channel, or guest posts on industry publications -- not just more blog content on your own domain.

Question 10: How will you know if this content improved your AI visibility?

This is the question most brands skip entirely -- and it's the most important one.

Publishing content without a way to measure its impact on AI visibility is like running ads without tracking conversions. You might be doing the right things, or you might be wasting your budget. You genuinely won't know.

Before you publish, decide: which AI models will you check for mentions? Which prompts are you trying to rank for? What's your baseline visibility score today? How will you attribute traffic from AI search to specific pages?

Tools that track AI visibility over time -- monitoring which prompts you appear in, which pages get cited, and how your visibility changes after new content goes live -- are what turn content publishing from guesswork into a measurable program.
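One simple way to define a baseline "visibility score" is the share of (model, prompt) checks in which your brand is mentioned. This is a hypothetical definition, not an industry standard. The sketch computes the score overall and per model, so you can compare a baseline against a re-check after publishing. The data is invented. AI answers can also vary from run to run, so repeat each check several times before reading much into a change.

```python
from collections import defaultdict

# One record per check: (model, prompt, brand_was_mentioned).
Check = tuple[str, str, bool]


def visibility_score(checks: list[Check]) -> float:
    """Share of checks in which the brand was mentioned (0.0 to 1.0)."""
    if not checks:
        return 0.0
    return sum(mentioned for _, _, mentioned in checks) / len(checks)


def score_by_model(checks: list[Check]) -> dict[str, float]:
    """The same score, broken out per AI model."""
    grouped: dict[str, list[Check]] = defaultdict(list)
    for check in checks:
        grouped[check[0]].append(check)
    return {model: visibility_score(group) for model, group in grouped.items()}


prompts = ["best exit-intent popup tool", "reduce cart abandonment shopify"]
baseline = [("chatgpt", prompts[0], False), ("chatgpt", prompts[1], True),
            ("perplexity", prompts[0], False), ("perplexity", prompts[1], False)]
after = [("chatgpt", prompts[0], True), ("chatgpt", prompts[1], True),
         ("perplexity", prompts[0], False), ("perplexity", prompts[1], True)]

print(visibility_score(baseline), visibility_score(after))  # 0.25 0.75
print(score_by_model(after))  # {'chatgpt': 1.0, 'perplexity': 0.5}
```

A rising score after a piece goes live is a signal, not proof that the piece caused it. Pair it with page-level citation data where you have it.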

Putting it all together: a pre-publish checklist

Running through 10 questions before every article sounds like a lot. In practice, most of these become quick checks once you've done the initial audit. Here's a condensed version you can use as a pre-publish checklist:

| Question | What to check | Red flag |
|---|---|---|
| 1. Current visibility | Does ChatGPT mention you for key prompts? | No mentions at all |
| 2. Brand description | Is the description accurate and specific? | Vague or wrong |
| 3. Competitor gaps | Which prompts are competitors winning? | No gap analysis done |
| 4. Content quality | Does it answer the question directly? | Keyword-padded, buries the answer |
| 5. Third-party coverage | Are credible sources discussing you? | Only your own site |
| 6. Full question coverage | Does it address sub-questions? | Covers only one angle |
| 7. Category association | Is your brand clearly tied to a specific problem? | Generalist positioning |
| 8. Crawler access | Can AI crawlers actually read your pages? | Blocked or unindexed |
| 9. Distribution | Are you publishing in the right places? | Blog-only strategy |
| 10. Measurement | How will you track visibility changes? | No tracking in place |

The bigger shift this audit represents

Running this audit isn't really about ChatGPT specifically. It's about recognizing that content now needs to serve two audiences: the humans who read it and the AI models that synthesize it into recommendations.

Those two audiences want the same things: clear answers, credible sources, specific expertise, and content that actually addresses the question being asked. The brands that get this right aren't doing anything exotic. They're just being genuinely useful at scale, in the right places, with enough consistency that AI models can reliably associate them with the problems they solve.

The audit is just a forcing function to make sure you're doing that before you publish -- not after you wonder why the traffic didn't come.