Key takeaways

- Ranking in ChatGPT is fundamentally different from ranking in Google — AI models cite sources, not pages, so your content needs to be structured to be quotable

- Traditional SEO tools (Semrush, Ahrefs, Surfer) are still useful for foundational work but don't tell you whether you're actually appearing in AI-generated answers

- A new category of GEO (Generative Engine Optimization) tools emerged in 2025 specifically to track and improve AI visibility — and they vary wildly in depth

- The tools that delivered real results combined monitoring with content gap analysis and actual content creation, not just dashboards

- In 2026, the winning stack combines a solid traditional SEO foundation with a dedicated AI visibility platform that closes the loop from gap to content to tracking

Why "ranking in ChatGPT" is a different problem

If you spent 2024 and early 2025 trying to figure out why your Google rankings weren't translating into ChatGPT mentions, you're not alone. The mechanics are genuinely different, and most SEO tools weren't built for this.

Google ranks pages. ChatGPT cites sources. That distinction sounds subtle, but it changes everything about your strategy.

When someone asks ChatGPT a question, the model doesn't return a ranked list of pages. It generates a response from its training data, from content retrieved at query time when browsing or search is enabled (as in Perplexity or ChatGPT with browsing), and from what it "knows" about authoritative sources. To appear in that response, your content needs to be:

- Structured clearly enough that an AI can extract a direct answer

- Authoritative enough that the model associates your domain with the topic

- Specific enough to match how people actually phrase their prompts to AI tools

None of the traditional rank trackers tell you any of this. That's the gap that defined 2025 SEO.

What the traditional tools got right (and where they fell short)

Let's be honest: Semrush, Ahrefs, Surfer SEO, and their peers are still genuinely useful. They didn't become irrelevant overnight.

What they got right in 2025:

- Keyword research and search intent analysis still matter for creating content AI models want to cite

- Backlink profiles still influence domain authority, which in turn appears to influence how much AI models trust a source

- On-page optimization tools like Surfer SEO helped writers produce content that's semantically rich and well-structured — both good for Google and for AI readability

- Technical SEO (crawlability, page speed, schema markup) remained a prerequisite for AI crawlers to even find your content

Where they fell short:

- None of them could tell you whether ChatGPT was mentioning your brand in response to a specific prompt

- Keyword rankings in Google don't correlate cleanly with AI citation frequency

- They had no visibility into which prompts your competitors were winning in AI answers

- Ahrefs Brand Radar added some AI monitoring but uses fixed prompts — you can't customize them to your actual customer questions

The honest summary: traditional SEO tools are table stakes, not the solution. You still need them. But if you stopped there in 2025, you were flying blind on AI visibility.

The tools that actually moved the needle in 2025

AI visibility and GEO platforms

This is where the real action was. A new category of tools emerged specifically to track brand mentions in AI-generated answers, and by mid-2025 there were dozens of them. Quality varied enormously.

The monitoring-only tools — platforms that show you a dashboard of where you appear in AI answers but don't help you do anything about it — were useful for awareness but limited for actual improvement. Knowing you're invisible in ChatGPT is step one. Knowing what to do about it is step two. Most tools stopped at step one.

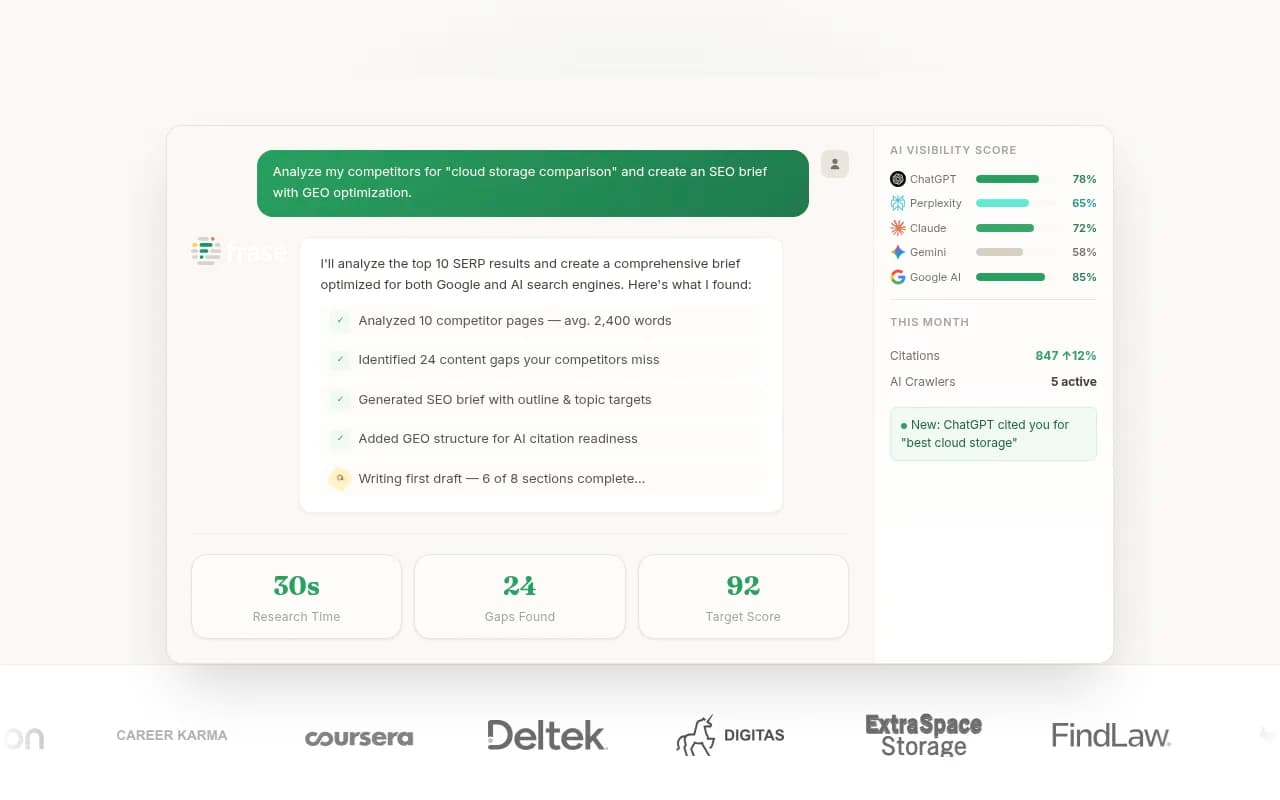

Promptwatch stood out as one of the few platforms that completed the loop. It tracks visibility across 10 AI models (ChatGPT, Claude, Perplexity, Gemini, Grok, DeepSeek, and more), but more importantly, it shows you the specific prompts where competitors are getting cited and you're not — and then helps you generate content designed to close those gaps. That combination of gap analysis, content generation grounded in citation data, and page-level tracking is what made it the most actionable platform in the category.
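To make "prompts where competitors are getting cited and you're not" concrete, here is a minimal sketch of that gap check. It is not how Promptwatch or any other platform implements it. The function name, the domains, and the sample data are all hypothetical, and it assumes you already have a mapping from each prompt to the domains cited in the answer.

```python
def citation_gaps(citations, own_domain, competitors):
    """Return {prompt: [competitors cited]} for prompts where own_domain is absent.

    `citations` maps a prompt to the collection of domains cited in the AI answer.
    """
    gaps = {}
    for prompt, cited in citations.items():
        rivals = sorted(set(cited) & set(competitors))
        if rivals and own_domain not in cited:
            gaps[prompt] = rivals
    return gaps


# Hypothetical sample data, for illustration only.
SAMPLE_CITATIONS = {
    "best crm for startups": {"rival-a.example", "rival-b.example"},
    "crm pricing comparison": {"mysite.example", "rival-a.example"},
    "how to migrate crm data": {"unrelated.example"},
}

gaps = citation_gaps(
    SAMPLE_CITATIONS,
    own_domain="mysite.example",
    competitors={"rival-a.example", "rival-b.example"},
)
for prompt, rivals in gaps.items():
    print(f"{prompt}: cited instead of you -> {', '.join(rivals)}")
```

Each prompt in the result is a candidate topic for new or restructured content.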

Other platforms worth knowing:

Otterly.AI is a solid entry-level option for teams that just want to start monitoring AI visibility without a big investment. It's affordable and straightforward, but it's monitoring-only — no content gap analysis, no crawler logs, no content generation.

Profound AI targets enterprise brands with a strong feature set and clean reporting. The price point reflects that. If you're a Fortune 500 brand with a dedicated SEO team, it's worth evaluating. For mid-market teams, it may be more than you need.

Peec AI is worth a look if you're operating across multiple languages and regions. Multi-language AI visibility is genuinely hard to do well, and Peec handles it better than most.

AthenaHQ covers monitoring across 8+ AI search engines and has clean UX, but like many competitors, it's primarily a tracking tool rather than an optimization platform.

Content optimization tools

Getting cited by AI models requires content that's actually good — specific, well-structured, and directly answering the questions people ask. These tools helped teams produce that kind of content at scale.

Clearscope remained one of the best tools for semantic content optimization in 2025. Its grading system pushes writers to cover a topic comprehensively, which happens to be exactly what AI models want when deciding what to cite.

MarketMuse goes deeper on content strategy — it shows you topical authority gaps across your entire site, not just individual pages. For teams trying to become the definitive source on a topic (which is how you get consistently cited by AI), this kind of site-wide view is genuinely valuable.

Frase is a practical middle ground: content briefs, SERP analysis, and AI-assisted writing in one tool. It's less expensive than MarketMuse and faster to get started with. The AI writing quality improved significantly through 2025.

NeuronWriter uses NLP-based content scoring similar to Clearscope but at a lower price point. It's popular with freelancers and smaller teams who want semantic optimization without the enterprise price tag.

Technical SEO and crawlability

AI models can't cite content they can't find. Technical SEO became even more important in 2025 because AI crawlers (GPTBot, ClaudeBot, PerplexityBot) have their own crawl patterns, and many sites were inadvertently blocking them.
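A quick way to find out whether you are one of those sites is to test your robots.txt against the crawler names above. The sketch below uses Python's standard `urllib.robotparser`. The sample robots.txt and URL are hypothetical, and the user-agent tokens are the ones named in this article, so check each vendor's documentation for the current list.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens named in the article; vendors may add or rename crawlers.
AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot"]


def blocked_ai_crawlers(robots_txt: str, url: str) -> list[str]:
    """Return the AI crawlers that this robots.txt disallows from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in AI_CRAWLERS if not parser.can_fetch(bot, url)]


# Hypothetical robots.txt that blocks GPTBot site-wide and everyone from /admin/.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

print(blocked_ai_crawlers(SAMPLE_ROBOTS, "https://example.com/blog/post"))
```

To run it against a live site, you could fetch the real file with `RobotFileParser.set_url()` and `read()` instead of parsing a string.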

Botify added AI search visibility features to its already strong enterprise crawl platform. For large sites with complex architectures, it's one of the few tools that can show you how AI crawlers are actually navigating your site versus how Google does.

Google Search Console remains free and essential. It doesn't track AI visibility directly, but it's still the best source of truth for crawl errors, indexing status, and organic performance — all of which feed into your AI visibility foundation.

Prerender.io became increasingly relevant for JavaScript-heavy sites. If your content is rendered client-side, AI crawlers often can't read it. Prerender solves that by serving pre-rendered HTML to bots, including AI crawlers.
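You can get a rough read on whether this affects you by checking if your key content exists in the raw HTML, before any JavaScript runs. The following is a sketch under simple assumptions, not a description of how Prerender.io or any AI crawler works. It treats "visible to a non-rendering bot" as "text outside `<script>` and `<style>` tags", and the sample pages and phrases are hypothetical.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect text outside <script> and <style> tags."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def missing_phrases(raw_html, phrases):
    """Return the phrases that do not appear in the page's non-script text."""
    parser = VisibleText()
    parser.feed(raw_html)
    parser.close()
    text = " ".join(parser.chunks).lower()
    return [p for p in phrases if p.lower() not in text]


# Hypothetical pages: a client-rendered shell and a pre-rendered equivalent.
CLIENT_RENDERED = '<html><body><div id="root"></div><script>render("Plans start at $99")</script></body></html>'
PRERENDERED = "<html><body><h1>Plans start at $99</h1></body></html>"

print(missing_phrases(CLIENT_RENDERED, ["Plans start at $99"]))
print(missing_phrases(PRERENDERED, ["Plans start at $99"]))
```

If a phrase that matters to you comes back as missing from the raw HTML, that page is a candidate for pre-rendering or server-side rendering.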

Content creation at scale

Once you know what content gaps to fill, you need to produce that content efficiently. The tools that worked best in 2025 were the ones that combined AI writing with SEO context — not just generic AI text generation.

Content at Scale produces long-form content that's designed to pass AI detection and rank in search. It's not the cheapest option, but the output quality is consistently higher than most pure AI writers.

Jasper remained a strong choice for marketing teams that need brand-consistent content at volume. Its SEO integrations (Surfer SEO, Google Search Console) make it more useful than a standalone AI writer.

Scalenut combines keyword research, content briefs, and AI writing in one workflow. It's particularly good for teams that want to go from "keyword idea" to "published article" without switching between multiple tools.

The comparison: which tool for which job

| Tool | Best for | AI visibility tracking | Content generation | Price range |
|---|---|---|---|---|
| Promptwatch | Full GEO optimization loop | Yes (10 models) | Yes (AI writing agent) | $99-$579/mo |
| Otterly.AI | Entry-level AI monitoring | Yes (limited) | No | Low |
| Profound AI | Enterprise AI visibility | Yes | No | High |
| Semrush | Traditional SEO + basic AI | Partial | Yes (ContentShake) | $130+/mo |
| Surfer SEO | On-page content optimization | No | Yes | $89+/mo |
| Clearscope | Semantic content optimization | No | No | $170+/mo |
| MarketMuse | Topical authority strategy | No | Yes | $149+/mo |
| Frase | Content briefs + AI writing | No | Yes | $15+/mo |
| Botify | Enterprise technical SEO | Partial | No | Enterprise |
| Content at Scale | Long-form AI content | No | Yes | $250+/mo |

What the Reddit SEO community was actually using

The r/AskMarketing and r/SEO communities in 2025 were pretty honest about the gap between what vendors promised and what practitioners actually found useful.

A few consistent themes:

Beginners gravitated toward Ubersuggest and Mangools for keyword research because they're genuinely less overwhelming than Semrush or Ahrefs. That's a fair assessment: Semrush and Ahrefs both have steep learning curves.

More experienced practitioners were mixing traditional tools with newer GEO platforms, often running Semrush or Ahrefs for foundational research alongside a dedicated AI visibility tracker. The consensus was that no single tool covered everything yet.

There was also real skepticism about AI content tools producing generic, low-quality output. The practitioners who got good results were using AI writing tools as a starting point, not a finished product — and they were feeding those tools with specific briefs grounded in real search and citation data rather than just asking for "an article about X."

Building a practical stack for 2026

Here's what a sensible stack looks like now, organized by team size:

Solo practitioners and small teams

- Google Search Console (free, non-negotiable)

- Ubersuggest or Mangools for keyword research

- Frase or NeuronWriter for content optimization

- Otterly.AI or a basic tier of Promptwatch for AI visibility monitoring

Total cost: roughly $50-150/month. This covers the basics without overcomplicating things.

Mid-market marketing teams

- Semrush or Ahrefs for comprehensive SEO research

- Surfer SEO or Clearscope for content optimization

- Promptwatch Professional for AI visibility tracking, gap analysis, and content generation

- Google Search Console + Promptwatch's traffic attribution for connecting visibility to revenue

Total cost: roughly $400-700/month. The key addition here is a platform that actually helps you act on AI visibility data, not just observe it.

Enterprise teams and agencies

- Semrush or Ahrefs at scale

- MarketMuse for topical authority planning

- Botify for technical SEO at scale

- Promptwatch Business or Enterprise for multi-site AI visibility, crawler logs, and custom reporting

- Looker Studio integration for stakeholder reporting

The enterprise tier is where the depth of Promptwatch's data (1.1B+ citations analyzed, crawler logs showing exactly which AI bots are hitting which pages) becomes genuinely differentiating versus lighter-weight monitoring tools.
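If you have access to your own server logs, you can approximate a basic version of this view yourself. The sketch below is not Promptwatch's method. It assumes access logs in the common "combined" format, matches bots by a simple substring in the user-agent field, and uses made-up log lines.

```python
import re
from collections import Counter

AI_BOTS = ("GPTBot", "ClaudeBot", "PerplexityBot")

# Pulls the request path and the user-agent field out of a combined-format log line.
LINE_RE = re.compile(
    r'"(?:GET|HEAD|POST) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def ai_bot_hits(log_lines):
    """Count requests per (bot, path) for known AI crawler user agents."""
    hits = Counter()
    for line in log_lines:
        match = LINE_RE.search(line)
        if not match:
            continue
        agent = match.group("agent").lower()
        for bot in AI_BOTS:
            if bot.lower() in agent:
                hits[(bot, match.group("path"))] += 1
                break
    return hits


# Made-up log lines for illustration.
SAMPLE_LOG = [
    '203.0.113.5 - - [10/Jan/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.2)"',
    '203.0.113.5 - - [10/Jan/2026:10:05:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.2)"',
    '198.51.100.7 - - [10/Jan/2026:10:06:00 +0000] "GET /blog/geo HTTP/1.1" 200 9000 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '192.0.2.9 - - [10/Jan/2026:10:07:00 +0000] "GET /blog/geo HTTP/1.1" 200 9000 "-" "Mozilla/5.0 (Windows NT 10.0) Chrome/120.0"',
]

for (bot, path), count in sorted(ai_bot_hits(SAMPLE_LOG).items()):
    print(f"{bot:15} {path:12} {count}")
```

User-agent strings can be spoofed, so treat counts like these as a rough signal, not as verified crawler traffic.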

The one thing most teams got wrong in 2025

They treated AI visibility as a separate project from their regular content strategy.

The teams that saw real results figured out that the same content that gets cited by ChatGPT also tends to rank well in Google. Comprehensive, well-structured, genuinely useful content that directly answers specific questions performs across both channels. The difference is that for AI visibility, you need to be more deliberate about which questions you're answering and whether your content is structured so that an AI can easily extract a clean answer.

That means:

- Clear headings that match how people actually phrase questions

- Direct answers near the top of sections, not buried in paragraphs

- Specific data, examples, and claims that give AI models something concrete to cite

- Schema markup that helps AI crawlers understand what your content is about

None of this requires a complete overhaul of your content strategy. It's mostly about being more intentional with structure and specificity.
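As one example of the schema markup point, here is a small sketch that turns question-and-answer pairs into schema.org `FAQPage` JSON-LD. The types and properties (`FAQPage`, `Question`, `acceptedAnswer`, `Answer`) come from the schema.org vocabulary. The helper name and the sample question are hypothetical, and whether a given AI crawler actually uses this markup is an assumption, not something this snippet can confirm.

```python
import json


def faq_jsonld(qa_pairs):
    """Build a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }
    return json.dumps(data, indent=2)


# Hypothetical question and answer, phrased the way a user might prompt an AI tool.
SAMPLE_FAQ = [
    ("What is Generative Engine Optimization?",
     "GEO is the practice of improving how often a brand is cited in AI-generated answers."),
]

markup = faq_jsonld(SAMPLE_FAQ)
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The printed `<script>` element would go in the page's HTML alongside the visible question and answer it describes.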

Where things are heading

The line between "SEO tool" and "AI visibility tool" is blurring fast. Semrush and Ahrefs are both adding AI monitoring features. Pure-play GEO platforms are adding content creation. The category is consolidating.

What's becoming clear is that the platforms with the best data win. Tracking AI visibility requires running thousands of prompts across multiple models, analyzing the responses, and building a picture of who's getting cited and why. That's expensive to do well, which is why the quality gap between the leading platforms and the lightweight trackers is significant.
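The analysis step can be illustrated at toy scale. The sketch below computes a "mention rate" (the share of collected responses that name each brand). It assumes the responses have already been gathered by some other process. The brand names and response texts are invented, and real platforms almost certainly use more than whole-word matching.

```python
import re
from collections import Counter


def mention_rates(responses, brands):
    """Fraction of responses that mention each brand at least once.

    Matching is case-insensitive and on whole words only.
    """
    counts = Counter()
    for text in responses:
        for brand in brands:
            if re.search(rf"\b{re.escape(brand)}\b", text, re.IGNORECASE):
                counts[brand] += 1
    total = len(responses)
    return {brand: (counts[brand] / total if total else 0.0) for brand in brands}


# Invented responses standing in for answers collected from several AI models.
SAMPLE_RESPONSES = [
    "For small teams, Acme and Globex are both popular choices.",
    "Many reviewers recommend Globex for its reporting.",
    "Acme is often cited for ease of use.",
    "There is no single best tool; it depends on your budget.",
]

for brand, rate in mention_rates(SAMPLE_RESPONSES, ["Acme", "Globex", "Initech"]).items():
    print(f"{brand}: {rate:.0%}")
```

Scaling this from four responses to thousands of prompts across multiple models, repeated over time, is where the cost described above comes from.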

For most teams in 2026, the practical question isn't "should I care about AI visibility" — it's "which platform gives me the best combination of data depth and actionability for my budget." The answer to that depends on your team size, but the direction is clear: monitoring alone isn't enough. You need tools that help you close the gap.