Key takeaways

- AI models favor content that gives direct, specific answers -- not content that buries the point in preamble

- Structure matters enormously: question-answer formats, clear entity definitions, and logical chunking all improve citation rates

- Depth beats length -- thorough topic coverage signals authority, but padding does not

- Original data, named frameworks, and first-person expertise are among the strongest citation triggers

- Tracking which pages AI models actually cite (and which they ignore) is the only way to close the loop and improve over time

Getting cited by an AI model is not the same as ranking on Google. The signals are different, the format preferences are different, and the content that wins looks noticeably different from what dominated search results five years ago.

This guide is about writing content that ChatGPT, Perplexity, Claude, Gemini, and similar models actually pull from when answering user questions. Not content that could theoretically be cited -- content that is cited, repeatedly, across multiple models.

Here's what actually works.

Why AI models cite some content and skip most of it

AI models don't browse the web the way a human does. They're pattern-matching machines trained on enormous corpora, and when they generate a response, they're looking for sources that clearly, confidently, and specifically answer the question at hand.

The content they skip tends to share a few traits: vague introductions, hedged claims, no clear structure, and nothing that couldn't be found in a hundred other places. The content they cite tends to be specific, well-organized, and authoritative in a way that's immediately obvious.

Think of it this way: if you were an AI model trying to answer "what is the average open rate for B2B email campaigns," you'd want a source that says "B2B email open rates average 21.5% according to Mailchimp's 2025 benchmark report" -- not a source that says "email marketing remains an important channel for businesses looking to engage their audiences."

One of those is citable. The other is filler.

Start with a direct answer, every time

The single most common mistake in content that doesn't get cited: burying the answer.

AI models are extracting information to synthesize into a response. If your answer to a question is in paragraph seven, after three paragraphs of context-setting and two paragraphs of "why this matters," the model may never find it -- or may find a competitor's cleaner version first.

The fix is simple: answer the question in the first sentence or two, then provide context and depth below it. This mirrors the inverted pyramid structure journalists have used for decades, and it works just as well for AI citation as it does for skimmable news articles.

Bad opening: "In today's rapidly evolving digital landscape, understanding AI search behavior has become increasingly important for content marketers who want to stay competitive."

Good opening: "AI models cite content that gives direct, specific answers near the top of the page -- not content that buries the point in introductory paragraphs."

The second version is extractable. The first is not.

Structure your content so AI can chunk it

LLMs process content in chunks. They're looking for discrete, self-contained units of information they can extract and synthesize. Content that's structured to support this gets cited more often.

Practical ways to do this:

- Use clear H2 and H3 headings that describe exactly what the section covers (not clever wordplay)

- Write paragraphs that can stand alone -- each one should contain a complete thought

- Use Q&A formatting for sections that address specific questions your audience asks

- Keep list items specific and factual, not vague and general

- Define terms explicitly when you introduce them ("Entity salience refers to how clearly a piece of content establishes the identity and context of the people, places, and concepts it discusses")

The goal is to make it easy for a model to extract a clean, accurate answer without needing to read your entire article.
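One rough way to test whether your sections stand alone is to split a draft at its H2/H3 headings and look at each piece in isolation. The sketch below assumes the draft is in Markdown and that a chunk opening with a word like "This" or "They" probably leans on the previous section. Both the splitting rule and the `DEPENDENT_OPENERS` list are illustrative assumptions, not a description of how any particular model chunks pages.

```python
import re

# Matches Markdown H2 and H3 headings only ("## ..." or "### ...").
HEADING_RE = re.compile(r"^(#{2,3})\s+(.*)$")

# Hypothetical heuristic: openers that usually depend on earlier text.
DEPENDENT_OPENERS = ("this ", "that ", "these ", "those ", "it ", "they ", "as mentioned")


def chunk_by_headings(markdown_text):
    """Split Markdown into (heading, body) chunks at H2/H3 boundaries.

    Sections with an empty body are dropped.
    """
    chunks = []
    heading, body_lines = "(intro)", []
    for line in markdown_text.splitlines():
        match = HEADING_RE.match(line)
        if match:
            body = "\n".join(body_lines).strip()
            if body:
                chunks.append((heading, body))
            heading, body_lines = match.group(2).strip(), []
        else:
            body_lines.append(line)
    body = "\n".join(body_lines).strip()
    if body:
        chunks.append((heading, body))
    return chunks


def needs_context(body):
    """Flag a chunk whose opening words likely lean on earlier text."""
    return body.lower().startswith(DEPENDENT_OPENERS)


SAMPLE = """## What is entity salience?
Entity salience refers to how clearly a page establishes who and what it discusses.

## Why it matters
This is why vague pages get skipped.
"""

for heading, body in chunk_by_headings(SAMPLE):
    status = "needs context" if needs_context(body) else "stands alone"
    print(f"{heading}: {status}")
```

A flagged chunk is a prompt to reread the section, not a verdict. Plenty of good paragraphs start with "This."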

Tools like Clearscope and Surfer SEO can help you analyze whether your content covers a topic comprehensively enough, while tools like Frase are useful for structuring content around the questions your audience is actually asking.

Go deep, not just long

There's a persistent myth that longer content ranks better. In practice, length is only a rough proxy for depth, and depth is what actually matters -- to both traditional search engines and AI models.

Shallow content that hits 3,000 words by repeating itself doesn't get cited. Thorough content that covers every meaningful angle of a topic in 1,200 words does.

"Thorough" means:

- Covering the main question and the adjacent questions someone would naturally ask

- Addressing common misconceptions or edge cases

- Including specific numbers, examples, or case studies rather than vague generalizations

- Explaining why something is true, not just that it's true

A useful test: after writing a section, ask yourself "could someone read this and still not know how to apply it?" If yes, it's not thorough enough.

MarketMuse is worth looking at here -- it analyzes topic coverage and shows you which subtopics your content is missing compared to what's already ranking.

Use original data and named frameworks

This is one of the most underused citation triggers. AI models love citing specific data points, and they especially love citing data that can only be traced back to one source.

If you publish original research -- a survey, an analysis of your own platform's data, a benchmark report -- you create something that is inherently unique and citable. Every time another piece of content references your data, you become the primary source. AI models follow the same citation logic.

Named frameworks work similarly. If you develop a specific methodology and give it a name (say, "the Content Citation Loop" or "the Answer-First Framework"), you create a concept that can only be attributed to you. Other writers will reference it. AI models will cite it.

This is what thought leadership actually means in practice -- not writing about trends, but creating the concepts other people use to describe those trends.

Write with entity clarity

AI models organize what they know around entities -- specific people, companies, products, places, and concepts -- and the relationships between them, much like a knowledge graph.

Content that's vague about entities is harder to cite accurately. Content that's specific gets placed correctly in the model's understanding of the world.

Practical implications:

- Name the specific tool, company, or person you're discussing rather than using pronouns or vague references

- When you introduce a concept, define it explicitly rather than assuming the reader knows what you mean

- Include context that helps a model understand who you are and why you're a credible source on this topic (your role, your experience, your data)

- Link to authoritative sources when you reference external claims -- this signals that your content is grounded in verifiable information
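One common way to state entities explicitly in machine-readable form is schema.org JSON-LD. The sketch below builds a minimal `Article` object that names the author, their role, the publisher, and the entities the page is about. The schema.org types and properties are real, but every value passed in (the author, the company, the date) is a hypothetical placeholder, and the `article_jsonld` helper is invented for this example.

```python
import json


def article_jsonld(headline, author_name, author_role, publisher, about, date_published):
    """Build a minimal schema.org Article description as a plain dict."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": date_published,
        "author": {"@type": "Person", "name": author_name, "jobTitle": author_role},
        "publisher": {"@type": "Organization", "name": publisher},
        # Each entity the page discusses, named explicitly.
        "about": [{"@type": "Thing", "name": name} for name in about],
    }


# All values below are placeholders for illustration only.
markup = article_jsonld(
    headline="How to write content AI models cite",
    author_name="Jane Example",
    author_role="Head of Content",
    publisher="Example Co",
    about=["AI search", "content marketing"],
    date_published="2025-01-15",
)

# JSON-LD is embedded in the page inside a script tag of this type.
print('<script type="application/ld+json">')
print(json.dumps(markup, indent=2))
print("</script>")
```

The markup should mirror what the visible page already says. It is a way to restate your entities clearly, not a place to add claims the article doesn't make.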

Match the format to the query type

Different types of queries favor different content formats. Getting this right is one of the more nuanced parts of writing for AI citation.

| Query type | Best format | Example |
|---|---|---|
| Definition ("what is X") | Short direct definition + expansion | "X is... It works by... It differs from Y in..." |
| How-to ("how do I X") | Numbered steps with specific actions | Step 1: Do this. Step 2: Do that. |
| Comparison ("X vs Y") | Side-by-side table + narrative analysis | Table of key differences + when to use each |
| Best of ("best tools for X") | Structured list with specific criteria | Tool name, what it does, who it's for |
| Opinion/analysis | Clear thesis + supporting evidence | "The reason X matters is... Here's the data..." |

If your content format doesn't match what the query expects, it's less likely to be extracted cleanly -- even if the information is accurate.
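If you're planning content against a long list of target queries, the mapping from query type to format can be roughed out in a few lines. This is a keyword heuristic under stated assumptions: the regular expressions and the `suggest_format` function are made up for this example, and anything they don't recognize falls through to the opinion/analysis row. Real queries are messier, so treat the output as a first pass.

```python
import re

# (query type, pattern, recommended format). Order matters: the first match wins.
FORMAT_RULES = [
    ("comparison", re.compile(r"\bvs\.?\b|\bversus\b|\bcompared (?:to|with)\b"),
     "Side-by-side table + narrative analysis"),
    ("best of", re.compile(r"^(?:best|top)\b"),
     "Structured list with specific criteria"),
    ("how-to", re.compile(r"^how (?:do|to|can)\b"),
     "Numbered steps with specific actions"),
    ("definition", re.compile(r"^what (?:is|are)\b"),
     "Short direct definition + expansion"),
]

# Fallback when no pattern matches.
DEFAULT = ("opinion/analysis", "Clear thesis + supporting evidence")


def suggest_format(query):
    """Return (query_type, recommended_format) for a target query."""
    normalized = query.strip().lower()
    for query_type, pattern, recommended in FORMAT_RULES:
        if pattern.search(normalized):
            return query_type, recommended
    return DEFAULT


for q in ["What is entity salience?", "Clearscope vs Surfer SEO", "best tools for topic research"]:
    print(q, "->", suggest_format(q))
```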

Build topical authority, not just individual pages

AI models don't just evaluate individual pages. They evaluate sources. A website that covers a topic comprehensively and consistently is more likely to be cited across multiple queries than a website that has one good article on a topic surrounded by unrelated content.

This is topical authority, and it matters more in the AI search era than it did in traditional SEO.

Building it means publishing a cluster of related content that covers a topic from multiple angles -- the overview, the how-to, the comparison, the case study, the FAQ. Each piece reinforces the others and signals to AI models that your site is a reliable, comprehensive source on this subject.

Topical Map AI is a useful tool for planning this kind of content architecture before you start writing.

Write for humans first, AI second

This sounds obvious but gets violated constantly. Content written primarily to satisfy AI citation signals tends to be robotic, repetitive, and unpleasant to read. And ironically, AI models trained on human feedback tend to prefer content that humans actually find useful and engaging.

The practical version of this: write the best possible answer to the question, in a voice that sounds like a knowledgeable person talking. Then check that it's structured in a way that's easy to extract. Don't reverse the order.

Good writing is specific. It uses concrete examples. It has a point of view. It acknowledges complexity where complexity exists. It doesn't pad.

Tools like Hemingway App and Grammarly are useful for catching readability issues after you've written the substance.

The technical side: what AI crawlers actually see

Your content can be perfectly written and still not get cited if AI crawlers can't access it properly.

A few things worth checking:

- Make sure your robots.txt isn't blocking AI crawlers (GPTBot, ClaudeBot, PerplexityBot, etc.) if you want to be cited

- Ensure your pages load cleanly and don't rely on JavaScript rendering for the main content

- Use schema markup to help AI models understand the structure and context of your content

- Check that your most important pages are being crawled regularly, not just indexed once and forgotten
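The robots.txt check is easy to script. The sketch below uses Python's standard-library `urllib.robotparser` to test whether the crawler names mentioned above may fetch a given URL. The sample robots.txt and the example.com URL are made up for illustration, and the `crawler_access` helper is a name invented here.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens named in this guide; extend the tuple for others you care about.
AI_CRAWLERS = ("GPTBot", "ClaudeBot", "PerplexityBot")


def crawler_access(robots_txt, url, user_agents=AI_CRAWLERS):
    """Return {user_agent: allowed} for a URL, given robots.txt content as text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in user_agents}


# Hypothetical robots.txt that blocks GPTBot entirely.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /private/
"""

result = crawler_access(SAMPLE_ROBOTS, "https://example.com/blog/post")
for agent, allowed in result.items():
    print(f"{agent}: {'allowed' if allowed else 'BLOCKED'}")
```

To check a live site instead of a pasted file, `RobotFileParser` can fetch the file itself via `set_url(...)` followed by `read()`. Keep in mind this only tells you what the file permits, not whether a crawler actually visits.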

Most content teams don't have visibility into which pages AI crawlers are actually visiting and how often. This is a real blind spot -- you can publish great content and have no idea whether AI models are even seeing it.

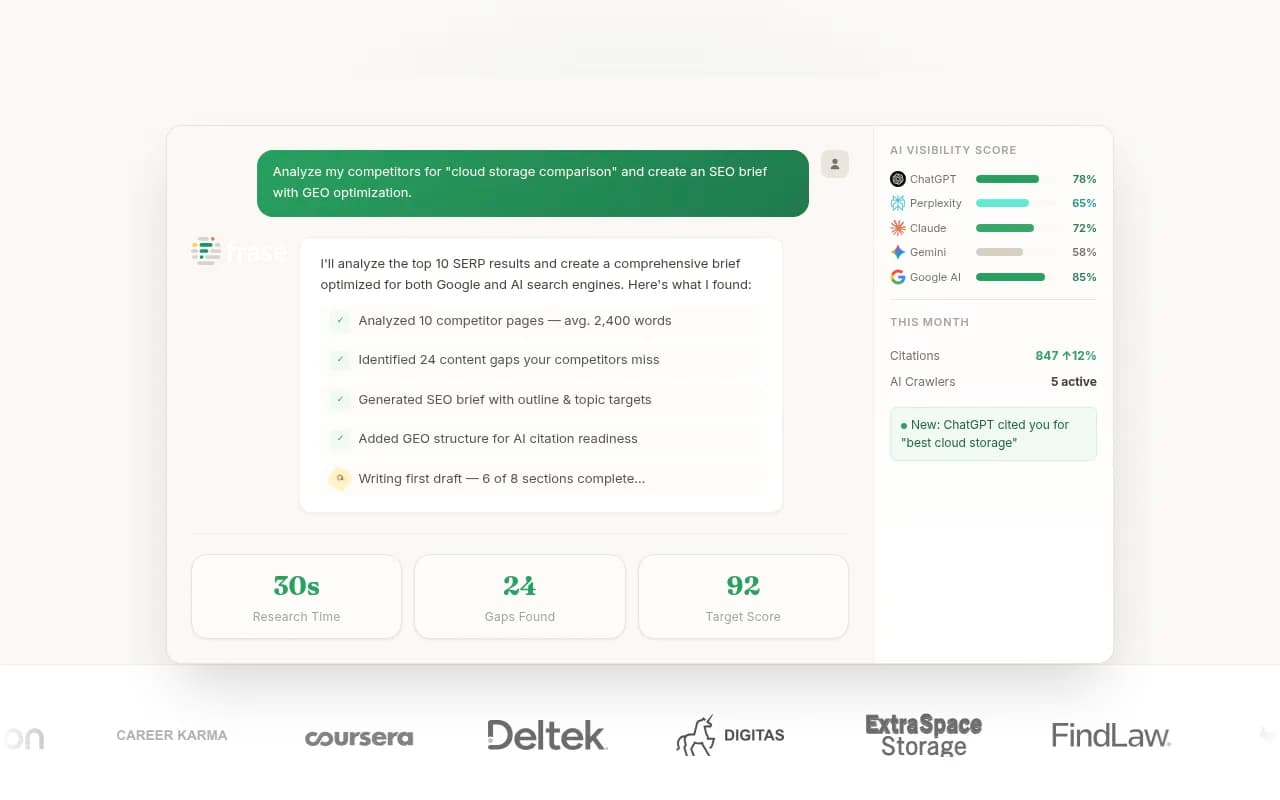

Promptwatch has a crawler log feature that shows you exactly which pages ChatGPT, Claude, Perplexity, and other AI crawlers are hitting, how often they return, and any errors they encounter. It's one of the few ways to actually verify that your content is being discovered, not just assume it is.
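If you don't have a dedicated tool, raw server access logs give a rougher version of the same view. The sketch below assumes logs in the common "combined" format and counts hits per crawler, path, and status code. The sample lines are fabricated for illustration, with simplified user-agent strings, and the regular expression is an assumption that may need adjusting for your server's log format.

```python
import re
from collections import Counter

# Substrings to look for in the User-Agent header (crawler names from this guide).
AI_CRAWLER_TOKENS = ("GPTBot", "ClaudeBot", "PerplexityBot")

# Assumes combined log format: ... "METHOD path proto" status bytes "referer" "agent"
LOG_LINE_RE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def count_ai_crawler_hits(log_lines):
    """Count requests per (crawler, path, status) for known AI crawler tokens."""
    hits = Counter()
    for line in log_lines:
        match = LOG_LINE_RE.search(line)
        if not match:
            continue  # Skip lines that don't fit the assumed format.
        agent = match.group("agent").lower()
        for token in AI_CRAWLER_TOKENS:
            if token.lower() in agent:
                hits[(token, match.group("path"), match.group("status"))] += 1
                break
    return hits


# Fabricated sample lines; real user-agent strings are longer.
SAMPLE_LOG = [
    '203.0.113.5 - - [01/Jan/2025:10:00:00 +0000] "GET /guide HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.5 - - [01/Jan/2025:11:00:00 +0000] "GET /guide HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.9 - - [01/Jan/2025:12:00:00 +0000] "GET /old-page HTTP/1.1" 404 312 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '198.51.100.7 - - [01/Jan/2025:12:30:00 +0000] "GET /guide HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
]

hits = count_ai_crawler_hits(SAMPLE_LOG)
for (crawler, path, status), count in hits.most_common():
    print(f"{crawler} {path} {status}: {count}")
```

Non-200 statuses are the rows to look at first: a crawler repeatedly hitting a 404 or 500 is requesting a page it can't read. User-agent strings can be spoofed, so treat these counts as indicative rather than exact.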

Measure what's actually getting cited

Writing for AI citation without tracking results is like running ads without conversion data. You're guessing.

The feedback loop matters: publish content, see which pages get cited by which models, identify what those pages have in common, apply those patterns to new content. Repeat.

This is harder than it sounds because most analytics tools don't capture AI-driven traffic clearly. A user who asks ChatGPT a question and then clicks through to your site may show up in your analytics as direct traffic or as a referral from chatgpt.com (previously chat.openai.com) -- but the connection between the citation and the visit is often invisible.
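A partial workaround is to bucket referrers yourself. The sketch below maps referrer hostnames to an assistant name. The host list is an assumption based on the public web addresses of these products and should be checked against what actually appears in your own analytics. The `classify_referrer` helper is hypothetical.

```python
from urllib.parse import urlparse

# Assumed referrer hosts; verify against your own referral data.
AI_REFERRER_HOSTS = {
    "chat.openai.com": "ChatGPT",
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
}


def classify_referrer(referrer):
    """Label a referrer URL as an AI assistant, 'direct', or 'other'."""
    if not referrer:
        return "direct"  # No referrer header at all.
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host, "other")


for ref in ["https://chat.openai.com/", "https://www.perplexity.ai/search?q=x", ""]:
    print(repr(ref), "->", classify_referrer(ref))
```

This only catches visits that arrive with a referrer. Visits where the referrer is stripped still land in the "direct" bucket, which is exactly the blind spot described above.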

Closing that loop requires tools built specifically for AI visibility tracking. Beyond Promptwatch, tools like Otterly.AI, Peec AI, and Profound offer varying levels of citation monitoring.

The difference worth knowing: most monitoring tools show you where you're visible and where you're not. Promptwatch goes further by showing you which specific prompts competitors are being cited for that you're missing, then helping you generate content to close those gaps. For teams that want to act on the data rather than just look at it, that distinction matters.

A practical checklist before you publish

Run through this before hitting publish on any piece of content you want AI models to cite:

- Does the page answer the primary question in the first two paragraphs?

- Are the headings descriptive and specific (not clever or vague)?

- Does each section contain a self-contained, extractable answer?

- Are all claims specific and attributed (no "experts say" or "studies show")?

- Is there at least one piece of information that can only be found here (original data, a named framework, a specific case study)?

- Are entities (people, companies, tools, concepts) named explicitly and defined clearly?

- Does the content format match the query type it's targeting?

- Is the page accessible to AI crawlers?

If you can check all of these, you've written content that gives AI models a reason to cite you. The rest is tracking, iterating, and doing it again.