Key takeaways

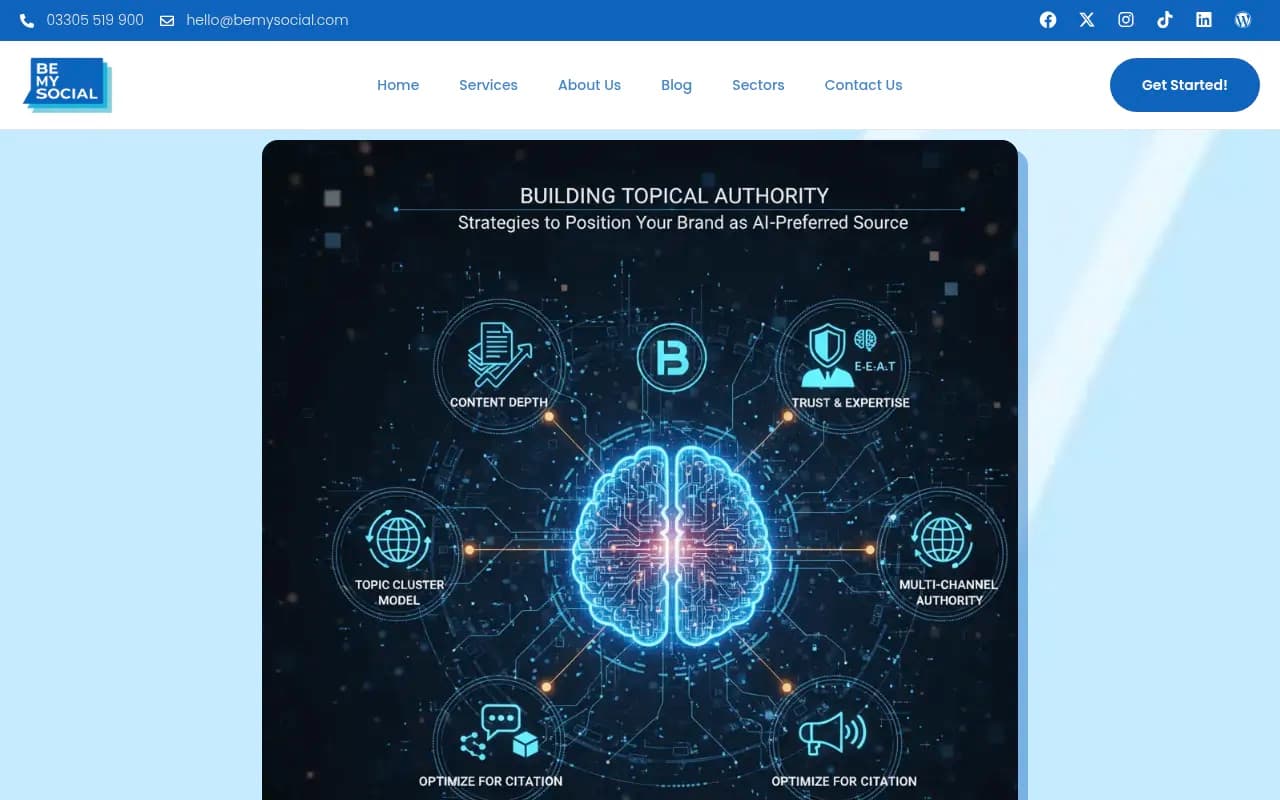

- Topical authority -- not backlinks or keyword density -- became the primary signal AI models like ChatGPT and Perplexity used to decide which sources to cite in 2025.

- Tools like Topical Map AI and MarketMuse helped brands map content gaps, build topic clusters, and produce the kind of depth that AI engines reward with citations.

- Zero-click searches now account for roughly 65% of all Google queries, meaning visibility in AI-generated answers matters more than ever for organic traffic.

- Building topical authority for AI search requires a different approach than traditional SEO: entity coverage, semantic depth, and consistent publishing across a topic cluster all matter more than domain authority alone.

- Tracking whether your content actually gets cited by AI models requires dedicated tooling -- traditional rank trackers won't show you this.

Why 2025 was the year topical authority finally took over

For years, SEOs debated whether topical authority would ever truly displace backlinks as the dominant ranking signal. In 2025, that debate ended.

The shift wasn't gradual. AI search engines -- ChatGPT, Perplexity, Claude, Google's AI Overviews -- don't rank pages the way Google's traditional algorithm does. They don't treat link counts as the deciding factor. They read content, assess whether a source demonstrates genuine expertise across a topic, and decide whether to cite it. The question they're effectively asking is: "Does this site actually know this subject, or is it just optimized for it?"

That's a fundamentally different question than "does this page have enough backlinks?" And it changed what brands needed to do to stay visible.

The brands that figured this out early -- and used the right tools to execute -- saw their citations in AI responses climb steadily through 2025. The ones that kept chasing keyword density and link building watched their AI visibility stagnate, even as their traditional rankings held.

What topical authority actually means for AI search

Topical authority isn't a new concept. It's been part of SEO thinking since Google's Hummingbird update back in 2013. But its application in AI search is different enough that it's worth being precise about what it means here.

In traditional SEO, topical authority is roughly "does this site have a lot of content about this subject?" In AI search, it's closer to "does this site cover this subject comprehensively enough that an AI model can trust it as a primary source?"

That means a few specific things:

- Breadth and depth together. Covering 50 subtopics shallowly doesn't work. Neither does one very long article. AI models want to see that you've addressed the full range of questions a user might have about a topic -- including the adjacent, nuanced, and follow-up questions.

- Entity coverage. AI models think in entities (people, places, concepts, relationships between them). Content that clearly defines entities and explains how they relate to each other tends to get cited more often than content that just targets keywords.

- Consistency of expertise signals. If your site covers 10 topics at equal depth, you're probably not authoritative on any of them. Brands that picked a lane and went deep saw better results.

- Freshness within the cluster. A model's training data stops at a cutoff date, but retrieval-augmented systems like Perplexity fetch live web pages when they answer a query. Content that's regularly updated within a topic cluster signals ongoing expertise.

How Topical Map AI approached the problem

Topical Map AI took a specific angle on the authority problem: start with the map, not the content.

The idea is that most brands don't have a clear picture of what their topic cluster actually looks like -- which subtopics they cover, which ones they're missing, and how the pieces connect. Before you can build authority, you need to know what you're building.

The tool generates visual topic maps that show the full landscape of a subject: core topics, supporting topics, related entities, and the gaps between what a brand currently covers and what a comprehensive resource would include. In practice, this meant content teams could see at a glance that they'd published 20 articles about "email marketing" but had nothing on deliverability, list hygiene, or re-engagement sequences -- all topics that ChatGPT regularly pulls from when answering email marketing questions.

That gap-identification function turned out to be more valuable than most teams expected. It's one thing to know you need more content. It's another to see exactly which subtopics are missing and understand why those gaps matter for AI citation.
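At its simplest, gap identification is a set difference between what a complete resource would cover and what you've already published. The sketch below is an illustration under that assumption, not a description of how Topical Map AI works internally; the `TopicMap` class, the `find_gaps` function, and the sample subtopics are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TopicMap:
    """Hypothetical topic map: a core topic plus the subtopics a full resource would cover."""
    core_topic: str
    subtopics: frozenset[str]


def find_gaps(topic_map: TopicMap, published_subtopics: set[str]) -> list[str]:
    """Return mapped subtopics that have no published article, sorted by name."""
    # Normalize case and whitespace so "Subject lines" matches "subject lines".
    covered = {s.strip().lower() for s in published_subtopics}
    return sorted(s for s in topic_map.subtopics if s.lower() not in covered)


# Illustrative data only, loosely based on the email marketing example above.
email_map = TopicMap(
    core_topic="email marketing",
    subtopics=frozenset({
        "subject lines", "segmentation", "deliverability",
        "list hygiene", "re-engagement sequences",
    }),
)
published = {"Subject lines", "Segmentation"}

gaps = find_gaps(email_map, published)
print(gaps)  # ['deliverability', 'list hygiene', 're-engagement sequences']
```

A real tool would need fuzzier matching than exact names (synonyms, overlapping articles), but the output is the same kind of thing: a concrete list of what's missing.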

What MarketMuse brought to the table

MarketMuse has been around longer and takes a more data-driven approach. Its core value in 2025 was helping brands understand content quality relative to the competitive landscape -- not just "do you cover this topic?" but "do you cover it better than the sources AI models currently cite?"

MarketMuse scores content against a topic model built from analyzing what's already ranking and being cited. A page with a low content score for a topic is a page that's likely getting passed over by AI models in favor of more comprehensive sources. A high score means you've covered the topic thoroughly enough to compete.

What made this particularly useful in 2025 was the shift in how AI models select sources. Early AI search systems leaned heavily on domain authority as a proxy for trustworthiness. As these systems matured, they got better at evaluating content quality directly. MarketMuse's approach -- optimizing for topic coverage rather than keyword matching -- aligned well with how AI models actually evaluate sources.

Several content teams reported using MarketMuse's topic briefs to rebuild thin pages that had been ignored by AI models, then seeing those pages start appearing in ChatGPT and Perplexity citations within a few months. The pattern was consistent enough to suggest the tool's scoring methodology was capturing something real about what AI models reward.
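To make the idea of scoring a page against a topic model concrete, here is a deliberately simplified sketch: it treats the topic model as a flat list of terms and scores a page by the fraction of those terms it mentions. This is an assumption for illustration, not MarketMuse's actual methodology, which is not described here; the `coverage_score` function and the sample terms are hypothetical.

```python
import re


def coverage_score(page_text: str, topic_terms: list[str]) -> float:
    """Fraction of topic terms that appear at least once in the page (0.0 to 1.0)."""
    if not topic_terms:
        return 0.0
    text = page_text.lower()
    hits = sum(
        1
        for term in topic_terms
        # Whole-word match so "spf" does not match inside an unrelated word.
        if re.search(r"\b" + re.escape(term.lower()) + r"\b", text)
    )
    return hits / len(topic_terms)


# Hypothetical topic model for a page about email deliverability.
topic_terms = ["deliverability", "spf", "dkim", "bounce rate"]

thin_page = "Email deliverability matters for every campaign."
rebuilt_page = (
    "Deliverability depends on SPF and DKIM records "
    "and on keeping your bounce rate low."
)

print(coverage_score(thin_page, topic_terms))     # 0.25
print(coverage_score(rebuilt_page, topic_terms))  # 1.0
```

Mentioning a term is not the same as covering it well, so a score like this is only a first filter for finding thin pages worth rebuilding.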

The broader toolkit: what else teams were using

Topical Map AI and MarketMuse weren't the only tools in the mix. A few others showed up consistently in how teams approached topical authority for AI search.

Content optimization tools like Clearscope and Surfer SEO helped teams ensure individual pages covered the right semantic territory. These tools analyze top-ranking content and identify the concepts, entities, and related terms that comprehensive coverage requires.

Content brief and research tools like Frase and Content Harmony helped teams build briefs that went beyond keyword targeting to cover the full range of questions a user might have about a topic -- which is exactly what AI models are looking for when they decide whether to cite a source.

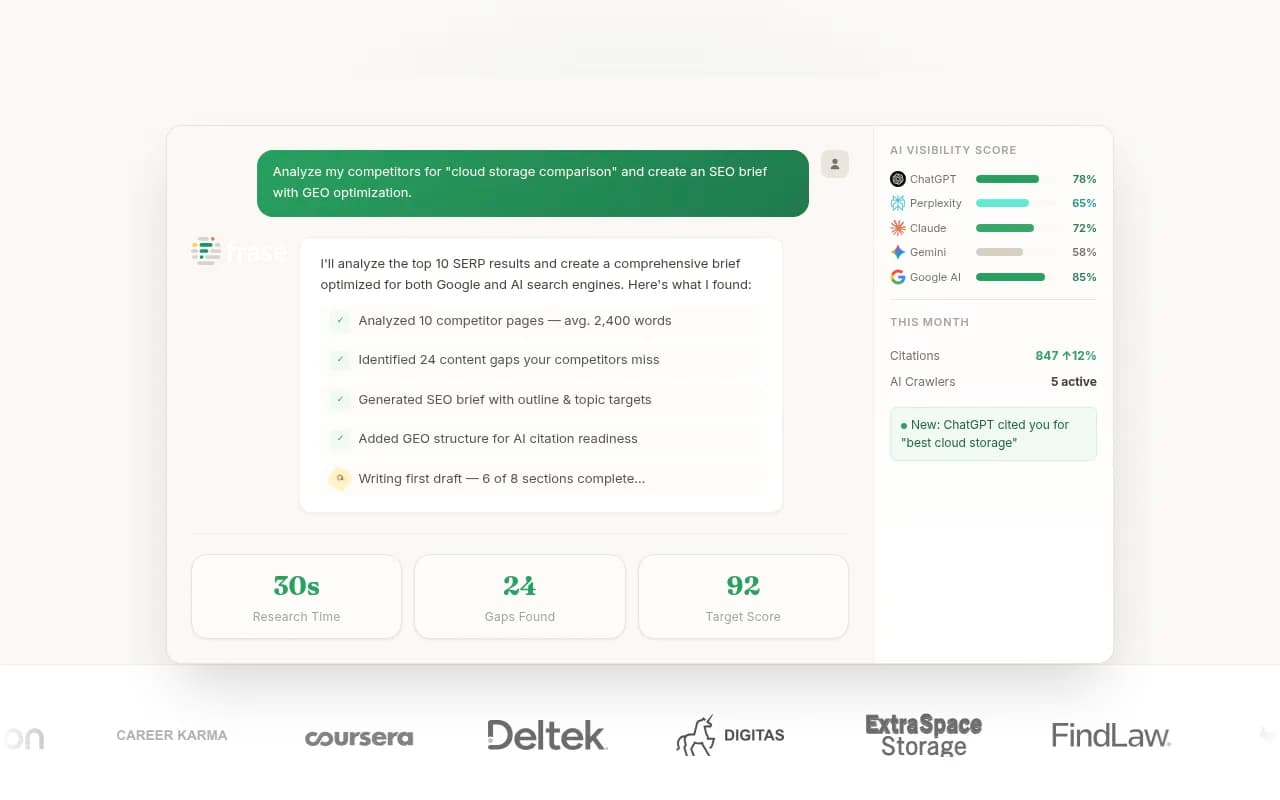

AI writing tools like Jasper and Scalenut helped teams produce content at the volume required to build genuine topic clusters. The key distinction in 2025 was that teams using these tools effectively weren't using them to generate generic content -- they were using them to execute against specific topical maps and briefs, producing content that was genuinely comprehensive rather than just long.

NeuronWriter was another tool that gained traction specifically for its semantic SEO capabilities -- helping teams understand how entities and concepts relate within a topic, which is increasingly how AI models evaluate content.

The gap that most teams missed: tracking whether it worked

Here's where a lot of brands stumbled. They invested in topical authority tools, built out their content clusters, and then... had no reliable way to know whether any of it was actually getting cited by AI models.

Traditional rank trackers show you Google positions. They don't show you whether ChatGPT cited your article when someone asked about your topic. They don't show you which pages Perplexity is pulling from. They don't tell you whether Claude is recommending your brand or a competitor.

This is a real blind spot. You can do everything right from a topical authority standpoint and still have no idea whether it's translating into AI visibility.

Tools built specifically for AI search visibility -- like Promptwatch -- fill this gap. Promptwatch tracks which pages are being cited by ChatGPT, Claude, Perplexity, and other AI models, and shows you how your visibility changes over time as you publish new content. That feedback loop is what turns topical authority work from a hope into a measurable strategy.

Without that kind of tracking, you're building in the dark. You might be doing everything right and not know it. Or you might be missing something important and have no signal to tell you.
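The underlying measurement is simple to picture, even if collecting the data is not. The sketch below assumes you already have a log of AI answers with the URLs each one cited (the log format, the `citation_rate_by_month` function, and the `example.com` records are all made up for illustration) and computes the share of answers per month that cite your domain. It is not a description of how Promptwatch or any other product works.

```python
from collections import defaultdict
from urllib.parse import urlparse


def citation_rate_by_month(responses: list[dict], domain: str) -> dict[str, float]:
    """Share of logged AI answers per month that cite at least one URL on `domain`."""
    totals: dict[str, int] = defaultdict(int)
    cited: dict[str, int] = defaultdict(int)
    for response in responses:
        month = response["date"][:7]  # "YYYY-MM" from an ISO date string
        totals[month] += 1
        hosts = {urlparse(url).hostname or "" for url in response["cited_urls"]}
        # Count the bare domain and any subdomain (www., blog., ...).
        if any(h == domain or h.endswith("." + domain) for h in hosts):
            cited[month] += 1
    return {month: cited[month] / totals[month] for month in sorted(totals)}


# Hypothetical log entries; real data would come from whatever tracking you use.
log = [
    {"date": "2025-03-04", "model": "chatgpt",
     "cited_urls": ["https://other.example.org/guide"]},
    {"date": "2025-03-18", "model": "perplexity",
     "cited_urls": ["https://www.example.com/deliverability"]},
    {"date": "2025-04-02", "model": "chatgpt",
     "cited_urls": ["https://example.com/list-hygiene", "https://other.example.org/x"]},
    {"date": "2025-04-20", "model": "claude",
     "cited_urls": ["https://blog.example.com/re-engagement"]},
]

rates = citation_rate_by_month(log, "example.com")
print(rates)  # {'2025-03': 0.5, '2025-04': 1.0}
```

Plotting that number against your publishing dates is the feedback loop in its most basic form.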

A practical comparison: what each tool does best

| Tool | Primary function | Best for | AI search relevance |
|---|---|---|---|
| Topical Map AI | Topic cluster mapping and gap analysis | Planning content architecture | High -- identifies what's missing |
| MarketMuse | Content quality scoring vs. topic model | Optimizing existing and new content | High -- scores against what AI cites |
| Clearscope | Semantic content optimization | Improving individual pages | Medium-high -- entity and concept coverage |
| Surfer SEO | On-page optimization with NLP analysis | Content writers optimizing drafts | Medium -- keyword and semantic signals |
| Frase | Content briefs and research | Brief creation and SERP analysis | Medium -- question coverage |
| Content Harmony | AI-powered content briefs | Research-heavy brief creation | Medium -- intent and topic coverage |
| NeuronWriter | Semantic SEO and entity optimization | Writers focused on semantic depth | Medium-high -- entity relationships |
| Promptwatch | AI search visibility tracking | Measuring citation performance | Essential -- closes the feedback loop |

What the data actually showed in 2025

A few patterns emerged clearly from teams that tracked their AI visibility through 2025:

Depth beat breadth, but only up to a point. Pages that covered a topic comprehensively -- answering the main question plus common follow-up questions -- consistently outperformed both thin pages and extremely long pages that covered too much ground. The sweet spot was thorough coverage of a specific subtopic, not a 10,000-word guide trying to cover everything.

Entity-rich content got cited more. Pages that clearly defined key entities, explained relationships between them, and used consistent terminology throughout the piece performed better in AI citations than pages that were keyword-optimized but entity-light. This validated the approach tools like MarketMuse and NeuronWriter were pushing.

Topic clusters outperformed standalone articles. A brand with 15 well-connected articles on a topic consistently got cited more than a brand with one excellent article on the same topic. AI models seem to weight the presence of a comprehensive resource hub more heavily than any single piece of content.

Freshness mattered for retrieval-based systems. For AI models that actively crawl the web (Perplexity being the clearest example), content that was recently updated within a topic cluster got cited more often than older content, even when the older content was higher quality. This pushed teams toward regular content maintenance, not just new content production.

How to build topical authority for AI search in 2026

If you're starting or rebuilding your topical authority strategy now, here's what the 2025 data suggests actually works:

Start with the map. Before writing anything, understand the full topic landscape. What are all the subtopics, questions, and entities that belong to your core subject? Tools like Topical Map AI make this visual and systematic. This step is where most teams underinvest.

Audit what you have. Before creating new content, understand what you already have and how it performs. MarketMuse's content audit function is useful here -- it shows you which existing pages have low topic coverage scores and are worth rebuilding before you invest in new content.

Prioritize gaps that AI models actually care about. Not all topic gaps are equal. Some subtopics get asked about constantly in AI search; others are niche. Prompt intelligence tools can help you understand which questions are being asked most often, so you can prioritize the gaps that will have the most impact on your AI visibility.
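One way to picture this prioritization: take your list of gaps and order it by how often each subtopic shows up in a sample of real questions. The sketch below assumes you have such a sample already tagged by subtopic; the `rank_gaps` function and the data are hypothetical, and how you obtain and tag the prompts is outside its scope.

```python
from collections import Counter


def rank_gaps(gaps: list[str], prompt_subtopics: list[str]) -> list[tuple[str, int]]:
    """Order uncovered subtopics by how often they appear in a sample of tagged prompts."""
    counts = Counter(prompt_subtopics)
    # Most-asked first; ties broken alphabetically so the order is stable.
    return sorted(((gap, counts[gap]) for gap in gaps), key=lambda pair: (-pair[1], pair[0]))


# Illustrative data: subtopics you have not covered, and a tagged prompt sample.
gaps = ["deliverability", "list hygiene", "re-engagement sequences"]
sampled_prompts = [
    "deliverability", "deliverability", "list hygiene",
    "deliverability", "segmentation", "list hygiene",
]

priorities = rank_gaps(gaps, sampled_prompts)
print(priorities)
# [('deliverability', 3), ('list hygiene', 2), ('re-engagement sequences', 0)]
```

A gap with zero matching prompts isn't necessarily worthless, but it probably shouldn't be the first article you write.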

Build the cluster systematically. Once you know what to write, execute against the map. The goal is comprehensive coverage of your topic, with each piece of content clearly connected to the others through internal linking and consistent entity usage.
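A quick sanity check on "clearly connected" is whether every article in the cluster can be reached from the pillar page by following internal links. The sketch below assumes you can export your internal links as a page-to-targets mapping; the `unreachable_from_pillar` function and the URLs are hypothetical.

```python
from collections import deque


def unreachable_from_pillar(links: dict[str, list[str]], pillar: str) -> list[str]:
    """Return cluster pages that cannot be reached from the pillar via internal links."""
    seen = {pillar}
    queue = deque([pillar])
    # Breadth-first walk over internal links, starting at the pillar page.
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            # Ignore links that point outside the cluster.
            if target in links and target not in seen:
                seen.add(target)
                queue.append(target)
    return sorted(page for page in links if page not in seen)


# Illustrative cluster: each key is a page, each value the pages it links to.
cluster_links = {
    "/email-marketing": ["/deliverability", "/list-hygiene"],
    "/deliverability": ["/email-marketing", "/list-hygiene"],
    "/list-hygiene": ["/email-marketing"],
    "/re-engagement": ["/email-marketing"],  # links out, but nothing links to it
}

orphans = unreachable_from_pillar(cluster_links, "/email-marketing")
print(orphans)  # ['/re-engagement']
```

Here the re-engagement article links back to the pillar, but no page in the cluster links to it, so a reader or crawler starting from the hub would never find it.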

Measure citation performance, not just rankings. This is the step most teams skip. Use a tool that tracks AI citations -- not just Google positions -- so you can see whether your topical authority work is actually translating into AI search visibility. Without this, you're guessing.

Iterate based on what you see. AI search visibility changes as models update and as competitors publish new content. The brands that maintained strong AI visibility through 2025 treated it as an ongoing process, not a one-time project.

The bottom line

Topical authority tools like Topical Map AI and MarketMuse didn't just influence which sources ChatGPT cited in 2025 -- they changed how serious content teams think about content strategy entirely. The shift from "what keywords should we target?" to "what does comprehensive expertise on this topic look like?" is real, and the tools that support that shift proved their value.

The brands that combined strong topical authority work with actual AI visibility tracking -- closing the loop between content creation and citation performance -- are the ones that came out ahead. The ones that treated topical authority as a content production exercise without measuring the AI search outcome are still guessing.

In 2026, that gap between the teams that measure and the teams that don't will only get wider.