Key takeaways

- AI crawlers like GPTBot, ClaudeBot, and PerplexityBot don't execute JavaScript -- content that only appears after client-side rendering is invisible to them, and most traditional SEO tools won't flag this for you.

- Google Search Console and Screaming Frog remain essential for technical health, but neither tells you whether ChatGPT is actually citing your pages.

- Schema markup, clean site structure, and static HTML rendering are the three technical factors that most directly influence AI citation rates.

- Monitoring AI crawler activity in your server logs is one of the fastest ways to diagnose why an LLM is skipping your content.

- A complete stack in 2026 combines a traditional crawl tool, a rendering/schema layer, and an AI visibility platform that closes the loop between technical fixes and actual citation results.

Why "ranking for ChatGPT" is a different problem than ranking for Google

When most SEO teams talk about technical health, they mean Core Web Vitals, crawl budget, canonical tags, and redirect chains. All of that still matters for Google. But ChatGPT, Claude, Perplexity, and other LLMs have a completely different relationship with your website.

These AI systems don't crawl and render the web the way Googlebot does. They collect content for model training and fetch pages for retrieval when answering questions, and the bots they send -- GPTBot, ClaudeBot, PerplexityBot -- differ from Googlebot in one critical way: they don't execute JavaScript. Vercel's analysis of crawler traffic from OpenAI, Anthropic, Meta, ByteDance, and Perplexity found that none of these crawlers rendered JavaScript. If your content lives inside a React or Next.js component that only renders client-side, those AI crawlers see an essentially empty page.

That's the core technical problem. And it's one that most traditional SEO audit tools weren't designed to surface.

This guide covers the tools that actually help -- both the established ones that handle the fundamentals, and the newer ones built specifically for AI crawler readiness.

The three technical failures that block AI citations

Before getting into tools, it's worth being specific about what you're trying to fix. There are three technical issues that consistently block AI models from reading and citing your content:

JavaScript-rendered content. If your page content only appears after JavaScript executes, AI crawlers miss it entirely. This includes dynamically loaded FAQs, product descriptions pulled from a CMS via API, and any content that requires user interaction to display.
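A quick way to confirm this failure on your own site is to fetch a page without running any JavaScript and check whether its key content is in the response. The sketch below does that with Python's standard library. The function names and the generic User-Agent string are illustrative assumptions, and a plain HTTP GET only approximates what any particular AI crawler receives.

```python
from html.parser import HTMLParser
from urllib.request import Request, urlopen


class _TextExtractor(HTMLParser):
    """Collects visible text nodes, skipping <script>, <style>, and <template> contents."""

    SKIP = {"script", "style", "template"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def visible_text(html: str) -> str:
    parser = _TextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


def fetch_raw_html(url: str, user_agent: str = "Mozilla/5.0 (compatible; raw-html-check)") -> str:
    """Plain HTTP GET with no JavaScript execution (the User-Agent is a placeholder)."""
    request = Request(url, headers={"User-Agent": user_agent})
    with urlopen(request, timeout=10) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")


def missing_phrases(raw_html: str, phrases: list[str]) -> list[str]:
    """Return the phrases that do not appear in the page's server-delivered text."""
    text = visible_text(raw_html).lower()
    return [p for p in phrases if p.lower() not in text]
```

Call `missing_phrases(fetch_raw_html(url), [...])` with a few sentences you know should be on the page; any phrase it returns is content a non-rendering crawler likely can't see.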

Weak site structure. AI search systems typically retrieve and cite individual passages rather than whole pages. Pages without a clear hierarchy -- logical headings, semantic relationships between topics, a clear topical focus -- are harder for these systems to parse and attribute correctly.
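Headings are the easiest part of that structure to check mechanically. This minimal sketch flags pages that don't have exactly one h1 or that skip heading levels (for example, an h2 followed directly by an h4). Treat it as a heuristic based on common HTML conventions, not a documented requirement of any AI system; `heading_issues` is a hypothetical helper name.

```python
from html.parser import HTMLParser


class _HeadingCollector(HTMLParser):
    """Records the level (1-6) of every h1-h6 start tag in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_issues(html: str) -> list[str]:
    collector = _HeadingCollector()
    collector.feed(html)
    collector.close()
    levels = collector.levels
    issues = []
    h1_count = levels.count(1)
    if h1_count != 1:
        issues.append(f"expected exactly one h1, found {h1_count}")
    # Going deeper by more than one level at a time breaks the outline.
    for prev, cur in zip(levels, levels[1:]):
        if cur > prev + 1:
            issues.append(f"skipped level: h{prev} -> h{cur}")
    return issues
```

Moving back up the outline (an h3 followed by an h2) is deliberately allowed; only jumps downward are reported.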

Missing or broken schema markup. Structured data helps AI models map your brand to a category, understand what your pages are about, and connect your content to specific use cases. Without it, you're relying on the model to infer everything from raw text.
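For reference, here is one way to emit Organization and Article markup as JSON-LD using schema.org vocabulary. The property selection is a minimal assumption rather than a complete or required set, and the function and its parameters are hypothetical; run the output through the Schema.org validator or Google's Rich Results Test before shipping it.

```python
import json


def article_jsonld(org_name, org_url, logo_url, headline, author_name, date_published, page_url):
    """Build a JSON-LD <script> tag linking an Organization and an Article (minimal property set)."""
    org_id = f"{org_url}#organization"
    data = {
        "@context": "https://schema.org",
        "@graph": [
            {"@type": "Organization", "@id": org_id, "name": org_name, "url": org_url, "logo": logo_url},
            {
                "@type": "Article",
                "headline": headline,
                "author": {"@type": "Person", "name": author_name},
                "datePublished": date_published,  # ISO 8601 date, e.g. "2026-01-15"
                "mainEntityOfPage": page_url,
                "publisher": {"@id": org_id},  # reference the Organization node above
            },
        ],
    }
    # Escape "</" so a value containing "</script>" cannot close the tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'
```

Using a single `@graph` with an `@id` reference lets the Article point at the same Organization node instead of repeating it.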

Fix these three things and you've done more for your AI citation rate than most brands have.

Core technical SEO tools (still essential in 2026)

Google Search Console

Free, authoritative, and irreplaceable. Google Search Console gives you ground truth on what Google has indexed, which pages have Core Web Vitals issues, and where crawl errors are occurring. It won't tell you anything about ChatGPT or Claude, but it's the baseline. If Google can't index your pages properly, AI crawlers probably can't either.

The URL Inspection tool is particularly useful for diagnosing rendering issues -- you can see the HTML Googlebot rendered when it visited a page. Because Googlebot executes JavaScript, treat this as a best-case proxy: content that appears there may still be missing for AI crawlers that only read the raw HTML.

Screaming Frog SEO Spider

At $279/year, Screaming Frog is the most cost-effective developer-grade crawl tool available. It's been the industry standard for technical audits for years, and in 2026 it's still the right tool for:

- Identifying broken links, redirect chains, and orphaned pages

- Validating schema markup at scale

- Auditing hreflang implementation for multilingual sites

- Checking canonical tag consistency

- Rendering pages with JavaScript to compare what a crawler sees vs. what a browser renders

That last feature is important. Screaming Frog can render pages using a headless Chrome instance, which lets you compare the rendered DOM against the raw HTML. If there's a big difference, that's a signal that AI crawlers are missing content.
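If you save both the raw and rendered HTML for your pages, the comparison can be scripted. This sketch counts visible words in each version and flags pages where the raw HTML holds less than a chosen share of the rendered text. The word-count measure and the 0.7 threshold are arbitrary assumptions to tune for your site, not a Screaming Frog feature.

```python
from html.parser import HTMLParser


class _WordCounter(HTMLParser):
    """Counts words in text nodes outside <script> and <style>."""

    def __init__(self):
        super().__init__()
        self._in_skipped = 0
        self.words = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._in_skipped += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._in_skipped:
            self._in_skipped -= 1

    def handle_data(self, data):
        if not self._in_skipped:
            self.words += len(data.split())


def word_count(html: str) -> int:
    counter = _WordCounter()
    counter.feed(html)
    counter.close()
    return counter.words


def raw_coverage(raw_html: str, rendered_html: str) -> float:
    """Raw word count as a share of rendered word count, capped at 1.0."""
    rendered = word_count(rendered_html)
    if rendered == 0:
        return 1.0
    return min(word_count(raw_html) / rendered, 1.0)


def flag_js_dependent(pages: dict[str, tuple[str, str]], threshold: float = 0.7) -> list[str]:
    """pages maps URL -> (raw_html, rendered_html); returns URLs below the threshold."""
    return sorted(url for url, (raw, rendered) in pages.items() if raw_coverage(raw, rendered) < threshold)
```

Word counts are a blunt measure (a page can have the same length with different content), so use the flagged list as a shortlist for manual review.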

It doesn't have an AI citation dashboard or LLM visibility tracking, but for diagnosing the technical foundations that affect AI crawlability, it's still the right starting point.

Botify

Botify sits at the enterprise end of the crawl tool spectrum. It goes beyond what Screaming Frog does by adding log file analysis, JavaScript rendering diagnostics, and crawl budget optimization at scale. For large sites with hundreds of thousands of pages, Botify's ability to correlate crawl data with actual traffic is genuinely useful.

It's also one of the few traditional SEO platforms that has started building AI search visibility features into its product, making it a reasonable choice for enterprise teams that want to consolidate tooling.

Semrush and Ahrefs

Both platforms offer site audit functionality that covers the standard technical checklist: broken pages, duplicate content, missing meta tags, slow pages, and so on. Semrush starts at $139.95/month; Ahrefs from $108/month billed annually.

The honest assessment: their site audit features are solid but not dramatically better than Screaming Frog for pure technical diagnosis. Where they add value is in combining technical audits with keyword research, backlink analysis, and competitive intelligence in one interface. If you're already paying for one of these platforms for keyword research, use the site audit too. But don't buy either one primarily for technical SEO.

Neither platform provides LLM crawler diagnostics, and AI citation tracking is limited at best (for Ahrefs, it's confined to Brand Radar).

The AI crawler readiness layer: what traditional tools miss

Here's where the gap opens up. Traditional technical SEO tools tell you whether Google can crawl your site. They don't tell you:

- Which AI crawlers are visiting your pages and how often

- Whether those crawlers are encountering errors

- Which pages are actually being cited in ChatGPT, Claude, or Perplexity responses

- What content gaps are causing AI models to cite competitors instead of you

For that, you need a different category of tool.
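Before adopting a dedicated tool, you can roughly answer the first two questions from your own access logs. The sketch below assumes the common Apache/Nginx "combined" log format and matches bots by User-Agent substring, so adjust the regex to your own format. User-Agent strings can be spoofed; vendors such as OpenAI publish IP ranges you can use to verify that the traffic is genuine.

```python
import re
from collections import Counter, defaultdict

# Combined Log Format: host ident user [time] "request" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'^(?P<host>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] "(?P<method>\S+) (?P<path>\S+)[^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

# Assumed bot names, matched case-insensitively as substrings of the User-Agent.
AI_BOTS = ("GPTBot", "OAI-SearchBot", "ChatGPT-User", "ClaudeBot", "PerplexityBot")


def summarize_ai_crawlers(lines):
    """Return (hits per bot, {bot: Counter of (path, status) for 4xx/5xx responses})."""
    hits = Counter()
    errors = defaultdict(Counter)
    for line in lines:
        m = LOG_LINE.match(line)
        if not m:
            continue  # skip lines in other formats
        agent = m["agent"].lower()
        bot = next((b for b in AI_BOTS if b.lower() in agent), None)
        if bot is None:
            continue
        hits[bot] += 1
        status = int(m["status"])
        if status >= 400:
            errors[bot][(m["path"], status)] += 1
    return hits, errors
```

Feeding it a day of logs (for example, `summarize_ai_crawlers(open("access.log"))`) shows which AI bots arrive at all and which URLs return errors to them.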

Prerender.io

Prerender.io solves one specific but critical problem: it pre-renders JavaScript-heavy pages into static HTML that AI crawlers can actually read. If your site is built on a JavaScript framework and you can't do server-side rendering at the infrastructure level, Prerender is the fastest fix.

It sits between your server and incoming crawlers, detects bot user agents (including GPTBot, ClaudeBot, and PerplexityBot), and serves a pre-rendered static version of the page instead of the JavaScript bundle. Your users still get the full dynamic experience; the crawlers get readable HTML.
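Conceptually, this kind of dynamic rendering is a user-agent check in front of your app. The WSGI sketch below is a hypothetical illustration of the idea, not Prerender.io's actual middleware: requests whose User-Agent contains one of the listed tokens get a stored static snapshot, and everyone else gets the JavaScript app shell. Keep snapshots equivalent to what users see, since serving crawlers different content can be treated as cloaking.

```python
from typing import Callable, Iterable, Mapping, Optional

# Hypothetical token list; extend it as vendors publish new user agents.
AI_CRAWLER_TOKENS = ("gptbot", "oai-searchbot", "chatgpt-user", "claudebot", "perplexitybot")


def is_ai_crawler(user_agent: Optional[str]) -> bool:
    ua = (user_agent or "").lower()
    return any(token in ua for token in AI_CRAWLER_TOKENS)


def make_app(snapshots: Mapping[str, str], spa_shell: str) -> Callable:
    """WSGI app: prerendered snapshot for AI crawlers when one exists, JS shell otherwise."""

    def app(environ: dict, start_response: Callable) -> Iterable[bytes]:
        path = environ.get("PATH_INFO", "/")
        if is_ai_crawler(environ.get("HTTP_USER_AGENT")) and path in snapshots:
            body = snapshots[path]
        else:
            body = spa_shell
        start_response("200 OK", [("Content-Type", "text/html; charset=utf-8")])
        return [body.encode("utf-8")]

    return app
```

For a local experiment, `wsgiref.simple_server.make_server("", 8000, make_app(...))` can serve it; a hosted prerendering service replaces the in-memory `snapshots` dict with snapshots it generates and refreshes.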

This is one of the most direct technical interventions you can make for AI crawler readiness.

Yoast SEO (for WordPress sites)

If your site runs on WordPress, Yoast SEO handles a lot of the schema markup and technical hygiene that affects AI readability. It generates structured data for articles, breadcrumbs, and organization entities automatically, and its content analysis gives writers real-time feedback on readability and keyword use.

It won't solve JavaScript rendering issues or give you AI citation data, but for WordPress sites it's the easiest way to ensure your schema markup is consistently implemented.

AIOSEO

Another strong WordPress option, AIOSEO has added AI-focused features in recent versions, including schema templates for FAQ, HowTo, and Product markup -- all of which help AI models understand your content structure. It also has a TruSEO score that evaluates on-page optimization in real time.

Closing the loop: AI visibility platforms

Technical SEO gets your content readable by AI crawlers. But knowing whether it's actually working -- whether ChatGPT is citing your pages, which competitors are getting cited instead of you, and what content you're missing -- requires a different kind of tool.

This is where AI visibility platforms come in. Most of them are monitoring dashboards that show you citation data. A smaller number actually help you act on it.

What to look for

The most useful AI visibility platforms in 2026 do at least three things:

- Track which prompts trigger citations of your brand vs. competitors across multiple LLMs

- Show you which pages are being cited and flag technical issues affecting those pages

- Help you create content that fills the gaps -- not just identify that gaps exist

Platforms that only do the first of these are useful for reporting but won't move your numbers.
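Under the hood, citation tracking comes down to simple ratios. Assuming results shaped as (model, prompt, cited domains), a per-model citation rate is the share of tracked prompts whose answer cites your domain, and a gap is a prompt where a competitor is cited and you are not. The data shape and metric definitions here are illustrative assumptions, not how any particular platform computes its numbers.

```python
from collections import defaultdict


def citation_rates(results, domain):
    """results: iterable of (model, prompt, cited_domains). Returns {model: share of prompts citing domain}."""
    tracked = defaultdict(set)
    cited = defaultdict(set)
    for model, prompt, cited_domains in results:
        tracked[model].add(prompt)
        if domain in cited_domains:
            cited[model].add(prompt)  # counts a prompt once even if several runs cite you
    return {model: len(cited[model]) / len(prompts) for model, prompts in tracked.items()}


def gap_prompts(results, domain, competitor):
    """Sorted, de-duplicated (model, prompt) pairs where the competitor is cited and the domain is not."""
    return sorted({
        (model, prompt)
        for model, prompt, cited_domains in results
        if competitor in cited_domains and domain not in cited_domains
    })
```

The gap list is the input for the third capability above: each pair is a prompt where content work could change which source gets cited.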

Promptwatch

Promptwatch is one of the few platforms that covers all three steps. Its AI Crawler Logs feature is particularly relevant here: it gives you real-time logs of GPTBot, ClaudeBot, PerplexityBot, and other AI crawlers hitting your site -- which pages they read, what errors they encounter, and how frequently they return. That's the kind of data that connects technical SEO decisions to actual AI citation outcomes.

Beyond the crawler logs, Promptwatch tracks citation rates across 10 AI models, shows you which competitor pages are getting cited for prompts you're not appearing in, and has a built-in content generation tool that creates articles engineered to get cited -- grounded in its database of over 880 million analyzed citations.

Other AI visibility tools worth knowing

Several other platforms in this space are worth a look depending on your budget and use case:

Otterly.AI is a more affordable entry point for teams that primarily need citation monitoring without the content generation layer.

Profound targets enterprise teams with deeper analytics and multi-stakeholder reporting.

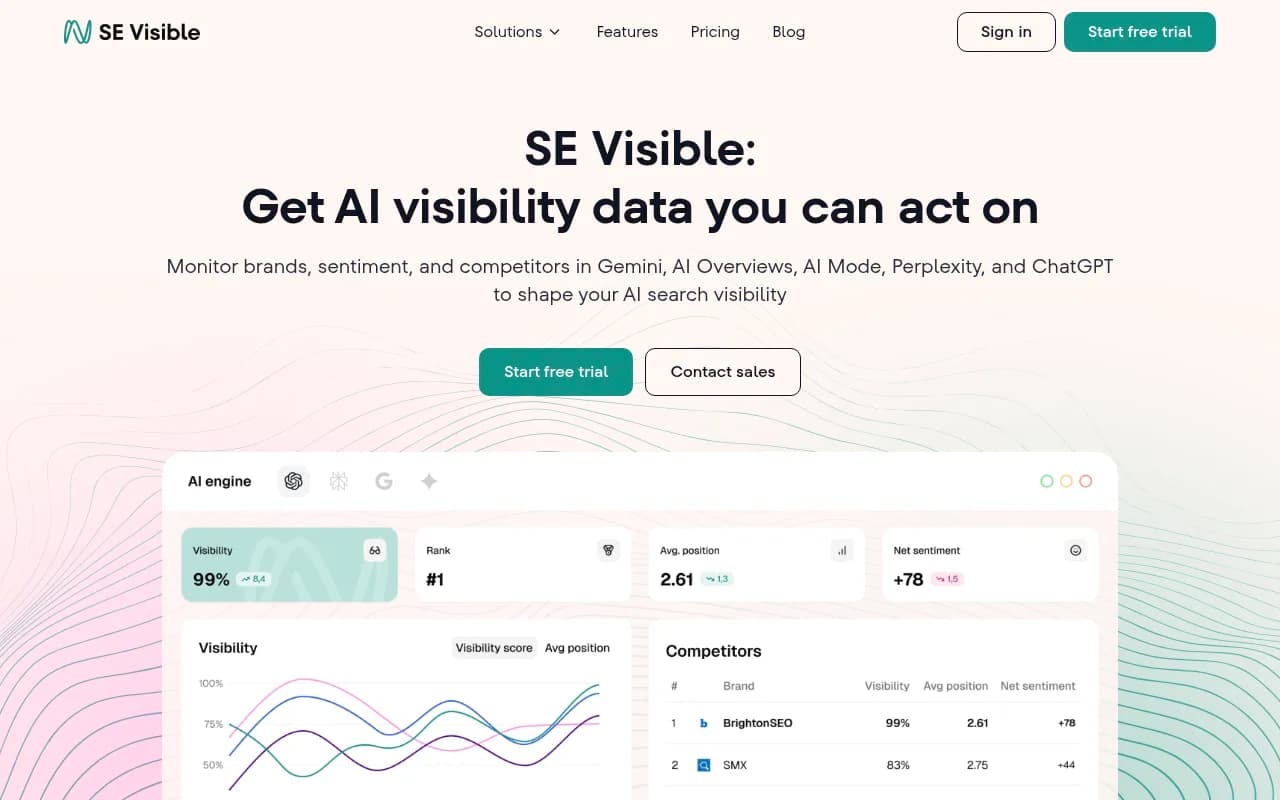

SE Ranking's AI visibility module (SE Visible) is a reasonable option if you're already using SE Ranking for traditional SEO and want to add AI citation tracking without switching platforms.

Botify (mentioned above) is worth revisiting here -- its log file analysis can surface AI crawler activity if you configure it correctly, making it a bridge between traditional technical SEO and AI visibility.

Comparison: technical SEO tools for AI crawler readiness

| Tool | Crawl audit | JS rendering | Schema validation | AI crawler logs | AI citation tracking | Price |
|---|---|---|---|---|---|---|
| Google Search Console | Yes | Partial | No | No | No | Free |
| Screaming Frog | Yes | Yes (headless Chrome) | Yes | No | No | $279/yr |
| Botify | Yes | Yes | Yes | Partial | Limited | Enterprise |
| Semrush | Yes | No | Partial | No | No | $139.95/mo+ |
| Ahrefs | Yes | No | No | No | Brand Radar only | $108/mo+ |
| Prerender.io | No | Yes (serves static HTML) | No | No | No | Usage-based |
| Yoast SEO | No | No | Yes (WordPress) | No | No | Free/Premium |
| Promptwatch | No | No | No | Yes | Yes (10 LLMs) | $99/mo+ |
| Otterly.AI | No | No | No | No | Yes | Lower tier |
| Profound | No | No | No | No | Yes | Enterprise |

Building a practical stack in 2026

You don't need every tool in this guide. Here's how to think about it by team size and budget:

Small teams and solo SEOs

Start with Google Search Console (free) and Screaming Frog ($279/year). Between the two, you can diagnose most technical issues affecting both Google and AI crawlers. Add Yoast or AIOSEO if you're on WordPress for schema coverage.

For AI visibility, Otterly.AI or Promptwatch's Essential plan ($99/month) gives you enough citation tracking to know whether your technical fixes are translating into actual LLM appearances.

Mid-size marketing teams

The same core stack applies, but add Prerender.io if your site is JavaScript-heavy -- this is the single highest-leverage technical fix for AI crawler readiness. Upgrade to Promptwatch's Professional plan ($249/month) to get the AI crawler logs, which let you see exactly which bots are visiting which pages and where they're hitting errors.

Pair that with a content optimization tool like Surfer SEO or Clearscope to ensure the pages AI crawlers can now read are actually well-optimized.

Enterprise teams

At this scale, Botify makes sense for crawl management and log analysis. Combine it with Promptwatch at the Business tier or above for full AI visibility coverage -- crawler logs, citation tracking, content gap analysis, and traffic attribution that connects AI citations to actual revenue.

The robots.txt question

One thing worth addressing directly: should you block AI crawlers in your robots.txt?

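Some brands have done this, particularly over training-data concerns. But be careful about which bots you block. AI vendors increasingly run separate crawlers for different purposes; OpenAI, for example, documents GPTBot (training), OAI-SearchBot (search), and ChatGPT-User (user-initiated fetches). Blocking the retrieval-side agents keeps your content out of real-time responses, which means you won't appear in ChatGPT or Claude answers even when someone asks a question your content directly answers.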

The better approach is to allow AI crawlers on your content pages while being selective about what you expose. Block thin pages, duplicate content, and internal search results -- the same things you'd block Googlebot from wasting crawl budget on. Let the crawlers reach your substantive content.
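You can check what your current robots.txt allows with Python's built-in `urllib.robotparser`. The agent list below is an assumption to extend as vendors publish new bots. Note that this parser applies rules in file order (first match wins), which can differ from the longest-match behavior described by Google and RFC 9309 when Allow and Disallow rules overlap, so treat its output as a first pass.

```python
from urllib.robotparser import RobotFileParser

# Assumed list of AI user-agent tokens; check each vendor's documentation for current names.
AI_AGENTS = ["GPTBot", "OAI-SearchBot", "ChatGPT-User", "ClaudeBot", "PerplexityBot"]


def ai_access_report(robots_txt: str, urls: list[str]) -> dict[str, dict[str, bool]]:
    """Map each AI agent to {url: allowed?} under the given robots.txt content."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: {url: parser.can_fetch(agent, url) for url in urls} for agent in AI_AGENTS}
```

To check a live file instead of a string, `RobotFileParser` also supports `set_url("https://example.com/robots.txt")` followed by `read()`.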

What to prioritize first

If you're starting from scratch on AI crawler readiness, here's the order that makes the most practical sense:

- Run a Screaming Frog crawl and compare rendered vs. raw HTML for your most important pages. Any page where the rendered version has significantly more content than the raw HTML is a page AI crawlers are probably missing.

- Check your robots.txt to confirm you're not accidentally blocking GPTBot, ClaudeBot, PerplexityBot, or retrieval agents such as OAI-SearchBot and ChatGPT-User.

- Audit your schema markup. At minimum, you want Organization, WebPage, and Article schema on your key pages. FAQ schema on pages that answer specific questions can be particularly effective for AI citation.

- If you're on a JavaScript framework without server-side rendering, implement Prerender.io or move to SSR. This is the highest-impact technical change you can make for AI crawler readiness.

- Set up AI citation tracking so you can measure whether your technical fixes are working. Without this, you're flying blind.
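For the schema audit step above, a small script can pull every JSON-LD block from a page's HTML and report which required types are missing or unparseable. This is a minimal sketch: it only reads `application/ld+json` scripts (not microdata or RDFa), counts nested types such as a publisher Organization as present, and uses hypothetical helper names. Add "FAQPage" to `required` for pages that answer specific questions.

```python
import json
from html.parser import HTMLParser


class _JsonLdExtractor(HTMLParser):
    """Collects the raw text of every <script type="application/ld+json"> element."""

    def __init__(self):
        super().__init__()
        self._capturing = False
        self._buf = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and (dict(attrs).get("type") or "").lower() == "application/ld+json":
            self._capturing = True
            self._buf = []

    def handle_endtag(self, tag):
        if tag == "script" and self._capturing:
            self.blocks.append("".join(self._buf))
            self._capturing = False

    def handle_data(self, data):
        if self._capturing:
            self._buf.append(data)


def _collect_types(node, found):
    """Walk dicts and lists, adding every string @type value to `found`."""
    if isinstance(node, list):
        for item in node:
            _collect_types(item, found)
    elif isinstance(node, dict):
        t = node.get("@type")
        if isinstance(t, str):
            found.add(t)
        elif isinstance(t, list):
            found.update(x for x in t if isinstance(x, str))
        for value in node.values():
            if isinstance(value, (dict, list)):
                _collect_types(value, found)


def schema_types(html):
    parser = _JsonLdExtractor()
    parser.feed(html)
    parser.close()
    found, errors = set(), []
    for i, block in enumerate(parser.blocks):
        try:
            _collect_types(json.loads(block), found)
        except json.JSONDecodeError as exc:
            errors.append(f"block {i}: invalid JSON ({exc.msg})")
    return found, errors


def missing_types(html, required=("Organization", "WebPage", "Article")):
    found, errors = schema_types(html)
    return sorted(set(required) - found), errors
```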

The technical foundations haven't changed that much -- clean HTML, good structure, proper schema. What's changed is that the stakes for getting them right now extend beyond Google into every AI model your potential customers are using to make decisions.