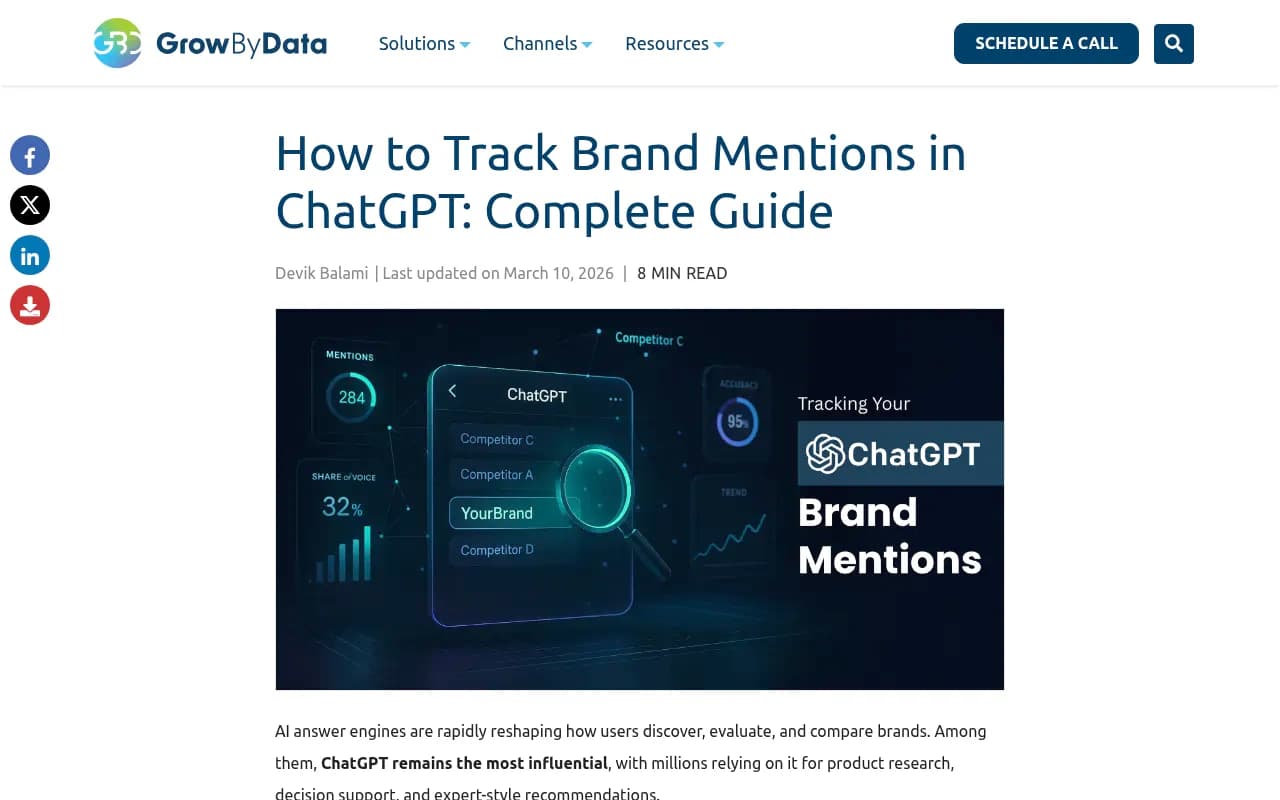

Key takeaways

- Manual prompt testing is a starting point, but it doesn't scale -- you need a systematic tracking setup to measure progress over time

- The metrics that matter most are share of voice, citation frequency, sentiment, and which specific pages AI models are pulling from

- Tracking ChatGPT alone isn't enough -- Perplexity, Claude, Gemini, and Google AI Overviews all drive traffic independently

- Connecting AI visibility to actual website traffic (via server logs, UTM parameters, or GSC) is what separates real measurement from vanity metrics

- Dedicated GEO (generative engine optimization) platforms close the loop between monitoring and action: the best ones tell you what's missing and help you fix it

You've been publishing content, building citations, getting mentioned on third-party sites -- all the things people say will get your brand into ChatGPT's answers. But how do you actually know if any of it is working?

This is the question most marketing teams can't answer right now. They're doing the work but measuring nothing, or measuring the wrong things. A few people in SEO communities have figured out the basics -- manually testing prompts, logging when their brand appears or disappears -- but that approach breaks down fast when you're tracking dozens of prompts across multiple AI models.

This guide walks through a proper tracking system: what to measure, how to measure it, which tools are worth using, and how to connect AI visibility data to outcomes your business actually cares about.

Why tracking ChatGPT brand mentions is harder than it sounds

Traditional SEO tracking is relatively straightforward. You pick keywords, check rankings, watch traffic in Google Search Console. The feedback loop is tight.

AI search doesn't work that way. ChatGPT doesn't have a "position 1" you can monitor. Responses vary based on how the question is phrased, who's asking, what model version is running, and what's been indexed recently. The same prompt can produce different answers on the same day.

There's also no native analytics layer. ChatGPT doesn't tell you when it mentioned your brand or how many users saw that mention. You're working from the outside in -- querying the model, observing outputs, and building your own data layer on top.

That's why most brands are flying blind. They assume their content strategy is working because they're publishing more, but they have no baseline, no trend data, and no way to attribute traffic to AI-driven discovery.

The good news: the tooling has matured significantly in 2026. You don't have to build this from scratch.

Step 1: Define what you're actually tracking

Before touching any tool, get specific about what you want to know. Vague goals produce useless data.

The core questions to answer:

- Which prompts should trigger a mention of my brand? (e.g. "best project management software for remote teams")

- Who are my top 5 competitors, and which prompts are they appearing in that I'm not?

- Which AI models matter most for my audience? (ChatGPT is the obvious one, but Perplexity drives significant referral traffic for research-heavy queries)

- What does a "good" mention look like? Being named in a list of 10 is very different from being the primary recommendation

Start by building a prompt list. Think about the questions your customers actually ask when evaluating solutions like yours. These fall into a few categories:

- Category-level queries: "What's the best [category] tool?"

- Problem-based queries: "How do I solve [specific problem]?"

- Comparison queries: "[Your brand] vs [Competitor]"

- Use-case queries: "Best [category] for [specific use case or persona]"

Aim for 30-50 prompts to start. That's enough to get meaningful data without becoming unmanageable.
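A prompt list is easier to maintain if you generate it from the four query types above instead of typing each variant by hand. Below is a minimal Python sketch. The brand, competitor, problem, and persona names are hypothetical placeholders, and the template wording is only an illustration.

```python
# Hypothetical inputs: replace with your own brand, category, competitors, etc.
BRAND = "Acme PM"
CATEGORY = "project management software"
COMPETITORS = ["Rival One", "Rival Two"]
PROBLEMS = ["keep a remote team on schedule"]
USE_CASES = ["remote teams", "agencies"]


def build_prompt_list():
    """Expand the four query types into (query_type, prompt) pairs."""
    prompts = [("category", f"What's the best {CATEGORY}?")]
    prompts += [("problem", f"How do I {p}?") for p in PROBLEMS]
    prompts += [("comparison", f"{BRAND} vs {c}") for c in COMPETITORS]
    prompts += [("use_case", f"Best {CATEGORY} for {u}") for u in USE_CASES]
    # dict.fromkeys drops exact duplicates while preserving order.
    return list(dict.fromkeys(prompts))


if __name__ == "__main__":
    for query_type, prompt in build_prompt_list():
        print(f"{query_type:<11} {prompt}")
```

Adding more competitors, problems, and personas gets you into the 30-50 range quickly. Review the output by hand and drop any prompt a real customer would never type.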

Step 2: Establish a baseline

You can't measure improvement without knowing where you started. Run your prompt list across the AI models you care about and record:

- Whether your brand appears at all

- Where in the response it appears (first mention, buried in a list, not mentioned)

- What's said about your brand (accurate, inaccurate, positive, neutral, negative)

- Which competitors appear in the same response

- Whether your website is cited as a source
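Part of this recording can be automated once you have the response text, whether you copied it by hand or exported it from a tool. The sketch below uses simple case-insensitive name matching to record presence, position, competitors, and a rough citation check. The function, brand, and domain names are hypothetical. Sentiment and accuracy still need a human read. Exact-name matching will miss misspellings and abbreviations, and the word-boundary pattern assumes names start and end with a letter or digit.

```python
import re


def analyze_response(text, brand, competitors, domain=None):
    """Summarize one AI response: is `brand` named, where, alongside whom, and is `domain` cited."""
    first_seen = {}
    for name in [brand, *competitors]:
        # \b...\b matches the whole name only; IGNORECASE tolerates capitalization changes.
        match = re.search(r"\b" + re.escape(name) + r"\b", text, flags=re.IGNORECASE)
        if match:
            first_seen[name] = match.start()
    # Order brands by where they first appear in the response (1 = named first).
    ordered = sorted(first_seen, key=first_seen.get)
    return {
        "brand_mentioned": brand in first_seen,
        "position": ordered.index(brand) + 1 if brand in first_seen else None,
        "competitors_mentioned": [n for n in ordered if n != brand],
        # Rough proxy: only works when the response text includes source URLs.
        "source_cited": bool(domain) and domain.lower() in text.lower(),
    }


if __name__ == "__main__":
    answer = ("For remote teams, Rival One is popular. Acme PM and Rival Two are also "
              "worth a look (source: acmepm.example/remote).")
    print(analyze_response(answer, "Acme PM", ["Rival One", "Rival Two"], "acmepm.example"))
```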

Do this manually first if you're just starting out. It's tedious but it forces you to actually read the responses, which is valuable. You'll notice patterns -- certain prompt types where you consistently appear, others where a competitor dominates every time.

Document everything in a spreadsheet. Columns for: prompt, model, date, brand mentioned (Y/N), position, sentiment, competitors mentioned, source cited. This becomes your baseline.
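If you keep the baseline in a CSV file instead of a hosted spreadsheet, you can append each week's observations with a few lines of Python. The column names below mirror the spreadsheet columns just listed. The file location and example values are hypothetical.

```python
import csv
import os
import tempfile
from datetime import date

# Columns mirror the baseline spreadsheet described above.
FIELDS = ["prompt", "model", "date", "brand_mentioned", "position",
          "sentiment", "competitors_mentioned", "source_cited"]


def append_observations(path, rows):
    """Append observation dicts to a CSV file, writing the header only for a new or empty file."""
    needs_header = not os.path.exists(path) or os.path.getsize(path) == 0
    with open(path, "a", newline="", encoding="utf-8") as f:
        # Missing keys are written as empty cells; unknown keys raise ValueError.
        writer = csv.DictWriter(f, fieldnames=FIELDS)
        if needs_header:
            writer.writeheader()
        writer.writerows(rows)


if __name__ == "__main__":
    demo_path = os.path.join(tempfile.mkdtemp(), "baseline.csv")  # hypothetical location
    append_observations(demo_path, [{
        "prompt": "best project management software for remote teams",
        "model": "chatgpt",
        "date": date.today().isoformat(),
        "brand_mentioned": "Y",
        "position": 3,
        "sentiment": "neutral",
        "competitors_mentioned": "Rival One; Rival Two",  # hypothetical names
        "source_cited": "N",
    }])
    with open(demo_path, encoding="utf-8") as f:
        print(f.read())
```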

Step 3: Set up automated monitoring

Manual testing works for a baseline, but you need automation to track changes over time. Running 50 prompts across 5 AI models every week by hand (250 queries a week) isn't realistic.
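Whatever tool you use, the underlying job is a scheduled loop over prompts and models. The Python sketch below shows that loop under stated assumptions. `query_model` is a hypothetical placeholder that returns canned text so the example runs offline; in practice you would replace it with a real API client or your platform's export. The model names are illustrative labels. Because the same prompt can produce different answers on the same day, the loop samples each prompt several times and records a mention rate instead of a single yes/no.

```python
from datetime import date

# Illustrative model labels; use whichever models your audience relies on.
MODELS = ["chatgpt", "perplexity", "claude", "gemini", "google_ai_overviews"]


def query_model(model, prompt):
    """Hypothetical placeholder for a real API call or platform export.
    Returns canned text so the sketch runs without network access."""
    return f"For '{prompt}', {model} suggests Acme PM and Rival One."


def run_monitoring(prompts, brand, samples=3, run_date=None):
    """Query every prompt on every model `samples` times and record how often `brand` appears."""
    run_date = run_date or date.today().isoformat()
    rows = []
    for prompt in prompts:
        for model in MODELS:
            hits = sum(
                brand.lower() in query_model(model, prompt).lower()
                for _ in range(samples)
            )
            rows.append({"prompt": prompt, "model": model, "date": run_date,
                         "mention_rate": hits / samples})
    return rows


if __name__ == "__main__":
    weekly = run_monitoring([f"prompt {i}" for i in range(50)], "Acme PM")
    print(len(weekly), "observations")  # 50 prompts x 5 models = 250
```

You would then run this on a schedule (cron, a CI job, or your platform's built-in scheduler) and append the rows to your baseline.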

This is where dedicated AI visibility platforms come in. The category has exploded -- there are now 15+ tools that claim to track brand mentions in AI search. They vary enormously in what they actually do.

Here's a practical breakdown of the main options:

| Tool | Monitors ChatGPT | Monitors other LLMs | Content gap analysis | Content generation | Crawler logs | Free tier |
|---|---|---|---|---|---|---|
| Promptwatch | Yes | Yes (10 models incl. ChatGPT) | Yes | Yes (AI agent) | Yes | Trial |
| Otterly.AI | Yes | Yes | No | No | No | Yes |
| Peec AI | Yes | Yes | No | No | No | Limited |
| Athena HQ | Yes | Yes | Limited | No | No | Trial |
| Profound AI | Yes | Yes | Limited | No | No | No |
| Mentions.so | Yes | Limited | No | No | No | Yes |
| LLMrefs | Yes | Yes | No | No | No | Limited |
| Trakkr.ai | Yes | Yes | No | No | No | Trial |

Most of these tools are monitoring dashboards. They show you when and where your brand appears, track share of voice over time, and alert you to changes. That's genuinely useful -- but it's only half the job.

The other half is doing something about what you find. If you discover your brand is invisible for 40 prompts where a competitor appears consistently, you need to know why and what content to create. Most monitoring tools leave you to figure that out yourself.

Promptwatch is the platform that closes this loop. It monitors 10 AI models (ChatGPT, Perplexity, Claude, Gemini, Google AI Overviews, Grok, DeepSeek, Copilot, Meta AI, Mistral). It also runs answer gap analysis to show exactly which prompts your competitors are winning that you're not, then generates content designed to fix those gaps.

For teams that just need basic monitoring on a budget, Otterly.AI and Peec AI are reasonable starting points.

If you're at the enterprise end, Profound AI and Athena HQ have strong monitoring capabilities, though neither has the content generation layer that makes Promptwatch different.

Step 4: The metrics that actually matter

Not all visibility data is equally useful. Here's what to focus on:

Share of voice

What percentage of relevant prompts include your brand, compared to competitors? This is the headline metric. If you appear in 20 out of 50 tracked prompts and your main competitor appears in 35, that gap is your target.

Track this weekly or every two weeks. The trend matters more than the absolute number.
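The arithmetic is simple enough to script. The sketch below assumes that, for each tracked prompt, you have the set of brands named in the response. It reports the share of prompts each brand appears in, which is the definition used above. It also reports each brand's share of all brand mentions, an alternative some teams use. Brand names are hypothetical.

```python
def share_of_voice(results, brands):
    """results: one set per tracked prompt, holding the brands named in that response."""
    total = len(results)
    # Number of prompts in which each brand appears at least once.
    mentions = {b: sum(b in r for r in results) for b in brands}
    prompt_share = {b: mentions[b] / total for b in brands}
    # Alternative view: each brand's fraction of all mentions across the tracked brands.
    all_mentions = sum(mentions.values()) or 1
    mention_share = {b: mentions[b] / all_mentions for b in brands}
    return prompt_share, mention_share


if __name__ == "__main__":
    # The example above: 50 prompts, you appear in 20, your main competitor in 35.
    results = [{"You", "Competitor"}] * 20 + [{"Competitor"}] * 15 + [set()] * 15
    by_prompt, by_mention = share_of_voice(results, ["You", "Competitor"])
    print(by_prompt)  # {'You': 0.4, 'Competitor': 0.7}
    print(by_mention)
```

In this example the target is a 30-point gap (15 prompts). On the mention-share view, you hold about 36% of brand mentions (20 of 55).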

Citation rate

When your brand is mentioned, is your website cited as a source? A mention without a citation is weaker: it means the AI model knows about you but isn't actively pulling from your content. Citation rate tells you whether your pages are actually being crawled and treated as trustworthy sources by AI models.
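Citation rate is the fraction of responses that both mention you and cite your site. The minimal sketch below assumes each observation is a dict with boolean `brand_mentioned` and `source_cited` fields. These field names are hypothetical and match the baseline columns.

```python
def citation_rate(observations):
    """Cited mentions divided by all mentions; None when the brand was never mentioned."""
    mentioned = [o for o in observations if o["brand_mentioned"]]
    if not mentioned:
        return None
    return sum(o["source_cited"] for o in mentioned) / len(mentioned)


if __name__ == "__main__":
    week = [
        {"brand_mentioned": True, "source_cited": True},
        {"brand_mentioned": True, "source_cited": False},
        {"brand_mentioned": True, "source_cited": False},
        {"brand_mentioned": True, "source_cited": False},
        {"brand_mentioned": False, "source_cited": False},
    ]
    print(citation_rate(week))  # 0.25: cited in 1 of 4 mentions
```

Responses that don't mention you are left out of the denominator, so this metric moves independently of share of voice.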

Mention position

Being the first brand named in a response is meaningfully different from being fifth in a list. Some platforms track this as "prominence" or "ranking position." It's worth monitoring separately from raw mention frequency.
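Suppose you record a 1-based mention position for each response, leaving it blank where your brand is absent. Two summaries are then worth tracking alongside raw frequency: how often you are named first, and your average position when you are named. A minimal sketch with hypothetical data:

```python
from statistics import mean


def position_summary(positions):
    """positions: 1-based mention position per response, or None if the brand was absent."""
    if not positions:
        raise ValueError("no responses to summarize")
    named = [p for p in positions if p is not None]
    n = len(positions)
    return {
        "mention_rate": len(named) / n,
        # Denominator is all responses: how often you are the first brand named.
        "first_mention_rate": sum(p == 1 for p in named) / n,
        # Average position only over responses where the brand appeared.
        "avg_position": mean(named) if named else None,
    }


if __name__ == "__main__":
    print(position_summary([1, 3, None, 1, 5]))
```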

Sentiment accuracy

What is the AI saying about your brand, and is it accurate? This matters for two reasons. First, negative or inaccurate descriptions directly affect purchasing decisions. Second, if ChatGPT is saying something wrong about your product, that's a content gap -- there's probably a page on your site that should exist but doesn't.

Prompt coverage

Of your 50 tracked prompts, how many trigger at least one mention of your brand? This is your "coverage" score. A brand with 80% coverage is in a much stronger position than one at 30%, even if the 30% brand appears prominently when it does show up.
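Coverage counts prompts, not responses. Each prompt is usually run on several models, so the sketch below treats a prompt as covered if your brand appears in at least one model's response. That definition is an assumption; whichever one you choose, keep it fixed so the trend stays comparable. Field names are hypothetical.

```python
from collections import defaultdict


def prompt_coverage(rows):
    """rows: one dict per (prompt, model) run with 'prompt' and boolean 'brand_mentioned'."""
    covered = defaultdict(bool)
    for row in rows:
        # A prompt stays covered once any model's response mentions the brand.
        covered[row["prompt"]] |= row["brand_mentioned"]
    if not covered:
        raise ValueError("no rows")
    return sum(covered.values()) / len(covered)


if __name__ == "__main__":
    # 5 prompts x 2 models; the brand shows up on one model for the first 4 prompts.
    rows = [{"prompt": f"p{i}", "brand_mentioned": i < 4 and m == 0}
            for i in range(5) for m in range(2)]
    print(prompt_coverage(rows))  # 0.8
```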

Step 5: Connect AI visibility to actual traffic

This is where most tracking setups fall short. Share of voice is interesting, but what you really want to know is: is AI search driving visitors to my website, and are those visitors converting?

There are three ways to do this:

Server log analysis

AI crawlers (OpenAI's GPTBot, Perplexity's PerplexityBot, Anthropic's ClaudeBot, etc.) visit your website to gather content for model training and search indexes. Some user-triggered agents, such as OpenAI's ChatGPT-User, also fetch pages while answering a question. Your server logs record every visit. Analyzing these logs tells you which pages AI systems are reading, how often, and whether they're encountering errors.

This is the most direct signal of AI indexing health. If GPTBot is hitting your homepage but never crawling your product pages, that would help explain why ChatGPT knows your brand exists but can't describe what you actually do.
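If your server writes the common Apache/Nginx "combined" log format, a short script can count AI crawler requests per page and flag errors. This is a sketch under assumptions. The regex assumes the combined format. The bot list is a starting point drawn from user-agent tokens the vendors publish (GPTBot, OAI-SearchBot, and ChatGPT-User from OpenAI; PerplexityBot; ClaudeBot), so check each vendor's current documentation. The sample log lines are made up. User-agent strings can be spoofed, so verify important findings against the vendors' published IP ranges.

```python
import re
from collections import Counter

# Combined log format: ip ident user [time] "METHOD path PROTO" status bytes "referrer" "agent"
LOG_RE = re.compile(
    r'\S+ \S+ \S+ \[[^\]]+\] "\S+ (?P<path>\S+)[^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)
# Assumed user-agent tokens; confirm against each vendor's documentation.
AI_BOTS = ["GPTBot", "OAI-SearchBot", "ChatGPT-User", "PerplexityBot", "ClaudeBot"]

# Made-up sample lines for illustration.
SAMPLE_LOG = [
    '203.0.113.5 - - [12/Jan/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5123 "-" '
    '"Mozilla/5.0 (compatible; GPTBot/1.1; +https://openai.com/gptbot)"',
    '198.51.100.7 - - [12/Jan/2026:10:05:00 +0000] "GET /docs HTTP/1.1" 404 312 "-" '
    '"Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '192.0.2.9 - - [12/Jan/2026:10:06:00 +0000] "GET / HTTP/1.1" 200 900 "-" '
    '"Mozilla/5.0 (Windows NT 10.0)"',
]


def crawl_report(lines):
    """Count AI bot hits per (bot, path) and error responses per (bot, path, status)."""
    hits, errors = Counter(), Counter()
    for line in lines:
        m = LOG_RE.match(line)
        if not m:
            continue  # skip lines that aren't in combined format
        bot = next((b for b in AI_BOTS if b in m["agent"]), None)
        if bot is None:
            continue  # not one of the tracked AI agents
        hits[(bot, m["path"])] += 1
        if int(m["status"]) >= 400:
            errors[(bot, m["path"], m["status"])] += 1
    return hits, errors


if __name__ == "__main__":
    hits, errors = crawl_report(SAMPLE_LOG)
    print(hits.most_common())
    print(errors)
```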

Most platforms don't offer crawler log analysis. Promptwatch does, with real-time logs showing which AI bots are visiting which pages and flagging crawl errors. That's genuinely useful data that most competitors can't provide.

UTM parameters and referral traffic

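When users click through from an AI-generated response to your website, the referrer is typically logged as chatgpt.com (or the older chat.openai.com), perplexity.ai, or similar. Some assistants also tag outbound links with UTM parameters; ChatGPT links, for example, often carry utm_source=chatgpt.com. Set up segments in your analytics platform that match these referrers and UTM values to isolate this traffic.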

It's imperfect -- many AI interactions don't result in a click, and some referral data gets stripped -- but it gives you a directional read on AI-driven traffic volume.
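Most analytics tools let you build this segment with a referrer rule. If you process raw visit data yourself, the classification can look like the sketch below. It checks the `utm_source` query parameter first, because referrers are often stripped, then falls back to the referring hostname. The hostname list and the function name are assumptions; extend the list as new sources show up in your data.

```python
from urllib.parse import parse_qs, urlparse

# Assumed starting list of AI referrer hostnames / utm_source values; extend as needed.
AI_SOURCES = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "www.perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "claude.ai": "Claude",
    "copilot.microsoft.com": "Copilot",
}


def classify_visit(landing_url, referrer=""):
    """Return the AI source for a visit, or None if it doesn't look AI-driven."""
    # Prefer an explicit utm_source tag on the landing URL.
    utm = parse_qs(urlparse(landing_url).query).get("utm_source", [""])[0].lower()
    if utm in AI_SOURCES:
        return AI_SOURCES[utm]
    # Fall back to the referring hostname (None when there is no referrer).
    host = (urlparse(referrer).hostname or "").lower()
    return AI_SOURCES.get(host)


if __name__ == "__main__":
    print(classify_visit("https://example.com/pricing?utm_source=chatgpt.com"))
    print(classify_visit("https://example.com/", "https://www.perplexity.ai/search?q=crm"))
```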

Google Search Console integration

Some platforms integrate directly with GSC to correlate AI visibility changes with organic traffic changes. If your brand visibility in AI search increases for a cluster of queries and you see a corresponding lift in branded search traffic, that's a reasonable signal that AI mentions are influencing discovery.

Step 6: Diagnose why you're not appearing

Once you have baseline data and automated monitoring running, the real work begins: understanding why you're invisible for certain prompts.

The most common reasons:

No content covering the topic. If a user asks "best CRM for solopreneurs" and you have no page that addresses that specific use case, AI models have nothing to cite. The fix is creating that content.

Content exists but isn't being crawled. Check your server logs. If AI bots aren't visiting certain pages, look at your robots.txt, page load times, and internal linking structure.

Content exists but doesn't answer the question directly. AI models prefer content that directly and specifically answers the question being asked. A generic product page won't win over a detailed comparison article or a specific use-case guide.

Competitors have more authoritative third-party coverage. AI models heavily weight external sources -- review sites, Reddit discussions, industry publications. If your competitors are mentioned on G2, Reddit, and TechCrunch and you're not, that's a citation gap.

The model has outdated information. If your brand has changed significantly and AI models are still describing the old version, you need fresh content that explicitly addresses what's changed.
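For the crawl cause, a quick first check is whether robots.txt blocks AI crawlers from the pages you expect to be cited. Python's standard-library `urllib.robotparser` can answer that from the file's text. The agent list and the sample robots.txt below are illustrative assumptions, since vendors document their own user-agent tokens and can change them. A clean result here does not rule out other causes such as slow pages or weak internal linking.

```python
from urllib.robotparser import RobotFileParser

# Assumed user-agent tokens; confirm against each vendor's current documentation.
AI_AGENTS = ["GPTBot", "OAI-SearchBot", "ChatGPT-User", "PerplexityBot", "ClaudeBot"]

# Hypothetical robots.txt that accidentally blocks GPTBot from product pages.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /products/

User-agent: *
Allow: /
"""


def blocked_agents(robots_txt, url):
    """Return the AI agents that robots.txt forbids from fetching `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_AGENTS if not parser.can_fetch(agent, url)]


if __name__ == "__main__":
    print(blocked_agents(SAMPLE_ROBOTS, "https://example.com/products/widget"))
```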

Step 7: Build a feedback loop

Tracking is only useful if it changes what you do. The goal is a repeatable cycle:

- Run your prompt list, record results

- Identify the prompts where you're losing to competitors

- Diagnose why (missing content, crawl issues, citation gaps)

- Create or update content to address the gap

- Wait 4-6 weeks for AI models to re-crawl and update

- Re-run the same prompts and measure change

This cycle takes time. AI models don't update in real time -- there's a lag between publishing content and seeing it reflected in responses. Expect 4-8 weeks before new content shows up consistently.

The implication: you need to be patient and systematic. Don't publish one article and expect immediate results. Build a content calendar based on your gap analysis and track the cohort of prompts each piece of content is targeting.
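Tracking a cohort means comparing the same set of prompts before and after a piece of content goes live, at fixed check-in points. The minimal sketch below assumes you store each run as a mapping from prompt to whether your brand was mentioned. The 4-, 6-, and 8-week check-ins follow the timelines above. The prompts and dates are hypothetical.

```python
from datetime import date, timedelta


def recheck_dates(published, weeks=(4, 6, 8)):
    """Dates to re-run a content piece's prompt cohort after it is published."""
    return [published + timedelta(weeks=w) for w in weeks]


def cohort_share(run, cohort):
    """run: dict prompt -> bool (brand mentioned). Share of the cohort where the brand appears."""
    return sum(run.get(prompt, False) for prompt in cohort) / len(cohort)


if __name__ == "__main__":
    cohort = ["best crm for solopreneurs", "crm for freelancers",
              "simple crm for one person", "cheapest crm for a solo business"]
    before = {cohort[0]: True}
    after = {cohort[0]: True, cohort[1]: True, cohort[2]: True}
    print(recheck_dates(date(2026, 1, 5)))
    print(cohort_share(before, cohort), "->", cohort_share(after, cohort))  # 0.25 -> 0.75
```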

Step 8: Watch the channels most people ignore

ChatGPT doesn't only pull from your website. It also draws from Reddit discussions, YouTube videos, review platforms, and industry publications. If you're only optimizing your own content, you're missing a significant part of the picture.

Reddit in particular has outsized influence on AI responses for product and service recommendations. A thread where your brand is discussed positively (or negatively) can directly affect what ChatGPT says about you. Monitoring Reddit for brand mentions and participating in relevant communities is part of a complete AI visibility strategy.

The same logic applies to G2, Capterra, Trustpilot, and similar review sites. AI models treat these as high-authority sources. A strong review profile on these platforms makes it more likely your brand gets recommended.

Putting it together: a practical tracking stack

For most marketing teams, a reasonable setup looks like this:

- A dedicated AI visibility platform for automated prompt monitoring and share of voice tracking (Promptwatch for teams that want the full loop; Otterly.AI or Peec AI for budget-conscious monitoring-only setups)

- Google Search Console for correlating AI visibility changes with organic traffic

- Server log analysis (either through your platform or a separate tool) to understand AI crawler behavior

- A spreadsheet or dashboard to track your key metrics weekly

The brands that are winning in AI search right now aren't doing anything magical. They're just measuring consistently, identifying gaps faster than their competitors, and creating content that directly answers the questions AI models are being asked.

That's the whole game. The tracking infrastructure is what makes it possible to play it systematically rather than guessing.