Key takeaways

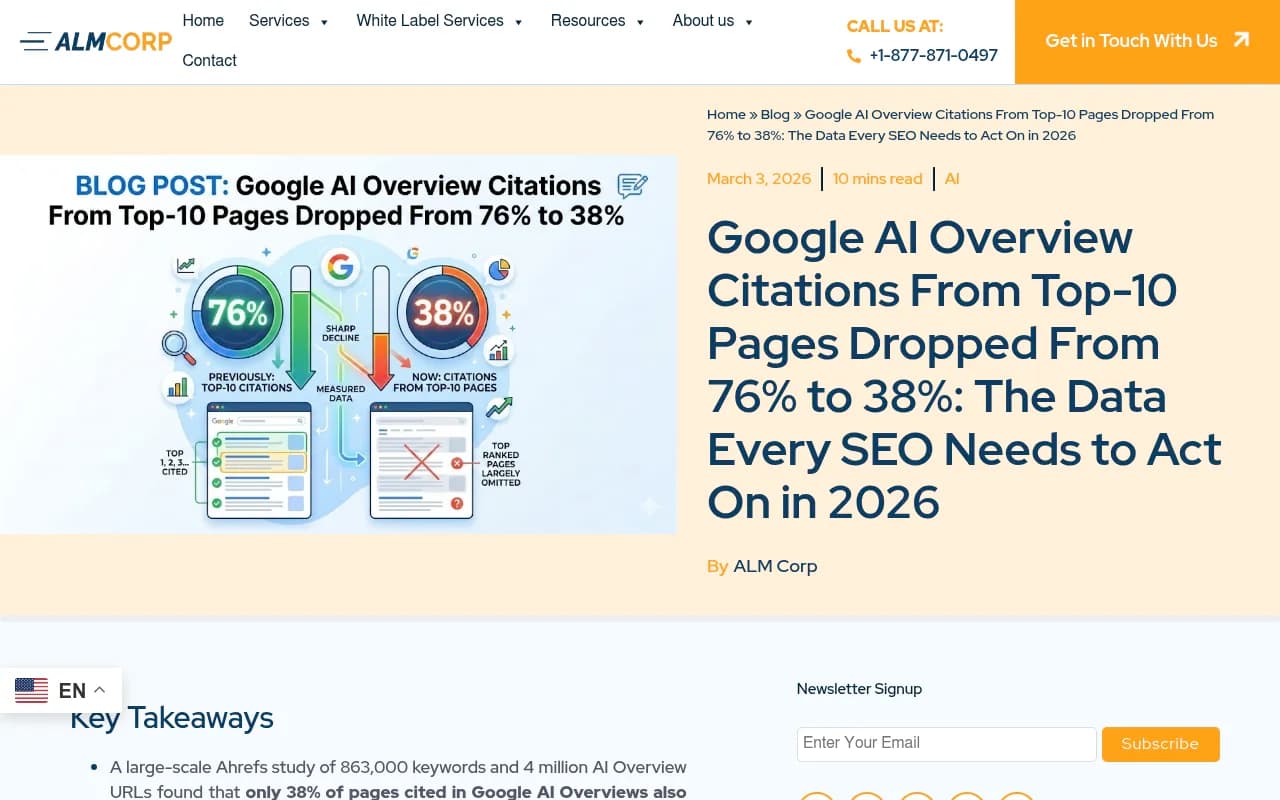

- Top-10 organic rankings now account for only 38% of Google AI Overview citations, down from 76% in mid-2025. Ranking high helps, but it's no longer the deciding factor.

- The three factors that actually drive citations are entity authority (clear brand identity), content extractability (structured, summarizable content), and topical differentiation (focused expertise).

- AI Overviews appear in roughly 30% of U.S. searches and cause a 61% drop in organic CTR for non-cited pages. Being cited, on the other hand, correlates with 35% more organic clicks and 91% more paid clicks.

- YouTube is now the single most-cited domain in AI Overviews, accounting for 18.2% of citations from outside the top 100. Multi-format content matters.

- Google's switch to Gemini 3 as the default AI Overview model in January 2026 is a likely driver of the citation behavior shift.

Something changed in how Google's AI Overviews select sources, and the data from early 2026 makes it hard to ignore.

In mid-2025, roughly 76% of AI Overview citations came from pages ranking in the top 10 organic results. By early 2026, that figure had dropped to 38%, according to an Ahrefs study of 863,000 keywords. A separate BrightEdge analysis puts the overlap even lower, at around 17%. Either way, the direction is clear: organic rank and AI citation are decoupling.

If you built your AI visibility strategy on the assumption that ranking well automatically earns you citations, that assumption no longer holds. Understanding what actually drives citations in 2026 requires looking past rank alone.

Why the citation-ranking relationship broke down

The short version: Google upgraded to Gemini 3 as the global default for AI Overviews on January 27, 2026. Gemini 3 is better at synthesizing information across multiple sources, which means it no longer needs to lean on the top-ranked pages as heavily as earlier models did.

The longer version involves something called query fan-out.

When a user submits a search query, Google's AI doesn't just look at results for that exact query. It splits the original question into multiple sub-queries and pulls citations from across all of those sub-query result sets. A page that ranks #47 for the original query might rank #3 for one of the sub-queries. That page gets cited.

This is why 31% of cited pages don't appear in the top 100 organic results at all for the original query. They're being surfaced through fan-out sub-queries, not the primary keyword.
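To make the fan-out effect concrete, here's a minimal sketch. The queries, rank numbers, and the top-10 cutoff are all made up for illustration; this is not how Google actually generates or scores sub-queries, just a way to see why a page at #47 can still end up as a candidate.

```python
# Hypothetical ranks for ONE page across the original query and its fan-out sub-queries.
# None means the page is not in the top 100 for that query.
ranks = {
    "best crm for startups": 47,        # original query
    "crm pricing for small teams": 3,   # sub-query (illustrative)
    "crm with free tier": None,         # sub-query (illustrative)
}


def best_rank(ranks_by_query):
    """Return (query, rank) for the page's strongest position, or None if unranked everywhere."""
    ranked = {q: r for q, r in ranks_by_query.items() if r is not None}
    if not ranked:
        return None
    query = min(ranked, key=ranked.get)
    return query, ranked[query]


def citation_candidate(ranks_by_query, cutoff=10):
    """Assumption: a page is a candidate if it ranks within `cutoff` for ANY query in the set."""
    best = best_rank(ranks_by_query)
    return best is not None and best[1] <= cutoff


print(best_rank(ranks))           # ('crm pricing for small teams', 3)
print(citation_candidate(ranks))  # True
```

The point of the sketch: judging this page only by its rank for the original query (#47) would miss the sub-query where it is strong.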

The practical implication: optimizing for a single keyword is no longer sufficient. You need topical depth across the full range of questions your audience might ask.

The three core factors that actually determine citations

Based on analysis of real citation patterns, three factors consistently separate cited pages from non-cited ones. These aren't theoretical. They show up in the data.

1. Entity authority

AI models need to clearly understand what your brand is, what it covers, and where it fits in its category. Not from a single page, but from the totality of signals across your site, your third-party mentions, your structured data, and your knowledge graph presence.

Weak or inconsistent entity signals mean the AI can't confidently identify you as a reliable source. It moves on.

This is why smaller, focused brands sometimes appear in AI Overviews alongside much larger competitors. A niche brand with crystal-clear entity signals can outperform a sprawling domain that covers everything loosely. The AI isn't impressed by size. It's looking for clarity.

Practical steps here include consistent NAP (name, address, phone) data across directories, a well-structured Wikipedia or Wikidata presence where applicable, clear "About" and author pages, and schema markup that explicitly defines your brand's topical scope.
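As a rough illustration of the schema markup step, the sketch below builds an Organization JSON-LD block. The brand name, URLs, and topics are placeholders, and using `knowsAbout` to express topical scope is one reasonable choice rather than a documented requirement.

```python
import json


def organization_jsonld(name, url, description, same_as, topics):
    """Build a schema.org Organization object as a plain dict."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        "sameAs": list(same_as),     # third-party profiles that corroborate the entity
        "knowsAbout": list(topics),  # assumption: used here to state topical scope
    }


# All values below are hypothetical placeholders.
markup = organization_jsonld(
    name="Example Analytics",
    url="https://www.example.com",
    description="Analytics software for small e-commerce teams.",
    same_as=["https://www.linkedin.com/company/example-analytics"],
    topics=["e-commerce analytics", "conversion tracking"],
)

# Embed the result in the page head inside a JSON-LD script tag.
print(f'<script type="application/ld+json">\n{json.dumps(markup, indent=2)}\n</script>')
```

Keeping the name, description, and `sameAs` links identical everywhere they appear is what makes the signal consistent.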

2. Content extractability

AI doesn't read your content the way a person does. It extracts. It's looking for clearly segmented, directly answerable chunks of information that can be summarized and synthesized with other sources.

If your content is written as flowing prose without clear structure, it's harder to extract from. If your answers are buried inside long paragraphs, they're harder to surface. If your page requires significant context to understand, it won't be used.

Extractable content tends to share these characteristics:

- Clear H2 and H3 headings that signal what each section answers

- Short, direct answers near the top of each section (before elaboration)

- FAQ sections with explicit question-and-answer formatting

- Numbered lists and structured comparisons where appropriate

- Tables for data-heavy comparisons
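One way to spot-check the "short, direct answer near the top" item is to measure the first paragraph under each heading. The sketch below does this with Python's standard-library HTML parser. The 60-word threshold is an arbitrary assumption, not a documented limit, and the sample HTML is invented.

```python
from html.parser import HTMLParser


class SectionAudit(HTMLParser):
    """Collect each H2/H3 heading with the word count of the first paragraph after it."""

    def __init__(self):
        super().__init__()
        self.sections = []   # [heading_text, first_paragraph_word_count or None]
        self._capturing = None
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if tag in ("h2", "h3", "p"):
            self._capturing = tag
            self._buffer = []

    def handle_data(self, data):
        if self._capturing:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag != self._capturing:
            return
        text = " ".join("".join(self._buffer).split())
        if tag in ("h2", "h3"):
            self.sections.append([text, None])
        elif self.sections and self.sections[-1][1] is None:
            self.sections[-1][1] = len(text.split())
        self._capturing = None


def long_openers(html, max_words=60):
    """Return headings whose first paragraph is missing or longer than max_words."""
    audit = SectionAudit()
    audit.feed(html)
    return [h for h, words in audit.sections if words is None or words > max_words]


sample_html = (
    "<h2>What is query fan-out?</h2>"
    "<p>Query fan-out splits one search into several sub-queries.</p>"
    "<h2>Pricing</h2><p>" + "word " * 80 + "</p>"
)
print(long_openers(sample_html))  # ['Pricing']
```

A flagged section isn't necessarily bad; it's a prompt to check whether the direct answer is buried.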

This is also why FAQ schema and HowTo schema continue to matter. They don't just help traditional SEO. They make your content machine-readable in a way that AI models can work with directly.
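For reference, here's a minimal sketch of generating FAQPage markup from question-and-answer pairs. The questions and answers are placeholders; the structure follows the schema.org FAQPage, Question, and Answer types.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Placeholder content for illustration only.
faq = faq_jsonld([
    ("What is query fan-out?",
     "Splitting one search into several sub-queries and drawing sources from all of them."),
    ("Does ranking #1 guarantee a citation?",
     "No. Ranking helps, but it is not sufficient on its own."),
])

print(json.dumps(faq, indent=2))
```

The markup should mirror the visible Q&A on the page rather than introduce answers that readers can't see.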

3. Topical differentiation

AI Overviews synthesize from multiple sources. Each cited source is typically contributing something specific: a definition, a data point, a step-by-step process, a comparison. The AI is assembling a complete answer from parts.

If your content covers the same ground as every other page on the topic, you're competing for the same citation slot. If your content covers a specific angle that other pages don't, you become the obvious source for that angle.

This is topical differentiation. It doesn't mean writing about obscure topics. It means having a clear, specific perspective or depth on your topic that other sources lack. Original research, proprietary data, first-hand experience, and specific use-case coverage all contribute to this.

What the traffic data actually means for your strategy

The CTR numbers are stark. When AI Overviews appear, organic CTR drops by an average of 61% for non-cited pages. Paid CTR drops 68%. These figures come from Seer Interactive's analysis of 25.1 million impressions.

For cited pages, the picture reverses. Cited brands see 35% more organic clicks and 91% more paid clicks compared to their baseline. The AI Overview essentially acts as an endorsement, and users follow the cited links at higher rates than they click traditional results.
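A quick worked example shows how far apart those two outcomes are. The baseline of 1,000 clicks is hypothetical, and applying both percentages to the same baseline is a simplification, since the underlying studies may measure them against different starting points.

```python
def adjusted_clicks(baseline_clicks, pct_change):
    """Apply a percentage change (e.g. -61 or +35) to a baseline click count."""
    return round(baseline_clicks * (1 + pct_change / 100))


baseline = 1000  # hypothetical clicks per channel before AI Overviews appear

organic_not_cited = adjusted_clicks(baseline, -61)  # 390
organic_cited = adjusted_clicks(baseline, 35)       # 1350
paid_not_cited = adjusted_clicks(baseline, -68)     # 320
paid_cited = adjusted_clicks(baseline, 91)          # 1910

print(f"Organic: {organic_not_cited} not cited vs {organic_cited} cited")
print(f"Paid:    {paid_not_cited} not cited vs {paid_cited} cited")
```

On these assumptions, the same page earns roughly 3.5 times as many organic clicks when cited as when it is not.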

This creates a two-tier search result. Pages that get cited benefit. Pages that don't get cited lose traffic even if they rank well organically. The middle ground is shrinking.

AI Overviews now appear in roughly 48% of tracked queries according to BrightEdge's February 2026 data, up 58% year-over-year. That coverage is still accelerating. The queries where AI Overviews don't appear are increasingly the exception.

The YouTube factor

One finding that surprises most SEOs: YouTube is now the single most-cited domain in Google AI Overviews, accounting for 18.2% of all citations that come from outside the top 100 organic results.

This makes sense when you think about how Gemini processes information. Google owns YouTube, and YouTube content is deeply indexed. Video content often covers topics in depth, with clear structure (chapters, timestamps, transcripts). For many topics, the most authoritative and extractable content exists on YouTube, not on traditional web pages.

The implication is that multi-format content strategy isn't optional anymore. If your brand only publishes written content, you're missing a citation channel that's now responsible for nearly a fifth of AI Overview citations from non-top-100 sources.

E-E-A-T signals and third-party presence

Google's E-E-A-T framework (Experience, Expertise, Authoritativeness, Trustworthiness) has always mattered for traditional search. For AI Overviews, it matters in a slightly different way.

AI models are more likely to cite sources that have strong third-party corroboration. This means:

- Author credentials that are verifiable (LinkedIn profiles, published work, institutional affiliations)

- Brand mentions on authoritative third-party sites (Wikipedia, industry publications, review platforms)

- Reddit and forum discussions that reference your brand positively

- Review site presence (Google Business Profile, Trustpilot, G2, etc.)

The Reddit angle is particularly interesting. Reddit content appears in AI Overviews with notable frequency, and discussions that mention your brand or content can influence how AI models perceive your authority. This is a channel most traditional SEO strategies don't account for at all.

Technical factors that affect citation eligibility

Beyond content quality, a few technical factors determine whether AI crawlers can even access and process your pages.

AI crawlers (Googlebot, but also the crawlers for ChatGPT, Perplexity, and others) need to be able to reach your pages, render them correctly, and extract structured content. Common issues that block citation eligibility include:

- JavaScript-heavy pages that don't render cleanly for crawlers

- Slow page load times that cause crawlers to abandon the page

- Robots.txt rules that inadvertently block AI crawlers

- Thin or duplicate content that signals low value

- Missing or incorrect structured data markup
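The robots.txt item in the list above is easy to test. This sketch uses Python's standard `urllib.robotparser` to report which crawlers a given robots.txt would block for a URL. The user-agent tokens listed are commonly published ones, but treat the list as an assumption and verify it against each vendor's current documentation. The sample rules and URL are invented.

```python
from urllib.robotparser import RobotFileParser

# Assumption: tokens to check. Confirm against each crawler's own documentation.
AI_USER_AGENTS = ["Googlebot", "GPTBot", "PerplexityBot", "ClaudeBot"]


def blocked_agents(robots_txt, url, agents=AI_USER_AGENTS):
    """Return the user agents that the given robots.txt text disallows for `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt that blocks one AI crawler site-wide.
sample_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

print(blocked_agents(sample_robots, "https://example.com/blog/post"))  # ['GPTBot']
```

To check a live site, `RobotFileParser` can also fetch the file itself via `set_url()` and `read()`.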

It's increasingly important to monitor your crawler logs to see which pages AI bots actually visit, how often, and whether they encounter errors. Most SEO teams don't have visibility into this. Tools like Promptwatch provide real-time AI crawler logs that show exactly which pages AI engines are reading and where they're hitting problems.
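If you have raw server access logs, a basic version of this check can be scripted. The sketch below assumes the common "combined" log format and matches bots by substring in the user-agent string. The bot tokens and sample log lines are illustrative, and user-agent strings can be spoofed, so treat the counts as indicative.

```python
import re
from collections import Counter

# Assumption: substrings that identify the crawlers you care about.
AI_BOT_TOKENS = ("GPTBot", "PerplexityBot", "ClaudeBot", "Googlebot")

# Matches the request, status, size, referrer, and user-agent of a combined-format line.
LOG_PATTERN = re.compile(
    r'"(?:GET|HEAD|POST) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def summarize_ai_hits(lines):
    """Count hits and error responses (status >= 400) per (bot, path)."""
    hits, errors = Counter(), Counter()
    for line in lines:
        match = LOG_PATTERN.search(line)
        if not match:
            continue
        agent = match["agent"].lower()
        bot = next((t for t in AI_BOT_TOKENS if t.lower() in agent), None)
        if bot is None:
            continue
        key = (bot, match["path"])
        hits[key] += 1
        if int(match["status"]) >= 400:
            errors[key] += 1
    return hits, errors


# Invented log lines for illustration.
sample_log = [
    '203.0.113.5 - - [10/Feb/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.6 - - [10/Feb/2026:10:01:00 +0000] "GET /docs/setup HTTP/1.1" 500 312 "-" "Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
    '198.51.100.7 - - [10/Feb/2026:10:02:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0) Chrome/120.0"',
]

hits, errors = summarize_ai_hits(sample_log)
print(dict(hits))
print(dict(errors))
```

Pages that show up in `errors` but matter for citations are the first ones to fix.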

Content freshness

AI Overviews favor current information. For queries where recency matters (statistics, product comparisons, industry developments), pages with recent publication or update dates have a clear advantage.

This doesn't mean rewriting every page constantly. It means:

- Updating statistics and data points when new research is published

- Adding a "last updated" date that's accurate and visible

- Revisiting pages that cover time-sensitive topics at least annually

- Publishing new content that covers recent developments in your topic area

The freshness signal is particularly important for queries that include year references (like "best X in 2026") or that implicitly require current information.
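The "at least annually" review can be turned into a simple stale-page report. This sketch assumes you can export each page's last-updated date, for example from your CMS. The URLs, dates, and 365-day window are placeholders.

```python
from datetime import date, timedelta


def stale_pages(last_updated, today, max_age_days=365):
    """Return URLs whose last update is older than max_age_days, sorted alphabetically."""
    cutoff = today - timedelta(days=max_age_days)
    return sorted(url for url, updated in last_updated.items() if updated < cutoff)


# Hypothetical export of page URLs and their last-updated dates.
pages = {
    "/blog/best-crm-2025": date(2024, 11, 15),
    "/blog/ai-overview-guide": date(2025, 9, 1),
    "/pricing": date(2026, 1, 20),
}

print(stale_pages(pages, today=date(2026, 3, 1)))  # ['/blog/best-crm-2025']
```

Passing `today` explicitly keeps the report reproducible. For time-sensitive topics, a shorter `max_age_days` may be more appropriate.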

How to audit your current AI Overview visibility

Before optimizing, you need to know where you stand. A few approaches:

Manual checking is the simplest: search your target queries in Google and note whether AI Overviews appear, and whether your pages are cited. This doesn't scale, but it gives you a quick baseline.

Google Search Console shows impressions and clicks from AI Overviews as a separate search type filter. This is free and gives you actual traffic data, though it doesn't show you competitor citations or the specific prompts driving visibility.

Dedicated AI visibility platforms go further. They track which prompts your brand appears in across multiple AI models, show competitor citation patterns, and surface the content gaps you need to fill. For teams that are serious about AI search visibility, this level of insight is worth having.
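For the manual baseline described above, it helps to record each check in a consistent shape so you can compare runs over time. Here's a minimal sketch. The field names and sample queries are made up for illustration.

```python
def visibility_summary(checks):
    """Summarize manual checks: how often an AI Overview appears, and how often you are cited in it."""
    total = len(checks)
    with_overview = [c for c in checks if c["has_overview"]]
    cited = [c for c in with_overview if c["cited"]]
    return {
        "queries_checked": total,
        "overview_rate": len(with_overview) / total if total else 0.0,
        "citation_rate": len(cited) / len(with_overview) if with_overview else 0.0,
    }


# Hypothetical results from searching four target queries by hand.
checks = [
    {"query": "best crm for startups", "has_overview": True, "cited": True},
    {"query": "crm pricing comparison", "has_overview": True, "cited": False},
    {"query": "example analytics login", "has_overview": False, "cited": False},
    {"query": "crm free trial", "has_overview": False, "cited": False},
]

print(visibility_summary(checks))
```

Note that `citation_rate` is calculated only over queries where an AI Overview actually appeared, which is usually the more useful denominator.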

Tools worth knowing about

Several platforms have emerged specifically for tracking and improving AI search visibility. Here's a quick comparison of the main options:

| Tool | AI models tracked | Content gap analysis | Crawler logs | Best for |
|---|---|---|---|---|
| Promptwatch | 10+ (ChatGPT, Perplexity, Gemini, Claude, etc.) | Yes | Yes | Full optimization loop |
| Otterly.AI | 4-5 | No | No | Basic monitoring |
| Profound | 6+ | Limited | No | Enterprise monitoring |
| SE Ranking | 5+ | Limited | No | SEO teams |
| BrightEdge | 5+ | Limited | No | Enterprise SEO |
| Ahrefs Brand Radar | 3-4 | No | No | Traditional SEO teams |

The meaningful difference between these tools is whether they stop at showing you data or help you act on it. Monitoring-only tools tell you where you're not being cited. Optimization platforms help you figure out why and what to create to fix it.

A practical optimization checklist for 2026

Based on everything above, here's what actually moves the needle:

Entity clarity

- Consistent brand name, description, and category across all pages and directories

- Schema markup (Organization, Person, BreadcrumbList) on key pages

- Clear "About" page with verifiable credentials

- Wikipedia/Wikidata presence if your brand qualifies

Content structure

- H2/H3 headings that directly answer questions

- FAQ sections with explicit Q&A formatting

- FAQ schema markup

- Tables for comparisons, numbered lists for processes

- Direct answers near the top of each section, before elaboration

Topical depth

- Cover the full range of sub-questions your audience asks, not just the primary keyword

- Original data, research, or first-hand experience where possible

- Specific use-case coverage that differentiates your content from generic alternatives

Multi-format presence

- YouTube content covering your core topics

- Active presence on Reddit and relevant forums

- Third-party mentions on authoritative sites

Technical health

- Clean rendering for JavaScript-heavy pages

- Fast load times

- Accurate robots.txt that doesn't block AI crawlers

- Regular content freshness updates for time-sensitive pages

Measurement

- Google Search Console AI Overview filter for traffic data

- Dedicated AI visibility tracking for competitive intelligence and gap analysis

The bottom line

The data from 2026 is telling a consistent story: AI Overviews are selecting sources based on clarity, structure, and topical relevance, not just organic rank. The brands showing up are the ones that made their content easy to extract, built clear entity signals, and covered their topics with enough depth to be useful across the full range of sub-queries AI models generate.

Ranking #1 still helps, but it's no longer sufficient, and for many queries it's not even the primary driver of citation probability. The 38% figure from Ahrefs is the clearest signal yet that a different optimization strategy is needed.

The good news is that the factors driving AI Overview citations are things you can actually control and improve. Content structure, entity clarity, topical depth, and multi-format presence are all within reach for any team willing to audit their current state and make targeted changes.