Key takeaways

- Yoast, Surfer, and NeuronWriter were designed to optimize for Google's ranking signals -- not for how LLMs like ChatGPT select and cite sources.

- These tools still add real value for traditional SEO, and that indirectly helps with AI visibility (more on this below), but they don't directly influence ChatGPT citations.

- Ranking in AI search requires a different strategy: topical authority, citation-worthy content structure, and monitoring which prompts trigger your brand.

- In 2025, many SEO teams discovered this gap the hard way: their Google-optimized content was invisible in ChatGPT, Perplexity, and Gemini.

- Dedicated GEO (Generative Engine Optimization) tools now exist to fill this gap, and they work very differently from traditional content optimizers.

The question sounds almost provocative: did the tools you've been using for years -- the ones your team swears by, the ones that drove your Google rankings -- actually do anything for your visibility in ChatGPT?

The honest answer is: sort of, sometimes, and not in the way you'd hope.

2025 was the year a lot of SEO professionals had to sit with an uncomfortable realization. Their content was well-optimized. Their Surfer scores were high. Their Yoast indicators were green. And yet when they asked ChatGPT to recommend a product in their category, their brand didn't come up. A competitor with half the domain authority did.

Let's break down what actually happened.

What these tools were built to do

Before judging them by a standard they weren't designed for, it's worth being clear about what Yoast, Surfer, and NeuronWriter actually do.

Yoast SEO

Yoast is a WordPress plugin that handles the fundamentals: meta titles, meta descriptions, canonical tags, XML sitemaps, readability scoring, and basic keyword density checks. It's been around since 2010 and is installed on millions of sites. It's good at what it does.

What it doesn't do: Yoast has no concept of how LLMs consume content. It doesn't know what ChatGPT is looking for when it decides which sources to cite. It optimizes for Googlebot, not for AI crawlers from OpenAI, Anthropic, or Perplexity.

Surfer SEO

Surfer is a content optimization platform that analyzes the top-ranking pages for a given keyword and tells you what to include in your content -- which terms to use, how many headings, roughly how long the piece should be, what questions to answer. It's genuinely useful for closing content gaps against Google competitors.

The Surfer team themselves published a piece in 2025 making the distinction clear: ChatGPT generates content, Surfer helps content rank. They're not the same thing, and Surfer's optimization engine is built around live SERP data, not LLM citation patterns.

NeuronWriter

NeuronWriter sits in a similar space to Surfer -- semantic content optimization using NLP analysis, competitor breakdowns, and content scoring. A YouTube experiment from late 2025 showed a piece going from a content score of 22/100 (written purely with ChatGPT) to 78/100 after NeuronWriter optimization. That's a meaningful improvement for Google SEO purposes.

The workflow the creator recommended -- ChatGPT for drafting, NeuronWriter for optimization -- is a solid one for traditional search. But the video was titled "ChatGPT SEO: How to Make AI Content Rank on Google." Not rank in ChatGPT. That distinction matters enormously.

How ChatGPT actually decides what to cite

This is where things get interesting -- and where most SEO teams got caught off guard in 2025.

Google ranks pages based on signals like backlinks, on-page optimization, page speed, and E-E-A-T. You can influence those signals with tools like Surfer and Yoast. The feedback loop is relatively clear.

LLMs work differently. When ChatGPT generates a response and cites a source (or recommends a brand), it's drawing on a combination of:

- What it learned during training (which sources appeared frequently and authoritatively across the web)

- Real-time retrieval (for models with browsing enabled, like ChatGPT with search)

- The structure and clarity of content that AI crawlers have indexed

None of these factors map neatly to a Surfer content score or a Yoast green light.

What actually influences LLM citations tends to be:

- Topical authority: Does your site cover a topic comprehensively, across multiple angles and related questions? LLMs favor sources that look like genuine subject matter experts, not thin pages optimized for one keyword.

- Citation patterns across the web: If Reddit threads, industry blogs, and YouTube comments mention your brand in the context of a topic, LLMs pick that up. This is why some brands with modest Google rankings still show up in ChatGPT.

- Content structure: Clear headings, direct answers to questions, FAQ sections, and well-organized information make it easier for AI models to extract and cite your content accurately.

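- Crawlability by AI bots: OpenAI's GPTBot and OAI-SearchBot, Anthropic's ClaudeBot, and Perplexity's PerplexityBot all crawl the web (OpenAI uses GPTBot to gather training data and OAI-SearchBot to surface pages in ChatGPT search). If they're hitting errors on your site, or if you've accidentally blocked them in robots.txt, your content may never make it into that vendor's training or retrieval pool.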
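Your server access logs are the most direct way to see whether AI crawlers are reaching your pages or hitting errors. The sketch below assumes Apache/Nginx "combined" log format and matches on the user-agent tokens OpenAI, Anthropic, and Perplexity publish for their bots (vendors change these, so check their docs). The sample log lines are made up. User-agent strings can be spoofed, so treat this as a first pass, not verification.

```python
import re
from collections import Counter

# User-agent tokens published by the vendors (assumed current; verify against their docs).
AI_CRAWLERS = ("GPTBot", "OAI-SearchBot", "ChatGPT-User", "ClaudeBot", "PerplexityBot")

# Apache/Nginx "combined" format: ip ident user [time] "request" status bytes "referer" "user-agent"
LOG_LINE = re.compile(
    r'^\S+ \S+ \S+ \[[^\]]+\] "\S+ (?P<path>\S+)[^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def crawler_errors(log_lines):
    """Count 4xx/5xx responses served to AI crawlers, keyed by (bot, status, path)."""
    errors = Counter()
    for line in log_lines:
        match = LOG_LINE.match(line)
        if match is None:
            continue  # skip lines that are not in combined format
        bot = next((b for b in AI_CRAWLERS if b in match["agent"]), None)
        status = int(match["status"])
        if bot is not None and status >= 400:
            errors[(bot, status, match["path"])] += 1
    return errors


# Hypothetical log lines for illustration only.
SAMPLE_LOG = [
    '203.0.113.7 - - [10/Oct/2025:13:55:36 +0000] "GET /pricing HTTP/1.1" 404 512 "-" '
    '"Mozilla/5.0 (compatible; GPTBot/1.2; +https://openai.com/gptbot)"',
    '203.0.113.8 - - [10/Oct/2025:13:56:00 +0000] "GET / HTTP/1.1" 200 1024 "-" "ClaudeBot/1.0"',
    '203.0.113.9 - - [10/Oct/2025:13:57:00 +0000] "GET /blog HTTP/1.1" 503 0 "-" "PerplexityBot/1.0"',
    '66.249.66.1 - - [10/Oct/2025:13:58:00 +0000] "GET /old HTTP/1.1" 404 0 "-" "Googlebot/2.1"',
    "not a log line",
]

for (bot, status, path), count in crawler_errors(SAMPLE_LOG).items():
    print(bot, status, path, count)
```

To run it against a real site, pass the lines of your own access log (for example, an open file handle) instead of `SAMPLE_LOG`. Googlebot's 404 is ignored here because the point is to isolate what AI crawlers experience.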

Surfer and NeuronWriter can help with content structure and topical depth -- indirectly. But they don't tell you which prompts ChatGPT users are typing that relate to your category, which competitors are being cited for those prompts, or whether AI crawlers are successfully reading your pages.

The indirect benefit: why good SEO still matters

Here's where it gets nuanced. Traditional SEO tools aren't useless for AI visibility -- they're just insufficient on their own.

A few ways they still contribute:

Better content = more citations elsewhere. If your Surfer-optimized article ranks well on Google and gets linked to from other sites, those links and mentions feed into the broader web corpus that LLMs train on. High-quality, well-structured content tends to accumulate the kind of web presence that LLMs recognize as authoritative.

Readable structure helps AI extraction. NeuronWriter's emphasis on semantic terms, heading structure, and FAQ sections isn't just good for Google. It also makes content easier for AI models to parse and quote accurately. A page with clear H2s and direct answers is more likely to be cited verbatim than a wall of text.

Yoast's technical basics matter. If your site has broken canonical tags, missing sitemaps, or pages that return errors, AI crawlers will have the same problems Googlebot does. Getting the fundamentals right with Yoast creates a foundation that AI indexing can build on.

So the honest framing is: these tools are necessary but not sufficient. They handle the baseline. They don't handle the new game.

What the 2025 data actually showed

By mid-2025, a pattern had emerged across SEO communities on Reddit and LinkedIn: teams with strong Google rankings were often invisible in AI search, while some smaller brands with weaker traditional SEO metrics were showing up consistently in ChatGPT and Perplexity responses.

The difference usually came down to a few things:

- The smaller brands had invested in content that directly answered the questions people ask AI models -- conversational, question-based, comprehensive

- They were active in communities (Reddit, forums, YouTube) where AI models pull citation signals

- They had structured their content around topics rather than individual keywords

None of this is captured in a Surfer content score or a Yoast readability grade.

A LinkedIn comparison of NeuronWriter vs Surfer noted that "Surfer SEO provides more granular data and even lets you compare top-ranking pages by structure" -- which is genuinely useful for Google SEO. But neither tool shows you what's happening in AI search results.

The tools built for AI visibility

A new category of tools emerged specifically to address this gap. They work differently from content optimizers.

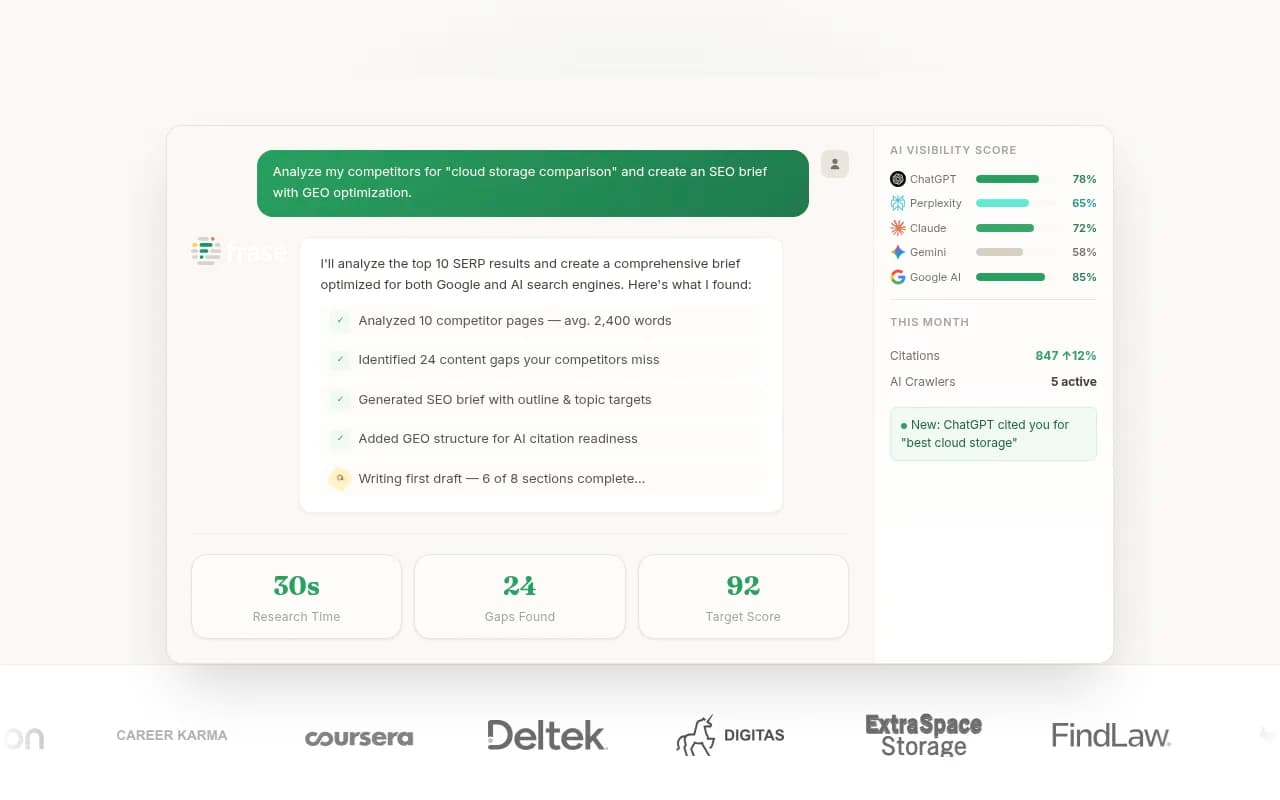

Promptwatch is one of the more complete platforms in this space. Rather than scoring your content against Google's top results, it tracks how your brand appears (or doesn't appear) across 10 AI models -- ChatGPT, Perplexity, Claude, Gemini, Grok, and others. The core workflow is: find the prompts where competitors are being cited but you're not, generate content specifically designed to fill those gaps, and track whether your visibility improves.

That's a fundamentally different loop from "optimize your content score from 22 to 78." It's asking: which questions are people asking AI models in your category, and is your brand in the answer?
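At its core, the "where are competitors cited but you're not" question is a comparison across sampled answers. This is not how Promptwatch or any other product implements it. It's a minimal sketch that assumes you've already collected AI answers per prompt, and every brand name and answer below is hypothetical.

```python
import re


def mentions(answer: str, brand: str) -> bool:
    """Case-insensitive whole-word match of a brand name in an AI answer."""
    return re.search(rf"\b{re.escape(brand)}\b", answer, re.IGNORECASE) is not None


def prompt_gaps(answers: dict[str, list[str]], you: str, competitors: list[str]) -> dict[str, list[str]]:
    """Map each prompt where `you` never appear but at least one competitor does
    to the list of competitors that were mentioned."""
    gaps = {}
    for prompt, samples in answers.items():
        if any(mentions(a, you) for a in samples):
            continue  # you already show up for this prompt
        cited = [c for c in competitors if any(mentions(a, c) for a in samples)]
        if cited:
            gaps[prompt] = cited
    return gaps


# Hypothetical answers sampled from an AI model for two prompts.
answers = {
    "best project management tool for a 10-person startup": [
        "Popular picks include TaskFlow and BoardBee for small teams.",
    ],
    "project management software with calendar sync": [
        "Acme PM and TaskFlow both offer calendar sync.",
    ],
}

print(prompt_gaps(answers, you="Acme PM", competitors=["TaskFlow", "BoardBee"]))
```

Each prompt in the result is a candidate for new or restructured content. Word-boundary matching avoids counting "TaskFlows" as a mention of "TaskFlow", though real brand detection would also need to handle aliases and misspellings.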

A few other tools worth knowing about:

Frase sits in an interesting middle ground -- it's primarily a content research and brief tool, but its question-research features are useful for identifying the kinds of queries that show up in AI responses.

MarketMuse has long focused on topical authority and content gaps, which maps reasonably well to what LLMs reward. If you're building out a content strategy for AI visibility, its topic modeling is more relevant than keyword-by-keyword optimization.

Clearscope is another solid content optimization tool that emphasizes semantic coverage -- similar to Surfer and NeuronWriter, with the same indirect benefits for AI visibility.

A direct comparison: traditional SEO tools vs AI visibility tools

| Capability | Yoast | Surfer SEO | NeuronWriter | Promptwatch |
|---|---|---|---|---|
| On-page SEO optimization | Yes | Yes | Yes | No |
| Content scoring vs Google SERPs | No | Yes | Yes | No |
| Keyword research | Basic | Yes | Yes | No |
| Tracks ChatGPT/Perplexity citations | No | No | No | Yes |
| Identifies prompts competitors rank for | No | No | No | Yes |
| AI crawler log monitoring | No | No | No | Yes |
| Content generation for AI visibility | No | Partial | Partial | Yes |
| Reddit/YouTube citation tracking | No | No | No | Yes |
| Multi-LLM visibility dashboard | No | No | No | Yes |

The table tells the story. These are different tools solving different problems. Yoast, Surfer, and NeuronWriter are built for Google. Promptwatch and similar GEO platforms are built for AI search.

What actually works for ranking in ChatGPT

Based on what emerged from 2025, here's a practical framework:

Build topical authority, not just keyword coverage. Instead of optimizing individual pages for individual keywords, think about whether your site comprehensively covers a topic. If you sell project management software, do you have content covering every angle of that topic -- team collaboration, remote work, agile methodology, integrations, pricing comparisons? LLMs favor sources that look like genuine experts.
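One rough way to audit this is to list the subtopics an expert source would be expected to cover, then check which ones your existing pages address. The sketch assumes a hypothetical page inventory and crude keyword matching on titles. Dedicated topical-authority tools use much richer topic models, so read the score as a checklist, not a metric.

```python
def topic_coverage(subtopics: dict[str, list[str]], page_titles: list[str]):
    """Return (fraction of subtopics covered, list of missing subtopics).

    A subtopic counts as covered if any page title contains one of its
    keywords (case-insensitive substring match, a deliberately crude proxy).
    """
    if not subtopics:
        return 1.0, []
    titles = [t.lower() for t in page_titles]
    missing = [
        name
        for name, keywords in subtopics.items()
        if not any(k.lower() in title for k in keywords for title in titles)
    ]
    return 1 - len(missing) / len(subtopics), missing


# Hypothetical subtopics for a project management software site.
subtopics = {
    "team collaboration": ["collaboration", "teamwork"],
    "remote work": ["remote"],
    "agile methodology": ["agile", "scrum", "kanban"],
    "integrations": ["integration", "integrate"],
    "pricing comparisons": ["pricing", "price", " vs "],
}
# Hypothetical existing page titles.
pages = [
    "How to run a Kanban board for a small team",
    "Remote work checklist for distributed teams",
    "Pricing: how our plans compare",
]

score, gaps = topic_coverage(subtopics, pages)
print(f"{score:.0%} covered; missing: {gaps}")
# → 60% covered; missing: ['team collaboration', 'integrations']
```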

Answer the questions people ask AI models. These are often more conversational and specific than Google search queries. "What's the best project management tool for a 10-person startup?" is a different question from "project management software." Tools like Promptwatch can surface the actual prompts being used in your category.

Don't block AI crawlers. Check your robots.txt. GPTBot, ClaudeBot, PerplexityBot -- if these are blocked, your content isn't in the picture. Some site owners blocked them reflexively in 2023-2024 without thinking through the implications.
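Python's standard-library `urllib.robotparser` can do a quick check of which AI crawlers a robots.txt file shuts out. This is a minimal sketch: the crawler list is an assumption based on the vendors' published user-agent tokens, and the sample robots.txt is made up.

```python
from urllib.robotparser import RobotFileParser

# Crawler tokens to test (assumed current; vendors publish and update these).
AI_CRAWLERS = ["GPTBot", "OAI-SearchBot", "ClaudeBot", "PerplexityBot"]


def blocked_ai_crawlers(robots_txt: str, url: str = "https://example.com/") -> list[str]:
    """Return the AI crawlers that this robots.txt forbids from fetching `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in AI_CRAWLERS if not parser.can_fetch(bot, url)]


# Hypothetical robots.txt that blocks GPTBot site-wide and everyone from /admin/.
SAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

print(blocked_ai_crawlers(SAMPLE_ROBOTS_TXT))
```

To check a live site, call `parser.set_url(".../robots.txt")` and `parser.read()` instead of `parse()`. Note that `robotparser` uses simple prefix matching on paths, so rules that rely on wildcards may be interpreted differently by a given crawler.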

Get mentioned in the right places. Reddit threads, YouTube videos, and industry forums that discuss your category all feed into LLM training data and retrieval. A brand mentioned positively in a well-upvoted Reddit thread has a better chance of appearing in ChatGPT than a brand with a perfectly optimized landing page that nobody links to.

Structure content for extraction. Use clear headings, direct answers, and FAQ sections. If a human can quickly scan your page and find a specific answer, an AI model probably can too.
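A quick self-audit is to pull a page's heading outline and see whether it reads like a set of answerable questions. The sketch below uses the standard-library `html.parser` and treats headings ending in "?" as question-style. That is a rough heuristic for human review, not a model of how any AI system actually parses pages, and the sample HTML is hypothetical.

```python
from html.parser import HTMLParser


class HeadingOutline(HTMLParser):
    """Collect (level, text) for every h1-h3 heading in an HTML document."""

    def __init__(self):
        super().__init__()
        self.headings = []
        self._level = None  # heading level currently open, if any
        self._buf = []

    def handle_starttag(self, tag, attrs):
        if tag in ("h1", "h2", "h3"):
            self._level = int(tag[1])
            self._buf = []

    def handle_data(self, data):
        if self._level is not None:
            self._buf.append(data)  # includes text inside nested tags like <em>

    def handle_endtag(self, tag):
        if self._level is not None and tag == f"h{self._level}":
            self.headings.append((self._level, "".join(self._buf).strip()))
            self._level = None


def outline(html: str):
    parser = HeadingOutline()
    parser.feed(html)
    parser.close()
    return parser.headings


def question_headings(html: str):
    """Headings phrased as questions, which pair naturally with a direct answer below."""
    return [text for _, text in outline(html) if text.endswith("?")]


SAMPLE_HTML = (
    "<h1>Pricing</h1><h2>How much does it cost?</h2><p>Plans start at $10 per month.</p>"
    "<h2>Plans</h2><h3>Does it <em>integrate</em> with a calendar?</h3>"
)

for level, text in outline(SAMPLE_HTML):
    print("  " * (level - 1) + text)
print(question_headings(SAMPLE_HTML))
```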

Track what's actually happening. This is the part most teams skipped in 2025. You can't improve what you don't measure. Knowing your Surfer content score is 78/100 tells you nothing about whether ChatGPT is citing you. You need visibility data from the AI models themselves.
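Measurement can start as simply as running the same prompts repeatedly and counting how often your brand appears, per model. Because LLM answers vary from run to run, a rate over several samples says more than a single check. This sketch assumes you've already stored the responses; the model labels, brand, and answers are hypothetical.

```python
from collections import defaultdict


def mention_rate(responses, brand):
    """responses: iterable of (model, prompt, answer_text) tuples.

    Returns {model: share of that model's answers mentioning `brand`},
    using a case-insensitive substring match.
    """
    hits = defaultdict(int)
    totals = defaultdict(int)
    for model, _prompt, answer in responses:
        totals[model] += 1
        if brand.lower() in answer.lower():
            hits[model] += 1
    return {model: hits[model] / totals[model] for model in totals}


# Hypothetical stored responses.
responses = [
    ("model-a", "best PM tool for startups", "Try TaskFlow or Acme PM."),
    ("model-a", "best PM tool for startups", "TaskFlow is a common choice."),
    ("model-b", "best PM tool for startups", "Acme PM works well for small teams."),
    ("model-b", "PM tool with calendar sync", "Acme PM syncs with your calendar."),
]

print(mention_rate(responses, "Acme PM"))
# → {'model-a': 0.5, 'model-b': 1.0}
```

Tracking these rates over time, per model and per prompt group, shows whether new content is actually moving AI visibility.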

The bottom line

Yoast, Surfer, and NeuronWriter are good tools. They do what they were built to do. In 2025, they helped a lot of sites rank well in Google, and that's not nothing -- Google is still the dominant search channel for most businesses.

But they weren't built for AI search, and they didn't magically transfer their benefits to ChatGPT visibility. Teams that assumed "if I rank in Google, I'll show up in AI" got a rude awakening.

The smarter move going into 2026 is to keep using these tools for what they're good at, while adding a dedicated AI visibility layer on top. That means understanding which prompts matter in your category, tracking where you're cited and where you're not, and creating content specifically designed to be picked up by LLMs.

The two strategies aren't in conflict. They're just different games, and you need to be playing both.