Key takeaways

- According to research from Foundation, over 75% of Perplexity citations include content from Reddit or YouTube, making video content a direct input into AI-generated answers

- AI models like ChatGPT and Perplexity use video transcripts, metadata, captions, and engagement signals -- not just the video itself -- to decide what to surface

- YouTube comments matter because they add keyword-rich, conversational text that AI crawlers can read and interpret as social proof

- Optimizing for AI citation requires treating your YouTube content like a text document: structured, specific, and built around questions people actually ask

- Tools like Promptwatch can show you which YouTube pages (and other sources) are being cited by AI models, so you can reverse-engineer what's working

Why AI models are pulling from YouTube at all

There's a common assumption that ChatGPT and Perplexity only cite articles, research papers, and official websites. That's not really true anymore.

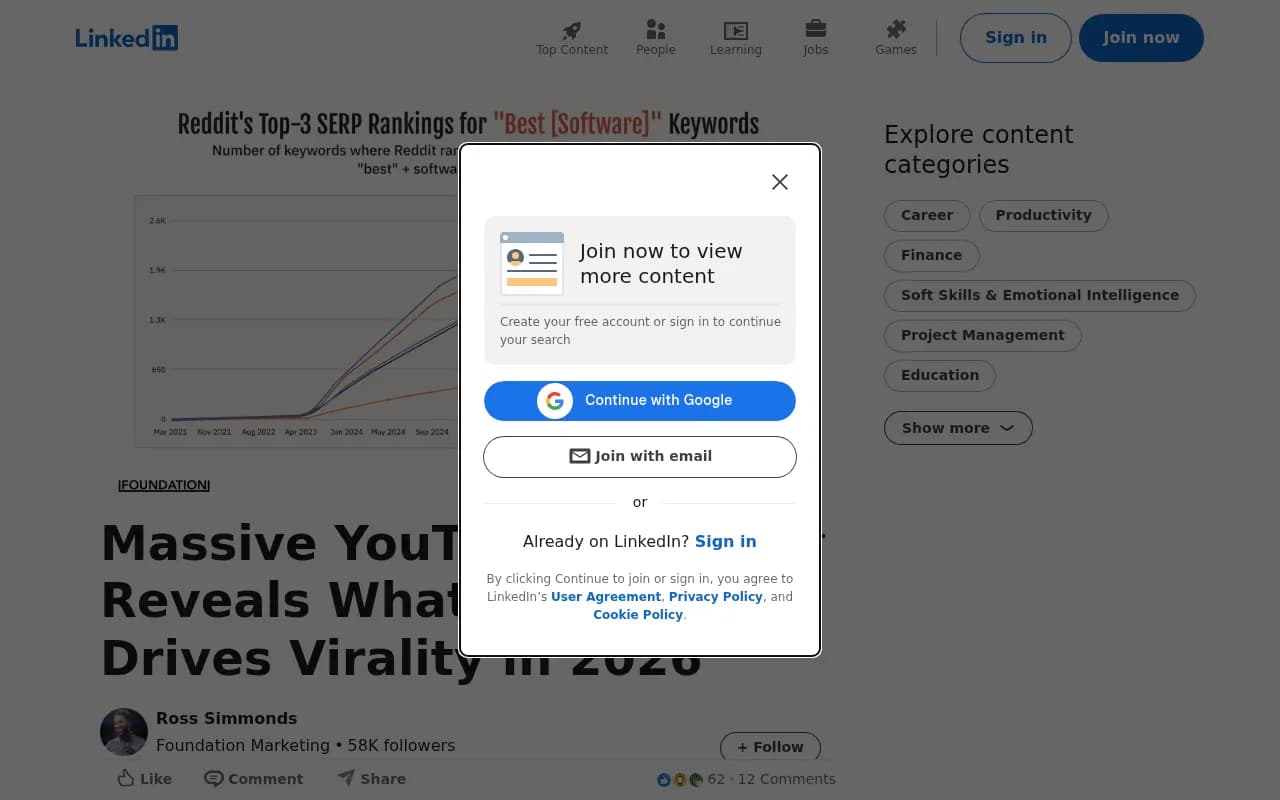

Perplexity in particular has always been aggressive about pulling from wherever the best answer lives -- and increasingly, that's YouTube. According to research from Ross Simmonds and the team at Foundation, over 75% of Perplexity citations include content from Reddit or YouTube. For ChatGPT, the number is lower but still significant, with 66% of citations including a Reddit URL.
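
To make a figure like this concrete for your own niche, you could collect the citation URLs from a set of AI answers and measure what share of them point at Reddit or YouTube. The sketch below is a minimal illustration under stated assumptions. It counts individual URLs, whereas the cited research may have measured per answer or in some other way. The domain list and sample URLs are hypothetical.

```python
from urllib.parse import urlparse

# Domains treated as "Reddit or YouTube" for this illustration (an assumption).
COMMUNITY_DOMAINS = {"reddit.com", "youtube.com", "youtu.be"}


def registered_host(url: str) -> str:
    """Return the lowercase hostname with a leading 'www.', 'm.', or 'old.' removed."""
    host = (urlparse(url).hostname or "").lower()
    for prefix in ("www.", "m.", "old."):
        if host.startswith(prefix):
            return host[len(prefix):]
    return host


def community_share(citation_urls: list[str]) -> float:
    """Fraction of citation URLs whose host is one of COMMUNITY_DOMAINS."""
    if not citation_urls:
        return 0.0
    hits = sum(registered_host(u) in COMMUNITY_DOMAINS for u in citation_urls)
    return hits / len(citation_urls)


if __name__ == "__main__":
    # Hypothetical citations copied by hand from a few AI answers.
    sample = [
        "https://www.youtube.com/watch?v=abc123",
        "https://old.reddit.com/r/SEO/comments/xyz/",
        "https://example.com/blog/search-console-guide",
        "https://youtu.be/def456",
    ]
    print(f"{community_share(sample):.0%}")  # prints 75%
```

A real audit would need many more answers per query to say anything meaningful. The URL-level count is simply the easiest version to reason about.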

The reason is straightforward: YouTube hosts an enormous amount of genuinely useful, structured content. How-to tutorials, product comparisons, expert walkthroughs -- these are exactly the kinds of answers AI models want to surface when someone asks "how do I do X" or "what's the best Y for Z."

What's changed in 2026 is that AI models have gotten better at reading YouTube content. They're not just scraping page titles. They're processing transcripts, reading descriptions, parsing comment threads, and weighing engagement signals. Your video is, in effect, a text document that happens to have a video attached.

What AI models actually read from your YouTube content

Transcripts are the most important signal

When ChatGPT or Perplexity evaluates a YouTube video for citation, the transcript is the primary text they're working with. If your video is a rambling, unstructured conversation, the AI has very little to latch onto. If it's a clear, well-organized walkthrough that directly answers a specific question, it becomes a strong citation candidate.

This means the way you speak in your videos matters as much as what you say. Structured explanations, clear definitions, and direct answers to questions all translate well into transcript text that AI models can parse.

Auto-generated captions are better than nothing, but manually edited transcripts or uploaded caption files are significantly cleaner. Garbled auto-captions introduce noise that can dilute the quality of the text signal.
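
If you edit your transcript by hand, you can upload it as a caption file instead of relying on auto-captions. YouTube accepts several caption formats, including SubRip (`.srt`). The sketch below renders timed segments as an SRT file. It assumes your edited transcript is already split into timed segments, and the `Segment` type is hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    start: float  # seconds from the start of the video
    end: float    # seconds from the start of the video
    text: str     # manually edited caption text


def srt_timestamp(seconds: float) -> str:
    """Format seconds as an SRT timestamp, HH:MM:SS,mmm."""
    total_ms = round(seconds * 1000)
    hours, rem = divmod(total_ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{ms:03d}"


def to_srt(segments: list[Segment]) -> str:
    """Render segments as numbered SRT cues separated by blank lines."""
    cues = []
    for index, seg in enumerate(segments, start=1):
        cues.append(
            f"{index}\n"
            f"{srt_timestamp(seg.start)} --> {srt_timestamp(seg.end)}\n"
            f"{seg.text.strip()}\n"
        )
    return "\n".join(cues)


if __name__ == "__main__":
    transcript = [
        Segment(0.0, 4.5, "To set up Google Search Console, start by verifying your domain."),
        Segment(4.5, 9.2, "The fastest method is usually a DNS TXT record."),
    ]
    # Redirect stdout to a .srt file, then upload it in YouTube Studio.
    print(to_srt(transcript))
```

Keeping the cleanup in the transcript text, rather than in the video, means the same edited wording can also feed your description and any companion blog post.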

Titles, descriptions, and tags

These are the metadata layer, and they work similarly to on-page SEO -- except the audience is now both human viewers and AI crawlers. A title that clearly states what question the video answers ("How to set up Google Search Console in 2026") is far more citable than something vague or clickbait-y ("I tried this and was SHOCKED").

Descriptions deserve more attention than most creators give them. A well-written description that summarizes the video's key points, uses natural language, and includes the specific terms someone might search for gives AI models a clean summary to work from. Think of it as a mini-article that accompanies your video.

Comments -- the underrated signal

This is where things get interesting. YouTube comments are publicly readable text, and AI crawlers can access them. A comment section full of substantive discussion -- people asking follow-up questions, sharing their own experiences, debating the finer points of what was covered -- adds a layer of conversational, keyword-rich content that reinforces the video's topical relevance.

Comments that include specific terminology, product names, or detailed questions signal to AI models that this video is a hub of genuine expertise on the topic. A comment saying "I tried this with [specific tool] and it worked perfectly" is more useful to an AI model than a hundred "great video!" replies.
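
One way to act on this is to triage your own comments, so you know which threads deserve a substantive reply. The sketch below is a hypothetical heuristic, not a description of how any AI model scores comments. `GENERIC_PHRASES`, the word threshold, and the topic terms are all assumptions you would tune for your channel.

```python
import re

# Hypothetical low-information praise phrases; tune for your channel.
GENERIC_PHRASES = {"great video", "nice", "thanks", "love this", "first", "awesome"}


def is_substantive(comment: str, topic_terms: set[str], min_words: int = 8) -> bool:
    """Rough heuristic: not pure praise, long enough, and on-topic or a question."""
    text = comment.lower().strip()
    # Strip punctuation and emoji so "Great video!!!" matches "great video".
    normalized = re.sub(r"[^a-z0-9 ]+", "", text).strip()
    if normalized in GENERIC_PHRASES:
        return False
    words = re.findall(r"[a-z0-9']+", text)
    if len(words) < min_words:
        return False
    mentions_topic = any(term.lower() in text for term in topic_terms)
    asks_question = "?" in text
    return mentions_topic or asks_question


if __name__ == "__main__":
    terms = {"search console", "sitemap"}
    comments = [
        "Great video!",
        "Does the sitemap step still work if my site is on a subdomain?",
        "I followed along and Search Console verified my domain in about ten minutes.",
    ]
    for c in comments:
        print(is_substantive(c, terms), "|", c)
```

The point is prioritization, not filtering. Short comments still deserve a reply, but the ones flagged as substantive are where a detailed answer adds the most text to the page.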

This doesn't mean you should stuff your comment section with fake engagement. It means you should actively respond to comments with substantive answers, ask follow-up questions that prompt real discussion, and treat your comment section as an extension of the video's content.

Cross-platform signals

If your video is embedded in blog posts, linked from forum threads, or referenced in other YouTube videos, AI models treat that as an authority signal. It's the same logic as backlinks in traditional SEO -- external references suggest the content is trustworthy and worth surfacing.

This is why a YouTube-only strategy has limits. The brands getting cited most often are those whose videos exist within a broader content ecosystem: the video is supported by a blog post, the blog post is shared on LinkedIn, the LinkedIn post gets discussed on Reddit. Each connection strengthens the signal.

The content types that get cited most

Not all YouTube content is equally likely to get pulled into AI responses. Based on what we know about how AI models select sources, certain formats consistently outperform others.

Comparison videos perform well because they directly answer the "X vs Y" queries that are extremely common in AI searches. When someone asks Perplexity "ChatGPT vs Perplexity -- which is better for research?", a well-structured comparison video with a clear transcript is a natural citation target.

How-to tutorials are strong because they answer specific procedural questions. The more specific the better -- "how to add chapters to a YouTube video" will outperform "YouTube tips for beginners" every time.

Evergreen explainers have a long shelf life. AI models don't heavily discount older content the way social algorithms do. A well-made explainer from two years ago that still accurately describes how something works can continue getting cited indefinitely.

Opinion and trend analysis is trickier. These videos can get cited when they're from recognized experts or when the opinion has been widely shared and discussed, but they're harder to optimize for.

What tends not to get cited: highly produced entertainment content, vlogs, reaction videos, and anything where the value is primarily visual rather than informational.

How YouTube virality data connects to AI citation

The 1of10 study analyzing 62.6 billion views across 323,000+ outlier videos found that emotion drives YouTube performance more than information density. Titles triggering joy, controversy, or curiosity consistently outperformed neutral ones. Videos in the 15-25 minute range outperformed shorter content across most niches.

Here's the connection to AI citation that's easy to miss: videos that perform well on YouTube also tend to accumulate the engagement signals (watch time, comments, shares, embeds) that AI models interpret as quality indicators. Virality and AI citability aren't the same thing, but they share some underlying drivers.

The implication for content strategy is that you don't have to choose between making content that humans want to watch and making content that AI models want to cite. The overlap is larger than most people assume. A video that's genuinely useful, clearly structured, and emotionally resonant will tend to do well on both dimensions.

One nuance: the study found that titles with numbers saw roughly 11% fewer views on average. This runs counter to a lot of traditional SEO advice. For AI citation specifically, numbered titles ("5 ways to...") can still work well because they signal structured content, but the YouTube performance data suggests keeping them out of your primary title and using them in descriptions instead.

Practical optimization steps

Structure your videos like articles

Before recording, write an outline. Not a loose bullet list -- an actual structured outline with a clear introduction, distinct sections, and a conclusion that summarizes the key points. This structure will come through in your transcript and make the video far more parseable by AI models.

Open with a direct answer

Many creators build to their main point. For AI citation purposes, it's better to state the answer upfront and then explain it. This mirrors how AI models prefer to structure their own responses, and it makes the transcript more likely to contain the specific answer text that gets quoted.

Use chapter markers

YouTube chapters (added via timestamps in the description) create structured metadata that AI models can read. They also improve watch time, which feeds back into the engagement signals that influence citation likelihood.
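
If your outline already maps to start times, the chapter lines can be generated rather than typed. YouTube's help documentation currently lists these requirements for chapters: the first timestamp is 00:00, there are at least three timestamps in ascending order, and each chapter is at least 10 seconds long. Check the current docs, because these rules may change. The sketch below enforces those rules. The outline titles and times are hypothetical.

```python
def format_timestamp(seconds: int) -> str:
    """Format seconds as MM:SS, or H:MM:SS for videos over an hour."""
    hours, rem = divmod(seconds, 3600)
    minutes, secs = divmod(rem, 60)
    if hours:
        return f"{hours}:{minutes:02d}:{secs:02d}"
    return f"{minutes:02d}:{secs:02d}"


def chapter_block(chapters: list[tuple[int, str]], video_length: int) -> str:
    """Build description chapter lines, checking the rules described above."""
    if len(chapters) < 3:
        raise ValueError("YouTube requires at least three chapters")
    if chapters[0][0] != 0:
        raise ValueError("The first chapter must start at 00:00")
    starts = [start for start, _ in chapters] + [video_length]
    # Each chapter runs until the next one starts (or the video ends).
    # A gap under 10 s also catches out-of-order timestamps.
    for (start, title), next_start in zip(chapters, starts[1:]):
        if next_start - start < 10:
            raise ValueError(f"Chapter {title!r} is shorter than 10 seconds")
    return "\n".join(f"{format_timestamp(s)} {t}" for s, t in chapters)


if __name__ == "__main__":
    outline = [
        (0, "What Search Console does"),
        (45, "Verifying your domain"),
        (210, "Submitting a sitemap"),
        (480, "Reading the Performance report"),
    ]
    print(chapter_block(outline, video_length=720))
```

Using descriptive chapter titles (rather than "Part 2") gives the description the same question-shaped phrasing that the rest of this section recommends.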

Optimize your description as a standalone document

Write your description as if it's a short article that someone might read without watching the video. Include the key points, the specific terms, and the questions your video answers. 200-400 words is a reasonable target.
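
A quick pre-publish check can enforce the 200-400 word target and confirm that the terms you care about actually appear. This is a minimal sketch: you supply the phrase list, and plain case-insensitive substring matching is a deliberate simplification.

```python
def check_description(description: str, key_phrases: list[str],
                      min_words: int = 200, max_words: int = 400) -> list[str]:
    """Return a list of warnings; an empty list means the draft passes."""
    warnings = []
    word_count = len(description.split())
    if word_count < min_words:
        warnings.append(f"Only {word_count} words; aim for at least {min_words}.")
    elif word_count > max_words:
        warnings.append(f"{word_count} words; consider trimming to about {max_words}.")
    lowered = description.lower()
    for phrase in key_phrases:
        if phrase.lower() not in lowered:
            warnings.append(f"Missing key phrase: {phrase!r}")
    return warnings


if __name__ == "__main__":
    draft = "In this video we walk through setting up Google Search Console step by step."
    for warning in check_description(draft, ["Google Search Console", "sitemap"]):
        print(warning)
```

The phrases worth checking are usually the questions your video answers, worded the way a viewer would type them.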

Respond to comments with depth

When someone asks a follow-up question in your comments, answer it thoroughly. These responses add more text content to the page and demonstrate genuine expertise. A comment thread where the creator engages substantively is a stronger signal than one where comments go unanswered.

Build cross-platform support

Publish a companion blog post for your most important videos. Share them in relevant communities. Get them embedded in other articles. Each external reference strengthens the authority signal that AI models use to evaluate citation-worthiness.

Tracking which YouTube content is actually getting cited

The gap between "I think this video might be getting cited" and "I know exactly which videos are being cited, by which AI models, and for which queries" is significant. Most brands are operating in the dark.

Promptwatch tracks citations across 10 AI models including ChatGPT, Perplexity, Claude, and Gemini, and it shows you exactly which pages -- including YouTube pages -- are appearing in AI responses for specific prompts. This lets you see what's working, identify which video formats and topics are getting traction, and prioritize your production accordingly.

For brands that want to monitor YouTube's influence on AI recommendations more broadly, tools like Brand24 can track mentions across platforms, while Perplexity itself is useful for manually testing whether your videos are being surfaced for specific queries.
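
For manual testing, it helps to log the source URLs each AI answer cites and check them against your own video IDs. The sketch below assumes you have copied those URLs by hand. It does not call any Promptwatch, Perplexity, or YouTube API. It handles the common `watch?v=`, `youtu.be`, `/shorts/`, `/embed/`, and `/live/` URL shapes, and any other shape returns `None`.

```python
from typing import Optional
from urllib.parse import parse_qs, urlparse


def youtube_video_id(url: str) -> Optional[str]:
    """Extract the video ID from common YouTube URL shapes, else None."""
    parsed = urlparse(url)
    host = (parsed.hostname or "").lower()
    if host == "youtu.be":
        return parsed.path.lstrip("/").split("/")[0] or None
    if host in {"youtube.com", "www.youtube.com", "m.youtube.com"}:
        if parsed.path == "/watch":
            return parse_qs(parsed.query).get("v", [None])[0]
        for prefix in ("/shorts/", "/embed/", "/live/"):
            if parsed.path.startswith(prefix):
                return parsed.path[len(prefix):].split("/")[0] or None
    return None


def cited_videos(citation_urls: list[str], my_video_ids: set[str]) -> set[str]:
    """Return which of your video IDs appear among the cited URLs."""
    found = {youtube_video_id(u) for u in citation_urls}
    return found & my_video_ids


if __name__ == "__main__":
    # Hypothetical: URLs copied from the sources shown with a few AI answers.
    citations = [
        "https://www.youtube.com/watch?v=abc123&t=42s",
        "https://www.reddit.com/r/SEO/comments/xyz/",
        "https://youtu.be/zzz999",
    ]
    mine = {"abc123", "def456"}
    print(sorted(cited_videos(citations, mine)))
```

Repeating the same prompts over time and logging the results is what turns one-off spot checks into a trend you can act on.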

The comment section as a competitive advantage

Most brands treat YouTube comments as a chore -- something to moderate, occasionally respond to, and mostly ignore. That's a mistake in 2026.

A rich comment section does several things simultaneously. It adds topical text content to the page. It signals genuine community engagement to both YouTube's algorithm and AI crawlers. And it creates a feedback loop: real user questions inform your next video, which answers those questions more directly and is therefore more citable.

The brands winning at YouTube-driven AI citation aren't just making good videos. They're building communities around those videos, and those communities generate the kind of authentic, substantive discussion that AI models find credible.

This is harder to fake than metadata optimization, which is partly why it works. You can stuff keywords into a description in an afternoon. Building a comment section full of genuine expert discussion takes time and consistent engagement.

A comparison of what signals matter most

| Signal | Impact on YouTube algorithm | Impact on AI citation | Difficulty to optimize |
|---|---|---|---|
| Transcript quality | Low | High | Medium |
| Title clarity | High | High | Low |
| Description depth | Low | High | Low |
| Comment volume | Medium | Medium | Medium |
| Comment quality/depth | Low | Medium-High | High |
| Watch time / retention | High | Medium | High |
| External embeds/links | Low | High | High |
| Chapter markers | Low | Medium | Low |
| Cross-platform mentions | Low | High | High |
| Engagement rate (likes) | High | Low-Medium | Medium |

The pattern here is worth noting: the signals that matter most for AI citation (transcript quality, description depth, external links) are different from the signals that drive YouTube's own algorithm (watch time, click-through rate, engagement rate). You need to optimize for both, but they require different tactics.

What this means for your content strategy

YouTube is no longer just a distribution channel. For brands that want to appear in AI-generated answers, it's a content format that AI models actively read, evaluate, and cite. The transcript is your article. The description is your meta summary. The comments are your community signals. The external links are your authority indicators.

The brands that treat YouTube production with the same rigor they apply to written content -- structured outlines, clear answers, optimized metadata, active community management -- are the ones showing up in ChatGPT and Perplexity responses. The ones treating it as a secondary channel where production quality doesn't matter are largely invisible to AI models.

That gap is only going to widen as AI search continues to grow. Getting your YouTube content citation-ready now is considerably easier than trying to catch up later.