Summary

- Page-level tracking shows which specific URLs AI models cite, not just brand mentions -- critical for understanding what content actually drives visibility

- Promptwatch leads with page-level citation tracking, AI crawler logs, and content gap analysis that shows what to create next

- Most competitors (Otterly.AI, Peec.ai, AthenaHQ) track brand-level visibility but lack granular page attribution

- Citation data reveals which content formats (listicles, comparisons, how-tos) AI models prefer to cite

- AI crawler logs (available in Promptwatch, missing in most tools) show which pages AI models actually read vs. which they cite

Why page-level tracking matters more than brand-level visibility

Most AI visibility tools tell you "your brand appeared 47 times this month in ChatGPT responses." That's a start. But it doesn't answer the question that actually matters: which pages are driving those citations?

Page-level tracking shows the specific URLs AI models cite when they mention your brand. This changes everything:

- You see which content formats work (product comparisons vs. tutorials vs. landing pages)

- You identify gaps -- topics where competitors get cited but you don't

- You connect visibility to traffic -- which cited pages actually drive clicks

- You know what to create next based on what AI models already prefer to cite

Brand-level tracking is a vanity metric. Page-level tracking is actionable.

The platforms that actually track citations at the page level

Promptwatch: page-level tracking + content gap analysis + AI crawler logs

Promptwatch is the only platform that combines page-level citation tracking with content gap analysis and AI crawler logs. You see:

- Which pages get cited across ChatGPT, Claude, Perplexity, Gemini, and 6 other AI models

- Which prompts trigger citations for each page (prompt-to-page mapping)

- Which pages AI crawlers visit but don't cite (indexing vs. citation gap)

- Which prompts competitors rank for that you don't (answer gap analysis)

- AI-generated content that fills those gaps, grounded in 880M+ citation data points

The action loop: find gaps, generate content, track results. Most tools stop at step one.

Pricing: Essential $99/mo (1 site, 50 prompts), Professional $249/mo (2 sites, 150 prompts, crawler logs), Business $579/mo (5 sites, 350 prompts). Free trial available.

Scrunch: broad AI engine coverage with page-level visibility

Scrunch tracks citations across multiple AI engines and shows page-level data in its reporting. You can see which URLs appear in responses and compare your citation footprint vs. competitors.

Where it's weaker: several reviews note it's stronger at monitoring than optimization. It shows you what's happening but doesn't prescribe what to do next. No content gap analysis or AI crawler logs.

Pricing: Starts around $300/mo for entry plans, $500/mo for team plans.

Profound: enterprise page-level tracking with governance features

Profound positions itself as an enterprise platform with deep page-level attribution. You can drill down into which pages AI models cite and why. Strong governance features for large teams that need audit trails and approval workflows.

Where it's weaker: higher price point, slower onboarding. Reviews mention it's built for enterprise buyers, not small teams that need to move fast.

Pricing: Custom enterprise pricing, typically $1,000+/mo.

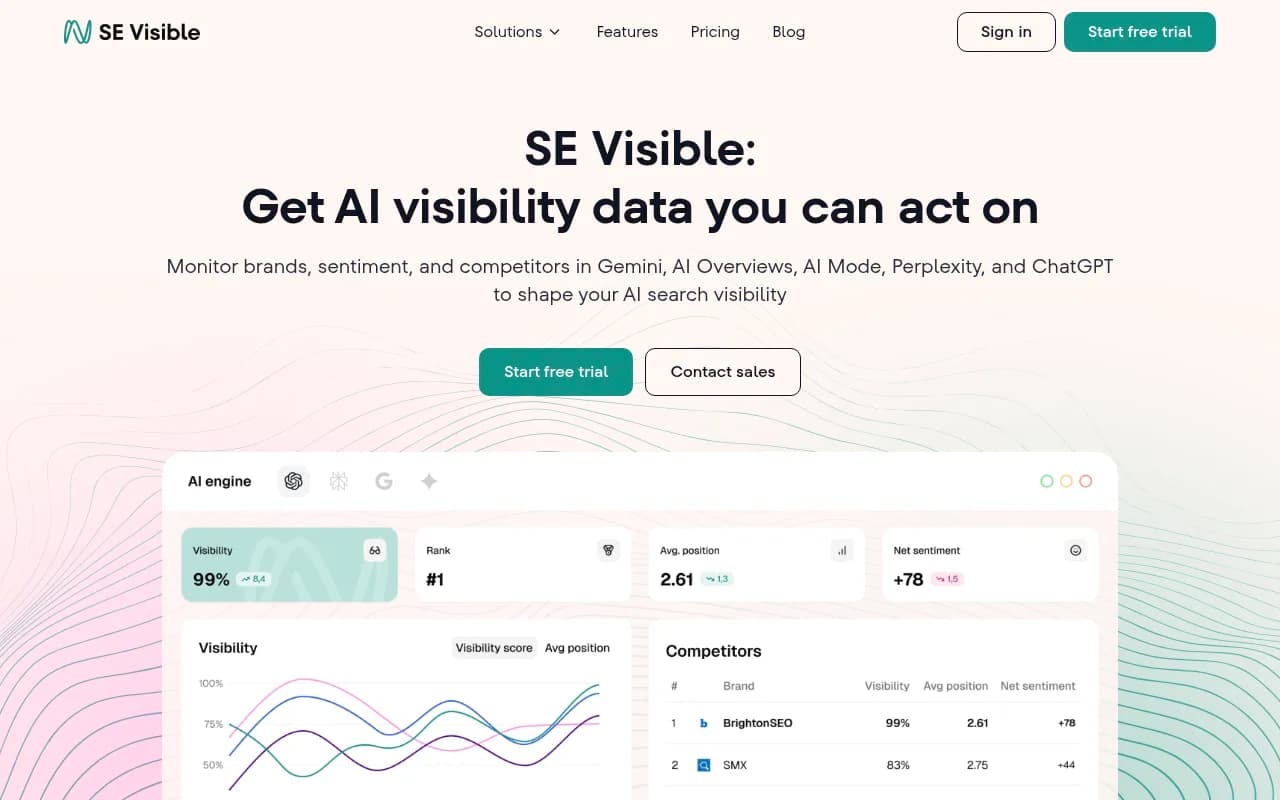

SE Visible (SE Ranking's AI visibility tool): page-level tracking with SEO integration

SE Visible tracks page-level citations and integrates with SE Ranking's broader SEO platform. You can see which pages get cited in AI responses and compare that data to traditional search rankings.

Where it's weaker: citation tracking is newer (launched 2025), so the feature set is less mature than Promptwatch or Scrunch. No AI crawler logs or content gap analysis.

Pricing: Included with SE Ranking Pro plans ($119/mo+).

What most "AI visibility tools" actually track (spoiler: not pages)

The majority of tools marketed as "AI visibility platforms" track brand mentions, not page-level citations. They tell you "your brand appeared in 50 responses" but don't show which URLs drove those mentions.

Tools that track brand-level visibility only:

- Otterly.AI: affordable brand monitoring across AI engines, no page-level attribution

- Peec.ai: multi-language brand tracking, no page-level data

- AthenaHQ: brand visibility across 8+ AI engines, no URL-level breakdown

- Airefs: budget brand monitoring, no page attribution

- Mentions.so: brand mention tracking, no citation URLs

These tools answer "are we visible?" but not "which content drives visibility?" For brand awareness monitoring, they're fine. For content strategy, they're insufficient.

Comparison table: page-level tracking capabilities

| Platform | Page-level citations | AI crawler logs | Content gap analysis | Pricing |
|---|---|---|---|---|
| Promptwatch | Yes | Yes | Yes | $99-579/mo |
| Scrunch | Yes | No | No | $300-500/mo |
| Profound | Yes | No | No | $1,000+/mo |
| SE Visible | Yes | No | No | $119+/mo |
| Otterly.AI | No | No | No | $49-199/mo |
| Peec.ai | No | No | No | $99-299/mo |
| AthenaHQ | No | No | No | $149-499/mo |

AI crawler logs: the missing piece in most platforms

Page-level citation tracking shows which pages AI models cite. AI crawler logs show which pages they read.

The gap between these two metrics is critical:

- A page that gets crawled but not cited has an optimization problem (content quality, structure, or relevance)

- A page that gets cited without recent crawls suggests AI models are relying on stale data

- A page that gets neither crawled nor cited is invisible
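
The three cases above, plus the healthy case where a page is both crawled and cited, can be sketched as a small classifier. This is a minimal illustration, not how any platform implements it: the function name `classify_page`, the bucket labels, and the 90-day freshness window are all assumptions chosen for the example.

```python
from datetime import date, timedelta
from typing import Optional


def classify_page(
    last_crawled: Optional[date],
    citations: int,
    today: date,
    stale_after_days: int = 90,  # assumed freshness window, tune to your site
) -> str:
    """Bucket a page by comparing crawl recency with citation count."""
    recently_crawled = (
        last_crawled is not None
        and (today - last_crawled) <= timedelta(days=stale_after_days)
    )
    if citations > 0 and recently_crawled:
        return "healthy"
    if citations > 0:
        # Cited, but no recent crawl: the model may be working from old data.
        return "cited-from-stale-data"
    if recently_crawled:
        # Read but not cited: look at content quality, structure, relevance.
        return "crawled-not-cited"
    return "invisible"
```

Running this over every URL on a site gives a rough triage list: fix the "crawled-not-cited" pages first, since AI crawlers already reach them.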

Promptwatch is the only platform that surfaces AI crawler logs alongside citation data. You see:

- Which AI crawlers (for example, those behind ChatGPT, Claude, and Perplexity) hit which pages

- How often they return (crawl frequency)

- Errors they encounter (404s, timeouts, blocked resources)

- Which pages they read but don't cite

This closes the loop between indexing and visibility. Most platforms assume AI models have perfect knowledge of your site. Crawler logs show the reality.
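
If you want a rough version of this view from your own server logs, the sketch below counts AI crawler hits and error responses per page. It is not Promptwatch's implementation. It assumes access logs in Combined Log Format, and the user-agent token list is illustrative and incomplete; the function name `ai_crawler_hits` and the sample log lines are made up for the example.

```python
import re
from collections import Counter

# Substrings that AI vendors publish for their crawler user agents.
# Assumption: illustrative and incomplete; check each vendor's current docs.
AI_CRAWLER_TOKENS = {
    "GPTBot": "OpenAI",
    "OAI-SearchBot": "OpenAI",
    "ChatGPT-User": "OpenAI",
    "ClaudeBot": "Anthropic",
    "PerplexityBot": "Perplexity",
}

# Combined Log Format tail: "METHOD path HTTP/x" status bytes "referer" "agent"
LOG_LINE = re.compile(
    r'"(?:GET|HEAD|POST) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def ai_crawler_hits(lines):
    """Count hits and 4xx/5xx responses per (vendor, path) for AI crawlers."""
    hits, errors = Counter(), Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue  # skip lines that are not in the expected format
        vendor = next(
            (v for token, v in AI_CRAWLER_TOKENS.items() if token in match["agent"]),
            None,
        )
        if vendor is None:
            continue  # not a known AI crawler
        key = (vendor, match["path"])
        hits[key] += 1
        if int(match["status"]) >= 400:
            errors[key] += 1
    return hits, errors


# Hypothetical log lines (documentation IP ranges, simplified user agents).
sample_log = [
    '203.0.113.5 - - [10/Jan/2026:10:00:00 +0000] "GET /compare HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.1)"',
    '203.0.113.9 - - [10/Jan/2026:10:05:00 +0000] "GET /old-guide HTTP/1.1" 404 312 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '198.51.100.7 - - [10/Jan/2026:10:06:00 +0000] "GET /compare HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0) Chrome/120.0"',
]
hits, errors = ai_crawler_hits(sample_log)
```

Matching on user-agent substrings is simple but spoofable, so treat the counts as an estimate unless you also verify requests against the IP ranges the vendors publish.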

What to look for in a page-level tracking platform

1. Citation attribution, not just brand mentions

The platform should show the specific URL cited in each AI response. Brand-level metrics ("your brand appeared 50 times") are useless for content strategy.

2. Prompt-to-page mapping

You need to see which prompts trigger citations for each page. This reveals:

- Which topics each page ranks for in AI search

- Which angles (comparison, tutorial, review) drive citations

- Which competitor pages rank for prompts you don't
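
As a data structure, prompt-to-page mapping is just a grouping of citation records by URL. The sketch below assumes you can export citations as `(prompt, cited_url)` pairs; that export shape, the function name `prompts_by_page`, and the example URLs are hypothetical.

```python
from collections import defaultdict


def prompts_by_page(citations):
    """Group (prompt, cited_url) pairs into {url: sorted list of prompts}."""
    mapping = defaultdict(set)
    for prompt, url in citations:
        mapping[url].add(prompt)  # a set drops repeat citations of one prompt
    return {url: sorted(prompts) for url, prompts in mapping.items()}


# Hypothetical export rows.
rows = [
    ("best project management tools", "/compare"),
    ("asana vs monday", "/compare"),
    ("how to create a gantt chart", "/guides/gantt"),
    ("best project management tools", "/compare"),
]
page_prompts = prompts_by_page(rows)
```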

3. Multi-model coverage

Track citations across ChatGPT, Claude, Perplexity, Gemini, and Google AI Overviews at minimum. Each model has different citation patterns.

4. Historical data and trends

See how page-level visibility changes over time. Did a new article start getting cited? Did an old page lose visibility? Historical data shows what's working.

5. Integration with traffic data

Connect citation data to actual traffic. Which cited pages drive clicks? Which get cited but don't convert? Promptwatch offers three options for attribution: a code snippet, GSC integration, or server log analysis.

The content gap analysis advantage

Page-level tracking shows what's working. Content gap analysis shows what's missing.

Promptwatch's Answer Gap Analysis compares your citation footprint vs. competitors and surfaces:

- Prompts where competitors get cited but you don't

- The specific content angles AI models want (comparison, tutorial, listicle)

- The topics your site doesn't cover that competitors do

- Prompt volumes and difficulty scores to prioritize high-value gaps
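
At its core, a gap like this is a set difference ranked by volume. The sketch below is an assumption about the general idea, not Promptwatch's Answer Gap Analysis itself: `answer_gaps`, the competitor labels, and the volume figures are invented for illustration, and difficulty scores are left out.

```python
def answer_gaps(our_prompts, competitor_prompts, volume):
    """Return prompts where a competitor is cited and we are not.

    our_prompts: set of prompts that cite our pages
    competitor_prompts: {competitor: set of prompts that cite them}
    volume: {prompt: estimated volume}, used to rank gaps (missing = 0)
    """
    ours = set(our_prompts)
    gaps = set()
    for prompts in competitor_prompts.values():
        gaps |= set(prompts) - ours
    # Highest volume first; ties broken alphabetically for stable output.
    return sorted(gaps, key=lambda p: (-volume.get(p, 0), p))


# Hypothetical inputs.
ours = {"best project management tools"}
competitors = {
    "competitor-a": {"best project management tools", "gantt chart software"},
    "competitor-b": {"gantt chart software", "kanban vs scrum"},
}
volumes = {"gantt chart software": 900, "kanban vs scrum": 400}
gaps = answer_gaps(ours, competitors, volumes)
```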

Most platforms stop at "here's your visibility score." Promptwatch shows you the gaps, then generates content to fill them (AI writing agent grounded in 880M+ citation data points).

This is the difference between a monitoring tool and an optimization platform.

Real-world use case: tracking citations for a SaaS product

Consider a B2B SaaS company tracking AI visibility for its project management tool:

Brand-level tracking (Otterly.AI, Peec.ai):

- "Your brand appeared in 120 AI responses this month"

- "Visibility increased 15% vs. last month"

- No insight into which content drove those mentions

Page-level tracking (Promptwatch, Scrunch):

- Product comparison page cited 45 times for "best project management tools" prompts

- Tutorial on Gantt charts cited 30 times for "how to create a Gantt chart" prompts

- Pricing page cited 15 times for "[competitor] pricing" prompts

- Homepage cited 10 times (mostly brand-specific prompts)

- Blog post on remote team management cited 20 times

Actionable insight: The comparison page and tutorial drive the most citations. Create more comparison content (vs. Asana, vs. Monday.com) and more how-to guides. The pricing page gets cited for competitor prompts -- opportunity to create "[competitor] alternative" content.
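
To make the split concrete, the per-page counts in this example can be turned into citation shares. The counts come from the example above; the dictionary labels are shorthand, and the snippet assumes each counted citation belongs to exactly one page.

```python
# Per-page citation counts from the example above.
citations = {
    "Product comparison page": 45,
    "Gantt chart tutorial": 30,
    "Remote team management blog post": 20,
    "Pricing page": 15,
    "Homepage": 10,
}

total = sum(citations.values())  # 120, the same as the brand-level total
shares = {page: round(100 * n / total, 1) for page, n in citations.items()}

for page, pct in shares.items():
    print(f"{page}: {pct}%")
```

The comparison page and the tutorial together account for 62.5% of citations, which is the case for prioritizing those two formats.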

Brand-level tracking gives you a number. Page-level tracking gives you a strategy.

How AI models decide which pages to cite

AI models don't cite pages randomly. Citation patterns reveal what they prefer:

Content format preferences

- Listicles and comparisons get cited most often ("10 best X", "X vs. Y")

- How-to guides get cited for instructional prompts

- Product pages get cited for transactional prompts ("buy X", "X pricing")

- Landing pages rarely get cited unless they have unique data or research

Content depth signals

- Pages with 1,500+ words get cited more than short pages

- Pages with clearly structured content (tables, lists, headings) get cited more

- Pages with original data or research get cited more

- Pages with clear authorship and expertise signals get cited more

Freshness matters

- AI models prefer recently updated content

- Pages with "2026" in the title get cited more for current-year prompts

- Stale content (last updated 2+ years ago) gets cited less

Page-level tracking reveals these patterns for your site. You see which formats and topics AI models prefer to cite, then create more of that content.

The Reddit and YouTube citation factor

AI models cite Reddit threads and YouTube videos more often than most brands realize. Promptwatch surfaces these citations alongside your owned content:

- Which Reddit discussions mention your brand and get cited by AI models

- Which YouTube videos about your product get cited

- Which competitor Reddit threads and videos get cited for prompts you want to rank for

This reveals opportunities to participate in Reddit discussions, create YouTube content, or sponsor creators in spaces where AI models already look for answers.

Most page-level tracking tools ignore Reddit and YouTube entirely. Promptwatch treats them as first-class citation sources.

Pricing comparison: page-level tracking platforms

| Platform | Entry price | Page tracking | AI crawler logs | Content gaps | Free trial |
|---|---|---|---|---|---|
| Promptwatch | $99/mo | Yes | Yes (Pro+) | Yes | Yes |
| Scrunch | $300/mo | Yes | No | No | No |
| Profound | $1,000+/mo | Yes | No | No | Custom |
| SE Visible | $119/mo | Yes | No | No | Yes |
| Otterly.AI | $49/mo | No | No | No | Yes |
| Peec.ai | $99/mo | No | No | No | Yes |

For small teams and agencies, Promptwatch offers the best price-to-feature ratio. For enterprise buyers with governance needs, Profound is the premium option. For teams already using SE Ranking, SE Visible is a natural add-on.

What page-level tracking reveals about AI search behavior

Tracking citations at the page level exposes patterns you can't see with brand-level metrics:

1. AI models prefer specific content formats

Comparison pages get cited 3-5x more than product pages. How-to guides get cited more than landing pages. Listicles get cited more than long-form articles.

2. Different AI models cite different pages

ChatGPT prefers listicles and comparisons. Perplexity cites research and data-heavy pages. Claude cites how-to guides and tutorials. Google AI Overviews cites pages with structured data.

3. Citation volume doesn't always translate into traffic

Pages that get cited often in AI responses don't always drive clicks. The inverse is also true -- some pages drive traffic without many citations, typically pages that surface for brand-specific prompts.

4. Competitor pages reveal content gaps

Seeing which competitor pages get cited for prompts you want to rank for shows exactly what content you're missing.

Page-level tracking turns AI visibility from a vanity metric into a content strategy tool.

The future of page-level tracking: attribution and revenue

The next frontier is connecting page-level citations to revenue. Which cited pages drive conversions? Which drive qualified traffic vs. junk traffic?

Promptwatch's traffic attribution (code snippet, GSC integration, server logs) closes this loop. You see:

- Which cited pages drive the most traffic

- Which cited pages drive the highest-quality traffic (time on site, pages per session, conversions)

- Which prompts drive the most valuable traffic

- ROI of AI visibility efforts (traffic value from cited pages)

Most platforms stop at visibility metrics. Promptwatch connects visibility to business outcomes.
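
A back-of-the-envelope version of this report joins citation counts with analytics data per URL. The sketch below is an assumption about how such a join could look, not Promptwatch's attribution logic: the function name `attribution_report`, the input dictionaries, and the figures are all hypothetical.

```python
def attribution_report(citations, sessions, conversions):
    """Join per-URL citation, session, and conversion counts into one report."""
    report = []
    for url, cited in citations.items():
        visits = sessions.get(url, 0)
        converted = conversions.get(url, 0)
        report.append({
            "url": url,
            "citations": cited,
            "sessions": visits,
            # Guard against division by zero for uncited or unvisited pages.
            "sessions_per_citation": visits / cited if cited else 0.0,
            "conversion_rate": converted / visits if visits else 0.0,
        })
    # Pages that drive the most traffic come first.
    return sorted(report, key=lambda row: row["sessions"], reverse=True)


# Hypothetical monthly figures.
report = attribution_report(
    citations={"/compare": 45, "/pricing": 15},
    sessions={"/compare": 90, "/pricing": 60},
    conversions={"/compare": 9, "/pricing": 3},
)
```

In this made-up data the pricing page earns fewer citations but more sessions per citation, which is the kind of pattern a visibility score alone would hide.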

Choosing the right platform for your needs

Choose Promptwatch if:

- You need page-level tracking, content gap analysis, and AI crawler logs in one platform

- You want to generate content that ranks in AI search, not just monitor visibility

- You're a small team or agency that needs to move fast and see ROI quickly

- Budget: $99-579/mo

Choose Scrunch if:

- You need broad AI engine coverage with reliable page-level tracking

- You prioritize clean reporting and stakeholder-friendly dashboards

- You don't need content gap analysis or crawler logs

- Budget: $300-500/mo

Choose Profound if:

- You're an enterprise buyer with governance and audit trail requirements

- You need deep page-level attribution with advanced "why did we appear?" analysis

- Price is not your deciding factor

- Budget: $1,000+/mo

Choose SE Visible if:

- You're already using SE Ranking for SEO and want to add AI visibility tracking

- You need page-level citations integrated with traditional search rankings

- You're okay with a newer, less mature feature set

- Budget: $119+/mo

Skip brand-level tools (Otterly.AI, Peec.ai, AthenaHQ) if:

- You need to understand which content drives visibility, not just whether you're visible

- You're building a content strategy based on AI search data

- You want to connect visibility to traffic and revenue

Page-level tracking is the difference between knowing you're visible and knowing what to do about it.