Key takeaways

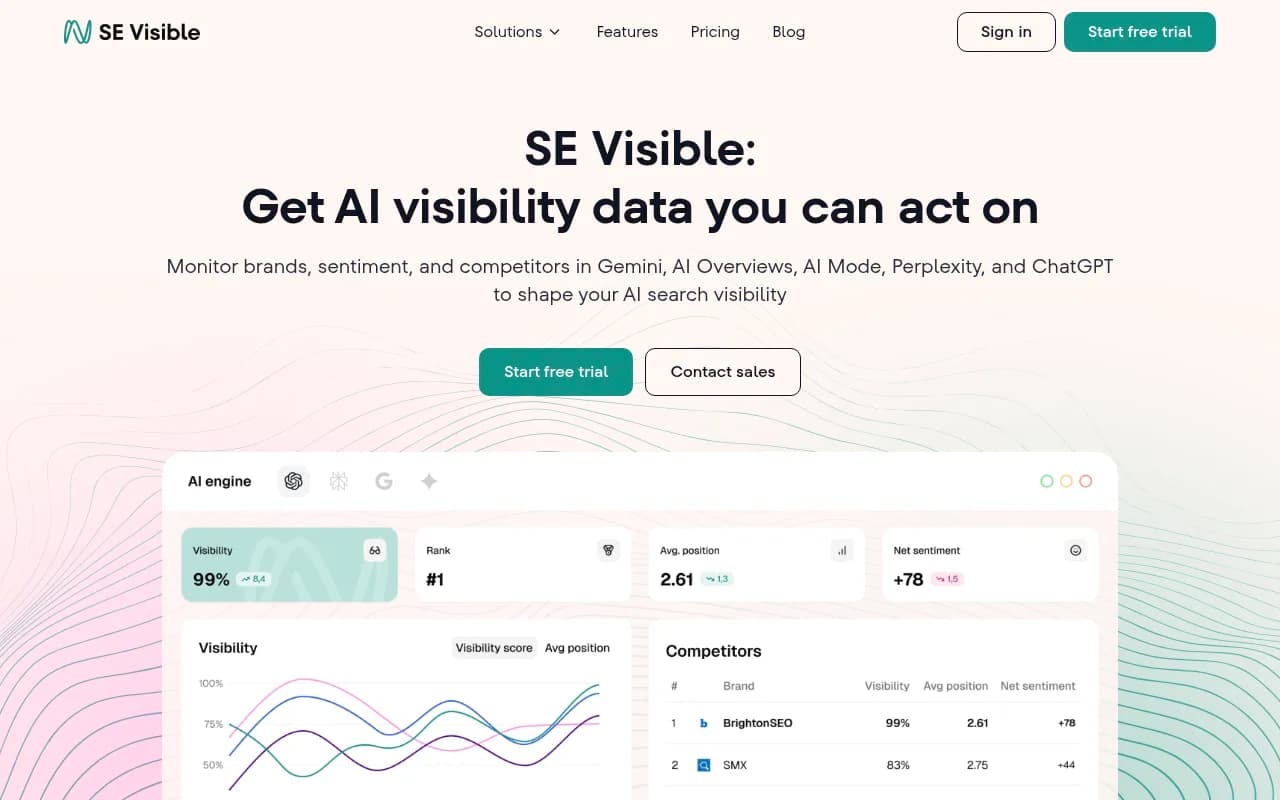

- Traditional SEO tracks rankings, clicks, and backlinks. AI search visibility tracks citations, mentions, and sentiment across LLM responses -- completely different metrics.

- Ranking first on Google doesn't mean you appear in ChatGPT or Perplexity answers. The two channels are increasingly independent.

- AI search rewards content clarity, topical authority, and structured answers. Backlink counts matter far less.

- Most traditional SEO tools (Google Search Console, Moz, Ahrefs) show you little or nothing of what's happening in AI search.

- Dedicated GEO/AI visibility platforms are now necessary to track and improve how AI models cite your brand.

The uncomfortable truth about rankings in 2026

Here's a scenario that's playing out for a lot of marketing teams right now: your Google rankings are holding steady, organic traffic looks fine in Search Console, and then someone on the team asks "why isn't our brand showing up when people ask ChatGPT for recommendations in our category?"

There's no answer in your existing dashboard, because traditional SEO tools weren't built to answer that question.

This is the core tension in 2026. Search behavior has split into two distinct channels -- traditional web search (Google, Bing) and AI-generated answers (ChatGPT, Perplexity, Claude, Gemini, Google AI Overviews). Brands that only track one are flying half-blind.

The good news is that the differences are now well understood. Let's go through what actually changes when you move from traditional SEO tracking to AI search visibility tracking.

What traditional SEO tracking actually measures

Traditional SEO has a well-established measurement framework built over two decades. The core metrics are:

- Keyword rankings (position 1-100 for specific queries)

- Organic click-through rates

- Backlink counts and domain authority

- Crawl health and indexation

- Page-level traffic from search

Tools like Google Search Console, Moz, Ahrefs, and Semrush are built around this model. You pick keywords, track where you rank, and optimize pages to move up.

The underlying assumption is that search results are a ranked list of links, and your goal is to appear near the top of that list. Higher position = more clicks = more traffic. The model is linear and measurable.

This still works for a significant portion of search traffic. But it's an incomplete picture of how people discover information in 2026.

How AI search visibility works differently

When someone asks ChatGPT "what's the best project management tool for remote teams?" or asks Perplexity "which CRM is best for a 10-person sales team?", they don't get a ranked list of links. They get a synthesized answer with a handful of cited sources -- or sometimes no visible sources at all.

Your brand either appears in that answer or it doesn't. There's no position 4 or position 7. It's binary in a way traditional SEO isn't.

This changes the tracking problem fundamentally. Instead of asking "where do I rank for this keyword?", you're asking:

- Does my brand get mentioned when users ask questions in my category?

- Which AI models cite me, and for which types of queries?

- How does the AI describe my brand when it does mention me?

- Which competitors appear instead of me, and why?

- What content on my site is actually being cited?

These questions require a completely different kind of data collection. You can't pull this from Google Search Console. You have to actually query the AI models, capture their responses, and analyze the patterns at scale.
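As a minimal sketch of the "capture and analyze" step, the snippet below computes a per-model citation rate from responses that have already been collected. The tuple layout, the function names, and the brand "Acme CRM" are hypothetical; how the responses are gathered from each AI model is out of scope here.

```python
import re
from collections import defaultdict


def mentions_brand(text: str, brand: str) -> bool:
    """Case-insensitive whole-word match for the brand name."""
    return re.search(rf"\b{re.escape(brand)}\b", text, re.IGNORECASE) is not None


def citation_rate_by_model(responses, brand):
    """responses: iterable of (model, prompt, answer_text) tuples (assumed layout)."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for model, _prompt, answer in responses:
        totals[model] += 1
        if mentions_brand(answer, brand):
            hits[model] += 1
    # Share of captured answers per model that mention the brand at least once.
    return {model: hits[model] / totals[model] for model in totals}


# Made-up captured responses, for illustration only.
captured = [
    ("chatgpt", "best CRM for a 10-person sales team?", "Popular options include Acme CRM and Globex."),
    ("chatgpt", "which CRM works for remote teams?", "Globex is a common choice."),
    ("perplexity", "best CRM for a 10-person sales team?", "Acme CRM is often recommended."),
]
rates = citation_rate_by_model(captured, "Acme CRM")
print(rates)
```

A plain name match like this misses misspellings and product nicknames, so treat the result as a rough baseline rather than a precise measurement.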

The metrics gap: what you're missing without AI visibility tracking

Here's a side-by-side look at how the two tracking approaches differ:

| Dimension | Traditional SEO tracking | AI search visibility tracking |
|---|---|---|
| Core metric | Keyword ranking position | Brand citation rate / mention share |
| Data source | Google index data | Live LLM query responses |
| Query type | Keywords | Natural language prompts |
| Result format | Ranked link list | Synthesized text answer |
| Competitor view | SERP overlap | Citation share vs competitors |
| Content signal | Backlinks + on-page signals | Topical authority + answer quality |
| Brand sentiment | Not tracked | Sentiment in AI responses |
| Traffic attribution | Direct (GA4/GSC) | Requires separate attribution layer |
| Update frequency | Daily/weekly rank checks | Prompt-level response capture |
| Tools needed | GSC, Ahrefs, Moz, Semrush | Dedicated GEO/AI visibility platforms |

The gap in the bottom row is where most teams get stuck. Traditional SEO tools don't have the infrastructure to query ChatGPT 10,000 times a month, capture responses, extract brand mentions, and score sentiment. That's a different technical problem entirely.
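To make "citation share vs competitors" concrete, here is a small sketch that turns a batch of captured answers into each brand's share of all brand mentions. The brand names and answers are invented, and simple substring matching is an assumption that a real pipeline would likely replace with something stricter.

```python
from collections import Counter


def mention_share(answers, brands):
    """Return each brand's share of all brand mentions across the answers."""
    counts = Counter()
    for answer in answers:
        lowered = answer.lower()
        for brand in brands:
            # Count at most one mention per brand per answer.
            if brand.lower() in lowered:
                counts[brand] += 1
    total = sum(counts.values())
    return {brand: (counts[brand] / total if total else 0.0) for brand in brands}


# Hypothetical captured answers.
answers = [
    "Acme and Globex are both solid.",
    "Globex is the usual pick.",
    "Consider Initech or Globex.",
]
share = mention_share(answers, ["Acme", "Globex", "Initech"])
print(share)
```

Counting one mention per answer keeps a single long response from dominating the share.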

Why backlinks matter less (but aren't irrelevant)

One of the most debated questions in 2026 is whether backlinks still matter for AI visibility. The honest answer: they matter, but not in the same way.

In traditional SEO, a link from a high-authority domain directly improves your ranking for target keywords. The mechanism is explicit and well-documented.

In AI search, the relationship is more indirect. AI models are trained on large datasets that include web content, and high-authority sites with lots of inbound links are more likely to be included in training data and cited as sources. So there's a correlation between traditional domain authority and AI citation rates -- but it's not a clean 1:1 relationship.

What matters more for AI visibility is whether your content actually answers questions clearly and completely. A well-structured FAQ page on a mid-authority domain can outperform a thin page on a high-authority domain in AI citations. The AI is optimizing for answer quality, not link equity.

This is why a Reddit thread from a community discussion can end up cited in a ChatGPT answer alongside or instead of your carefully optimized product page. The AI found the Reddit thread more useful for answering the specific question.

The prompt vs keyword distinction

Traditional SEO is built around keywords -- short phrases people type into a search box. "Best CRM software", "project management tools", "email marketing platform". You track rankings for these keywords and optimize pages to target them.

AI search is built around prompts -- full questions and conversational queries. "I'm a solo founder with 3 salespeople, what CRM should I use?", "We're switching from HubSpot, what are the best alternatives?", "Which email marketing platform works best with Shopify?"

These prompts have context, persona, and intent baked in. The same brand might appear for one persona's prompt and not another's. A tool that's great for enterprise might dominate prompts from enterprise buyers but be invisible in prompts from SMBs.

This means AI visibility tracking needs to cover a much wider prompt space than keyword tracking. You're not tracking 50 keywords -- you're tracking hundreds of prompt variations across different buyer personas, use cases, and competitor comparison queries.
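One way to see how quickly the prompt space grows is to generate it from a few dimensions. The sketch below crosses personas, use cases, and a competitor with two prompt templates; every list entry and template is a made-up example, not a recommended prompt set.

```python
from itertools import product

# Hypothetical dimensions; replace with your own buyer research.
personas = ["solo founder", "sales manager at a 200-person company"]
use_cases = ["CRM", "email marketing platform"]
competitors = ["HubSpot"]
templates = [
    "I'm a {persona}. Which {use_case} should I use?",
    "What are the best alternatives to {competitor} for a {persona} choosing a {use_case}?",
]


def build_prompts(templates, personas, use_cases, competitors):
    """Expand every template across all persona/use-case/competitor combinations."""
    prompts = []
    for template, persona, use_case, competitor in product(
        templates, personas, use_cases, competitors
    ):
        prompts.append(
            template.format(persona=persona, use_case=use_case, competitor=competitor)
        )
    # Templates that ignore {competitor} would repeat once more competitors
    # are added, so drop duplicates while keeping the original order.
    return list(dict.fromkeys(prompts))


prompts = build_prompts(templates, personas, use_cases, competitors)
print(len(prompts))
for prompt in prompts:
    print(prompt)
```

Two templates, two personas, and two use cases already yield eight prompts. Adding a few more values to each dimension gets into the hundreds, which is why prioritization matters.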

Promptwatch handles this by tracking prompt volumes and difficulty scores, so you can prioritize which prompts are worth winning rather than trying to cover everything at once.

Content strategy shifts: from ranking pages to answer-worthy content

Traditional SEO content strategy is largely about targeting keywords with dedicated pages. Write a page targeting "best CRM for small business", optimize it with the right headings and internal links, build some backlinks, and try to rank.

AI search content strategy is about becoming the most useful, citable source on a topic. That means:

- Answering specific questions directly and completely, not burying the answer in 2,000 words of padding

- Covering topics with enough depth that AI models see you as an authoritative source

- Structuring content so AI crawlers can extract clear answers (headers, lists, concise definitions)

- Publishing content that addresses the exact prompts your target buyers are using

The practical implication is that content gaps look different. In traditional SEO, a gap is a keyword you're not ranking for. In AI search, a gap is a prompt your competitors appear in but you don't -- even if you technically have a page that covers the topic.

Tools like Promptwatch surface these answer gaps specifically: here are the prompts where your competitors are visible and you're not, here's what content you're missing, here's what to write.

This is a fundamentally more actionable kind of gap analysis than seeing "you rank #8 for this keyword."
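A prompt gap in this sense can be computed directly from captured responses. The sketch below assumes you already have, for each prompt, the set of brands that appeared in the answer. The data structure and brand names are hypothetical, and this is not a description of how any particular platform works.

```python
def prompt_gaps(visibility, brand):
    """visibility maps prompt -> set of brands mentioned in the AI answer.

    Returns the prompts where at least one other brand appears and `brand` does not,
    together with the brands that did appear.
    """
    gaps = {}
    for prompt, brands in visibility.items():
        if brands and brand not in brands:
            gaps[prompt] = sorted(brands)
    return gaps


# Hypothetical visibility data.
visibility = {
    "best CRM for a 10-person sales team?": {"Globex", "Initech"},
    "which CRM integrates with Shopify?": {"Acme", "Globex"},
    "cheapest CRM for freelancers?": set(),
}
gaps = prompt_gaps(visibility, "Acme")
print(gaps)
```

Prompts where no brand appears at all are left out, since there is no competitor to displace there.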

Tracking AI crawler activity: a new technical layer

Traditional SEO has technical SEO -- crawl errors, indexation issues, page speed, structured data. You use tools like Screaming Frog to audit your site and fix problems that prevent Google from properly indexing your content.

AI search has an equivalent: AI crawler activity. ChatGPT, Perplexity, Claude, and other AI systems have their own crawlers (for example OpenAI's GPTBot, PerplexityBot, and Anthropic's ClaudeBot) that visit your site to gather content, either for model training or to retrieve pages when answering a query. These crawlers behave differently from Googlebot and have different requirements.

Questions you need to answer:

- Which AI crawlers are visiting your site?

- Which pages are they reading?

- Are they hitting errors or getting blocked?

- How often do they return?

Most traditional SEO tools don't capture this data. It requires monitoring your server logs or implementing a dedicated tracking layer. Some GEO platforms now offer this as a feature -- Promptwatch's crawler logs show real-time AI crawler activity, which pages they read, and errors they encounter.
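If you go the server-log route, a rough first pass could look like the sketch below. It assumes logs in the common "combined" format and matches a short list of user-agent tokens. The token list is illustrative and incomplete, so check each vendor's current documentation, and the sample log lines are made up.

```python
import re
from collections import Counter

# Illustrative, incomplete list of AI crawler user-agent tokens (an assumption).
AI_CRAWLER_TOKENS = ("GPTBot", "OAI-SearchBot", "ChatGPT-User", "PerplexityBot", "ClaudeBot")

# Matches the request, status, size, referrer, and user-agent fields of a combined log line.
LOG_LINE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def summarize_ai_crawls(lines):
    """Count hits and error responses (status >= 400) per (crawler, path)."""
    hits, errors = Counter(), Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue
        agent = match["agent"].lower()
        crawler = next((t for t in AI_CRAWLER_TOKENS if t.lower() in agent), None)
        if crawler is None:
            continue  # not a known AI crawler
        key = (crawler, match["path"])
        hits[key] += 1
        if int(match["status"]) >= 400:
            errors[key] += 1
    return hits, errors


# Made-up log lines for illustration.
sample_log = [
    '203.0.113.5 - - [10/Jan/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.1)"',
    '203.0.113.9 - - [10/Jan/2026:10:01:00 +0000] "GET /product HTTP/1.1" 403 312 "-" "Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
    '198.51.100.2 - - [10/Jan/2026:10:02:00 +0000] "GET / HTTP/1.1" 200 900 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]
hits, errors = summarize_ai_crawls(sample_log)
print(hits)
print(errors)
```

User-agent strings can be spoofed, so a stricter version would also verify requests against the IP ranges the vendors publish.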

This matters because if Perplexity's crawler can't access your key product pages, those pages won't get cited in Perplexity answers, regardless of how well-optimized they are.
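A quick way to test for accidental blocking is to run your robots.txt through Python's standard `urllib.robotparser`. The robots.txt content, the user-agent names, and the URL below are hypothetical examples. The check only covers robots.txt rules, not firewalls or CDN-level bot blocking.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that blocks one AI crawler entirely.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""


def crawler_access(robots_txt, agents, urls):
    """Return {(agent, url): allowed} according to the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {
        (agent, url): parser.can_fetch(agent, url)
        for agent in agents
        for url in urls
    }


access = crawler_access(
    ROBOTS_TXT,
    ["GPTBot", "PerplexityBot"],
    ["https://example.com/product"],
)
for (agent, url), allowed in access.items():
    print(f"{agent} -> {url}: {'allowed' if allowed else 'blocked'}")
```

In this example GPTBot is blocked from the product page while PerplexityBot falls under the wildcard rule and is allowed.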

Attribution: connecting AI visibility to actual revenue

Traditional SEO attribution is relatively straightforward. Google Search Console shows you which queries drove clicks, Google Analytics shows you what those visitors did, and you can connect organic traffic to conversions.

AI search attribution is harder. When someone reads a ChatGPT answer that mentions your brand and then comes to your site on their own, the visit is not labeled as AI referral traffic in GA4: typing your URL shows up as direct traffic, and searching your brand name shows up as organic search. The connection to the AI answer is invisible.

This is a real problem for justifying investment in AI visibility. If you can't show that AI citations are driving revenue, it's hard to get budget for GEO work.

The solutions emerging in 2026 include:

- UTM-tagged links in AI responses (when AI models include clickable citations)

- JavaScript snippet tracking that captures AI referral signals

- Server log analysis to identify AI-originated sessions

- Brand search lift measurement (increased branded search volume after AI visibility improvements)

None of these are perfect, but combining them gives a reasonable picture of AI-driven revenue impact.
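As a sketch of the referral-signal approach, the function below labels a session as AI-originated when either the referrer host or a `utm_source` parameter on the landing URL matches a known AI product domain. The host list is an assumption and will need maintaining, and the example URLs are invented.

```python
from urllib.parse import parse_qs, urlparse

# Assumed mapping of referrer hosts to AI products; extend as needed.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
}


def classify_session(referrer: str, landing_url: str) -> str:
    """Return an AI product name, 'direct', or 'other' for one session."""
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    if host in AI_REFERRER_HOSTS:
        return AI_REFERRER_HOSTS[host]
    # Fall back to a utm_source tag on the landing URL, if one is present.
    query = parse_qs(urlparse(landing_url).query)
    utm_source = query.get("utm_source", [""])[0].lower()
    if utm_source in AI_REFERRER_HOSTS:
        return AI_REFERRER_HOSTS[utm_source]
    return "direct" if not referrer else "other"


print(classify_session("https://www.perplexity.ai/search?q=crm", "https://example.com/"))
print(classify_session("", "https://example.com/?utm_source=chatgpt.com"))
print(classify_session("", "https://example.com/"))
```

This only catches visits that carry a referrer or tag. The "read the answer, then type the URL" path described above still lands in the direct bucket, which is why brand search lift is worth tracking alongside it.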

Which tools cover which channels

Here's a practical breakdown of what different tool categories cover:

| Tool type | Traditional SEO | AI search visibility | Content gap analysis | AI traffic attribution |

|---|---|---|---|---|

| Google Search Console | Yes | No | No | Partial |

| Ahrefs / Moz / Semrush | Yes | Limited | No | No |

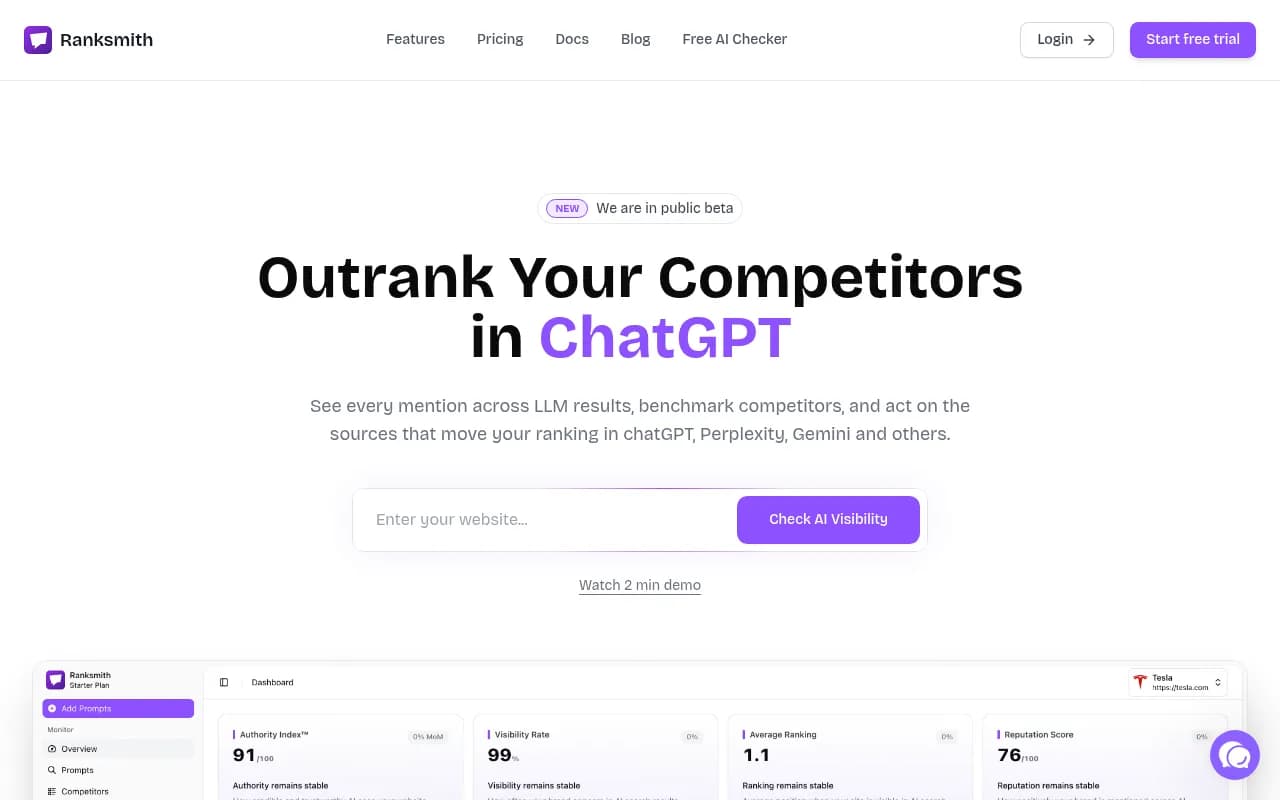

| Dedicated GEO platforms (Promptwatch, Profound, Otterly) | No | Yes | Varies | Varies |

| Brand monitoring (Brand24) | Partial | Partial | No | No |

| Marketing attribution (HockeyStack) | Partial | Partial | No | Yes |

The honest takeaway: you probably need both a traditional SEO tool and a dedicated AI visibility platform in 2026. They're not substitutes for each other -- they're measuring different things.

What "winning" looks like in AI search vs traditional SEO

In traditional SEO, winning is clear: you rank #1 for your target keywords, you get the most clicks, you drive the most organic traffic. The scoreboard is public and updated daily.

In AI search, winning is more nuanced. A brand that appears in 60% of relevant ChatGPT responses, is described positively, and gets cited alongside specific use cases it wants to own -- that brand is winning. But the scoreboard isn't public, it changes with every model update, and it varies across different AI systems.

This is why sentiment tracking matters in AI visibility in a way it doesn't in traditional SEO. It's not just whether you appear -- it's how you're described. An AI that mentions your brand but calls it "expensive and complex" can be worse than not appearing at all.

Practical starting points for 2026

If you're trying to get a handle on AI search visibility alongside your existing SEO work, here's a reasonable starting sequence:

- Audit your current AI visibility. Query ChatGPT, Perplexity, and Claude with 10-15 prompts your target buyers would use. See if your brand appears. This takes 30 minutes and gives you a baseline.

- Set up dedicated AI visibility tracking. Pick a platform that monitors the AI models your audience uses. Promptwatch covers 10 models including ChatGPT, Perplexity, Claude, Gemini, and Google AI Overviews.

- Identify your biggest prompt gaps. Where are competitors appearing and you're not? These are your highest-priority content opportunities.

- Audit your AI crawler access. Check your robots.txt and server logs to confirm AI crawlers can access your key pages.

- Create content specifically designed to answer the prompts you're missing. This is different from traditional SEO content -- it's structured around questions, not keywords.

- Track the results. Watch your citation rates improve as AI models start finding and citing your new content.

The bottom line

Traditional SEO isn't dead -- Google still drives enormous traffic and ranking signals still matter. But treating traditional SEO metrics as a complete picture of your brand's search visibility is increasingly a mistake.

The brands that are pulling ahead in 2026 are the ones running both tracks in parallel: maintaining their traditional SEO foundation while building AI visibility on top of it. The tools, metrics, and content strategies are different enough that you can't just bolt AI visibility onto your existing SEO workflow. It requires a separate discipline.

The good news is that the discipline is now well-defined. The tools exist, the metrics are established, and the content strategies are proven. The question is whether your team has started building this capability yet.